According to this mentality, admitting when someone on the opposing side makes a decent point (i.e. “making a concession”) is like giving up points in a game – it’s like losing – and therefore you can never do it willingly. It doesn’t matter what’s actually true; what matters is that you deny your opponents the satisfaction of having scored points against you. This isn’t always something that you even need to be consciously aware of – i.e. realizing when your opponent is making a good point and just cynically refusing to admit it for strategic purposes – a lot of times it can take a more subconscious form, where you don’t allow yourself to even notice when they’re making a good point. Like in that Orwell quotation describing Crimestop, it’s not so much that you consciously think “Hmm, yes, I notice that my opponent’s argument is superior to my own, therefore I won’t acknowledge it” – it’s more of a subtle feeling of frustration that your opponent’s argument isn’t instantly crumbling under the force of your effortless refutations like it should, accompanied by a nagging sense of annoyance at not knowing exactly how to make it so. Again, the more you perceive the issue at hand to be central to your side’s ideology, the stronger this behavior is.

And this tendency to never want to cede any ground to your enemy doesn’t just skew the way you handle opposing ideas in an argument; it can also affect your perception of every single person and event that might have some connection to your (or your opponent’s) ideology. If you see a news story about a prominent figure using some horrible derogatory slur, for instance, or a crazed criminal attacking people in the streets, your response will probably be roughly the same as everyone else’s if the perpetrator is ideologically neutral. However, the moment the story takes on an ideological shade – if new information emerges that the wrongdoer subscribes to a particular religious or political belief system, for example – then you’ll immediately be tempted to start rationalizing in one direction or another. If they’re part of the same team you are, then you’ll start thinking up excuses for why what they said wasn’t actually that bad, or why their actions weren’t motivated by their beliefs but by some other factor like mental illness. On the other hand, if they’re part of the opposing team, then you’ll reject any such excuses out of hand and be more inclined to exaggerate the severity of the wrongdoing, claiming that it’s the most reprehensible atrocity you’ve ever seen and reflects the moral bankruptcy of the ideology behind it.

Alexander provides some insight on how ideology can skew our perception of such events:

I have found a pattern: when people consider an idea in isolation, they tend to make good decisions. When they consider an idea a symbol of a vast overarching narrative, they tend to make very bad decisions.

Let me offer [an] example.

A white man is accused of a violent attack on a black woman. In isolation, well, either he did it or he didn’t, and without any more facts there’s no use discussing it.

But what if this accusation is viewed as a symbol? What if you have been saying for years that racism and sexism are endemic in this country, and that whites and males are constantly abusing blacks and females, and they’re always getting away with it because the police are part of a good ole’ boys network who protect their fellow privileged whites?

Well, right now, you’ll probably still ask for the evidence. But if I gave you some evidence, and it was complicated, you’d probably interpret it in favor of the white man’s guilt. The heart has its reasons that reasons know not of, and most of them suck. We unconsciously make decisions based on our own self-interest and what makes us angry or happy, and then later we find reasons why the evidence supports them. If I have a strong interest in a narrative of racism, then I will interpret the evidence to support accusations of racism.

Lest I sound like I’m picking on the politically correct, I’ve seen scores of people with the opposite narrative. You know, political correctness has grown rampant in our society, women and minorities have been elevated to a status where they can do no wrong, the liberal intelligentsia always tries to pin everything on the white male. When the person with this narrative hears the evidence in this case, they may be more likely to believe the white man – especially if they’d just listened to their aforementioned counterpart give their speech about how this proves the racist and sexist tendencies of white men.

Yes, I’m thinking of the Duke lacrosse case.

The problem here is that there are two different questions here: whether this particular white male attacked this particular black woman, and whether our society is racist or “reverse racist”. The first question definitely has one correct answer which while difficult to ascertain is philosophically simple, whereas the second question is meaningless, in the same technical sense that “Islam is a religion of peace” is meaningless. People are conflating these two questions, and acting as if the answer to the second determines the answer to the first.

Which is all nice and well unless you’re one of the people involved in the case, in which case you really don’t care about which races are or are not privileged in our society as much as you care about not being thrown in jail for a crime you didn’t commit, or about having your attacker brought to justice.

I think this is the driving force behind a lot of politics. Let’s say we are considering a law mandating businesses to lower their pollution levels. So far as I understand economics, the best decision-making strategy is to estimate how much pollution is costing the population, how much cutting pollution would cost business, and if there’s a net profit, pass the law. Of course it’s more complicated, but this seems like a reasonable start.

What actually happens? One side hears the word “pollution” and starts thinking of hundreds of times when beautiful pristine forests were cut down in the name of corporate greed. This links into other narratives about corporate greed, like how corporations are oppressing their workers in sweatshops in third world countries, and since corporate executives are usually white and third world workers usually not, let’s add racism into the mix. So this turns into one particular battle in the war between All That Is Right And Good and Corporate Greed That Destroys Rainforests And Oppresses Workers And Is Probably Racist.

The other side hears the words “law mandating businesses” and starts thinking of a long history of governments choking off profitable industry to satisfy the needs of the moment and their re-election campaign. The demonization of private industry and subsequent attempt to turn to the government for relief is a hallmark of communism, which despite the liberal intelligentsia’s love of it killed sixty million people. Now this is a battle in the war between All That Is Right And Good and an unholy combination of Naive Populism and Soviet Russia.

[…]

Now, if the economists do their calculations and report that actually the law would cause more harm than good, do you think the warriors against Corporate Greed That Destroys Rainforests And Oppresses Workers And Is Probably Racist are going to say “Oh, okay then” and stand down? In the face of Corporate Greed That Destroys Rainforests And Oppresses Workers And Is Probably Racist?!?

[…]

I call this mistake “missing the trees for the forest”. If you have a specific case you need to judge, judge it separately on its own merits, not the merits of what agendas it promotes or how it fits with emotionally charged narratives.

He gives one more example:

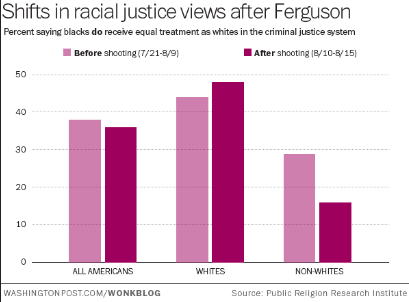

You can see that after the Ferguson shooting [of 2014], the average American became a little less likely to believe that blacks were treated equally in the criminal justice system. This makes sense, since the Ferguson shooting was a much-publicized example of the criminal justice system treating a black person unfairly.

But when you break the results down by race, a different picture emerges. White people were actually a little more likely to believe the justice system was fair after the shooting. Why? I mean, if there was no change, you could chalk it up to white people believing the police’s story that the officer involved felt threatened and made a split-second bad decision that had nothing to do with race. That could explain no change just fine. But being more convinced that justice is color-blind? What could explain that?

My guess – before Ferguson, at least a few people interpreted this as an honest question about race and justice. After Ferguson, everyone mutually agreed it was about politics.

[…]

Anyone who thought that the question in that poll was just a simple honest question about criminal justice was very quickly disabused of that notion. It was a giant Referendum On Everything, a “do you think the Blue Tribe is right on every issue and the Red Tribe is terrible and stupid, or vice versa?” And it turns out many people who when asked about criminal justice will just give the obvious answer, have much stronger and less predictable feelings about Giant Referenda On Everything.

In my last post, I wrote about how people feel when their in-group is threatened, even when it’s threatened with an apparently innocuous point they totally agree with:

I imagine [it] might feel like some liberal US Muslim leader, when he goes on the O’Reilly Show, and O’Reilly ambushes him and demands to know why he and other American Muslims haven’t condemned beheadings by ISIS more, demands that he criticize them right there on live TV. And you can see the wheels in the Muslim leader’s head turning, thinking something like “Okay, obviously beheadings are terrible and I hate them as much as anyone. But you don’t care even the slightest bit about the victims of beheadings. You’re just looking for a way to score points against me so you can embarrass all Muslims. And I would rather personally behead every single person in the world than give a smug bigot like you a single microgram more stupid self-satisfaction than you’ve already got.”

I think most people, when they think about it, probably believe that the US criminal justice system is biased. But when you feel under attack by people whom you suspect have dishonest intentions of twisting your words so they can use them to dehumanize your in-group, eventually you think “I would rather personally launch unjust prosecutions against every single minority in the world than give a smug out-group member like you a single microgram more stupid self-satisfaction than you’ve already got.”

When people regard every news story involving their side as a referendum on the truth value of their side’s ideology as a whole, it’s easy to see why they might have a hard time condemning wrongdoers on their own side, even when such criticism is deserved – or why they might struggle to give the other side credit when credit is due. This is what ideological tribalism is all about.

(Another quick disclaimer, by the way: You may be reading this at some point in the future where social issues like race aren’t as all-consuming – the big issue of your day might be a big war or financial crisis or something – but at the time of this writing, at least, they’re the main issues dominating the political discourse, so for better or worse, a lot of the quotations cited here are going to involve things like racial justice and neo-Nazi rallies and so forth. Having said that, for most of these examples you could substitute other contentious issues like capitalism vs. socialism or pro-choice vs. pro-life and they’d apply just as well.)

The irony here is that from a neutral outside standpoint, a person’s inability to notice their own double standards and openly admit when someone on their side acts wrongly reflects even worse on their side than if they simply shrugged and said, “Oh yeah, obviously that person doesn’t represent what I believe in and their actions are clearly counterproductive to the cause.” Someone who can’t bring themselves to acknowledge clear-cut cases of wrongdoing only makes themselves look guilty by association, unable to assess things reasonably or impartially.

But within the tribalist mindset, “assessing things reasonably and impartially” isn’t always the goal. It’s not a matter of trying to dispassionately weigh different ideas to see which one is most accurate, like a judge at a boxing match – it’s more like being one of the boxers. You’re not a judge in the battle of ideas, you’re fighting in it – and when you’re in a fight, the point isn’t to make the right judgment; the point is to win. As Eliezer Yudkowsky writes:

Politics is an extension of war by other means. Arguments are soldiers. Once you know which side you’re on, you must support all arguments of that side, and attack all arguments that appear to favor the enemy side; otherwise it’s like stabbing your soldiers in the back – providing aid and comfort to the enemy. People who would be level-headed about evenhandedly weighing all sides of an issue in their professional life as scientists, can suddenly turn into slogan-chanting zombies when there’s a Blue or Green position on an issue.

And Jason Pargin agrees:

We don’t ingest facts to educate ourselves; we do it so that we have ammunition to use against the opposing tribe. Therefore, it doesn’t matter if we’re oversimplifying or skewing (we’ll point out that guns kill 30,000 Americans every year but omit that two-thirds of those are suicides) because we know our side is right and winning is all that matters.

There are much better ways of thinking, of course. According to Brennan’s theory of political behavior, there are three primary approaches a person might adopt when it comes to their ideological views – the “hobbit” approach, the “hooligan” approach, or the “vulcan” approach – and of these three, the third is decidedly better than the first two. It’s basically the equivalent of putting yourself in the role of judge rather than fighter. Unfortunately, though, the majority of people never make it to the more advanced “vulcan” category; they tend to get bogged down at the “hobbit” or “hooligan” stage:

- Hobbits are mostly apathetic and ignorant about politics. They lack strong, fixed opinions about most political issues. Often they have no opinions at all. They have little, if any, social scientific knowledge; they are ignorant not just of current events but also of the social scientific theories and data needed to evaluate as well as understand these events. Hobbits have only a cursory knowledge of relevant world or national history. They prefer to go on with their daily lives without giving politics much thought. In the United States, the typical nonvoter is a hobbit.

- Hooligans are the rabid sports fans of politics. They have strong and largely fixed worldviews. They can present arguments for their beliefs, but they cannot explain alternative points of view in a way that people with other views would find satisfactory. Hooligans consume political information, although in a biased way. They tend to seek out information that confirms their preexisting political opinions, but ignore, evade, and reject out of hand evidence that contradicts or disconfirms their preexisting opinions. They may have some trust in the social sciences, but cherry-pick data and tend only to learn about research that supports their own views. They are overconfident in themselves and what they know. Their political opinions form part of their identity, and they are proud to be a member of their political team. For them, belonging to the Democrats or Republicans, Labor or Tories, or Social Democrats or Christian Democrats matters to their self-image in the same way being a Christian or Muslim matters to religious people’s self-image. They tend to despise people who disagree with them, holding that people with alternative worldviews are stupid, evil, selfish, or at best, deeply misguided. Most regular voters, active political participants, activists, registered party members, and politicians are hooligans.

- Vulcans think scientifically and rationally about politics. Their opinions are strongly grounded in social science and philosophy. They are self-aware, and only as confident as the evidence allows. Vulcans can explain contrary points of view in a way that people holding those views would find satisfactory. They are interested in politics, but at the same time, dispassionate, in part because they actively try to avoid being biased and irrational. They do not think everyone who disagrees with them is stupid, evil, or selfish.

These are ideal types or conceptual archetypes. Some people fit these descriptions better than others. No one manages to be a true vulcan; everyone is at least a little biased. Alas, many people fit the hobbit and hooligan molds quite well. Most Americans are either hobbits or hooligans, or fall somewhere in the spectrum in between.

Brennan’s description of hooligans as being like “rabid sports fans” is particularly appropriate here. One of the first studies to uncover the extent of this psychological bias actually involved gauging fans’ reactions to a football game, as Tim Harford describes:

An early indicator of how tribal our logic can be was a study conducted in 1954 by Albert Hastorf, a psychologist at Dartmouth, and Hadley Cantril, his counterpart at Princeton. Hastorf and Cantril screened footage of a game of American football between the two college teams. It had been a rough game. One quarterback had suffered a broken leg. Hastorf and Cantril asked their students to tot up the fouls and assess their severity. The Dartmouth students tended to overlook Dartmouth fouls but were quick to pick up on the sins of the Princeton players. The Princeton students had the opposite inclination. They concluded that, despite being shown the same footage, the Dartmouth and Princeton students didn’t really see the same events. Each student had his own perception, closely shaped by his tribal loyalties. The title of the research paper was “They Saw a Game”.

A more recent study revisited the same idea in the context of political tribes. The researchers showed students footage of a demonstration and spun a yarn about what it was about. Some students were told it was a protest by gay-rights protesters outside an army recruitment office against the military’s (then) policy of “don’t ask, don’t tell”. Others were told that it was an anti-abortion protest in front of an abortion clinic.

Despite looking at exactly the same footage, the experimental subjects had sharply different views of what was happening – views that were shaped by their political loyalties. Liberal students were relaxed about the behaviour of people they thought were gay-rights protesters but worried about what the pro-life protesters were doing; conservative students took the opposite view. As with “They Saw a Game”, this disagreement was not about the general principles but about specifics: did the protesters scream at bystanders? Did they block access to the building? We see what we want to see – and we reject the facts that threaten our sense of who we are.

Brennan drives home the parallel between sports fandom and political partisanship:

[Ilya] Somin has a good analogy: some people are political fans. Sports fans enjoy rooting for a team. […] Sports fans, however, also tend to evaluate information in a biased way. They tend to “play up evidence that makes their team look good and their rivals look bad, while downplaying evidence that cuts the other way.”

This is what tends to happen in politics. People tend to see themselves as being on team Democrat or team Republican, team Labor or team Conservative, and so on. They acquire information because it helps them root for their team and against their hated rivals. If the rivalry between Democratic and Republican voters sometimes seems like the rivalry between Red Sox and Yankees fans, that’s because from a psychological point of view, it very much is.

Holding unconscious double standards like this may not be such a big deal when the context is a meaningless sports game. Nobody will hold it against you if you’re a “homer” who supports your team even if it means indulging in a little self-delusion. But this kind of team spirit can have devastating consequences when it seeps into other areas of contention, such as international relations, where the stakes can be matters of life and death. To quote Orwell again:

All nationalists have the power of not seeing resemblances between similar sets of facts. A British Tory will defend self-determination in Europe and oppose it in India with no feeling of inconsistency. Actions are held to be good or bad, not on their own merits, but according to who does them, and there is almost no kind of outrage – torture, the use of hostages, forced labour, mass deportations, imprisonment without trial, forgery, assassination, the bombing of civilians – which does not change its moral colour when it is committed by ‘our’ side.

Troublingly, this pattern permeates practically every contentious issue in our society, regardless of whether the stakes are low or high. Simler and Hanson give a more recent example:

During the Bush administration, U.S. antiwar protestors – most of whom were liberal – justified their efforts in terms of the harms of war. And yet when Obama took over as president, they drastically reduced their protests, even though the wars in Iraq and Afghanistan continued unabated. All this suggested an agenda that was more partisan than pacifist.

McRaney mentions a similar case relating to economic attitudes:

Remember all that handwringing about economic insecurity in red states as political motivation to vote one way or the other? Recent analysis by behavioral economist Peter Atwater has found that almost all of that economic insecurity has evaporated since Trump became president, despite the fact that nothing has changed economically in those places where it was once a supposedly major concern. This suggests that people’s political behavior was driven by tribal psychology, like it usually is, but justified by whatever seems salient at the time, like it usually is. Once their “tribal mood,” as he put it, improved, so did their feelings about the economy.

And Zaid Jilani adds:

“Americans are dividing themselves socially on the basis of whether they call themselves liberal or conservative, independent of their actual policy differences,” [according to Lilliana Mason].

[…]

She noted, for instance, that Americans who identify most strongly as conservative, whether they hold more left-leaning or right-leaning positions on major issues, dislike liberals more than people who more weakly identify as conservatives but may hold very right-leaning issue positions.

The loose connection some voters have with policy preferences has become apparent in recent years. Donald Trump managed to flip a party from support of free trade to opposition to it by merely taking the opposite side of the issue. Democrats, meanwhile, mocked Mitt Romney in 2012 for calling Russia the greatest geopolitical adversary of the United States, but now have flipped and see Russia as exactly that. Regarding health care, the structure of the Affordable Care Act was initially devised by the conservative Heritage Foundation and implemented in Massachusetts as “Romneycare.” Once it became Obamacare, the Republican team leaders deemed it bad, and thus it became bad.

Mason believes the implications of such shallow divisions between people could make the work of democracy harder. If your goal in politics is not based around policy but just defeating your perceived enemies, what exactly are you working toward? (Is it any surprise there is an entire genre of campus activism dedicated to simply upsetting your perceived political opponents?)

“The fact that even this thing that’s supposed to be about reason and thoughtfulness and what we want the government to do, the fact that even that is largely identity-powered, that’s a problem for debate and compromise and the basic functioning of democratic government. Because even if our policy attitudes are not actually about what we want the government to do but instead about who wins, then nobody cares what actually happens in the government,” Mason said. “We just care about who’s winning in a given day. And that’s a really dangerous thing for trying to run a democratic government.”

When you’re locked into this kind of mindset, actual ideas and their consequences are really only a secondary consideration. The main thing is just to beat the other side – so regardless of what they actually say or do, your natural impulse will be to come up with reasons to condemn it; and regardless of what your side says or does, your impulse will be to come up with reasons to support it. It’s not a matter of developing broad overarching principles that can be applied across all different situations and contexts – it’s not even a matter of being consistent in your beliefs at all – it’s just a matter of taking whatever stance is most likely to help your side at the moment. Alexander writes:

An idea I keep stressing here […] is that people rarely have consistent meta-level principles. Instead, they’ll endorse the meta-level principle that supports their object-level beliefs at any given moment. The example I keep giving is how when the federal government was anti-gay, conservatives talked about the pressing need for federal intervention and liberals insisted on states’ rights; when the federal government became pro-gay, liberals talked about the pressing need for federal intervention and conservatives insisted on states’ rights.

He quotes another particularly revealing example from Tetlock and Dan Gardner:

When hospitals created cardiac care units to treat patients recovering from heart attacks, [Archie] Cochrane proposed a randomized trial to determine whether the new units delivered better results than the old treatment, which was to send the patient home for monitoring and bed rest. Physicians balked. It was obvious the cardiac care units were superior, they said, and denying patients the best care would be unethical. But Cochrane was not a man to back down…he got his trial: some patients, randomly selected, were sent to the cardiac care units while others were sent home for monitoring and bed rest. Partway through the trial, Cochrane met with a group of the cardiologists who had tried to stop his experiment. He told them that he had preliminary results. The difference in outcomes between the two treatments was not statistically significant, he emphasized, but it appeared that patients might do slightly better in the cardiac care units. “They were vociferous in their abuse: ‘Archie,’ they said, ‘we always thought you were unethical. You must stop the trial at once.’” But then Cochrane revealed he had played a little trick. He had reversed the results: home care had done slightly better than the cardiac units. “There was dead silence and I felt rather sick because they were, after all, my medical colleagues.”

This story is the key to everything. See also my political spectrum quiz and the graph that inspired it. Almost nobody has consistent meta-level principles. Almost nobody really has opinions like “this study’s methodology is good enough to believe” or “if one group has a survival advantage of size X, that necessitates stopping the study as unethical”. The cardiologists sculpted their meta-level principles around what best supported their object-level opinions – that more cardiology is better – and so generated the meta-level principles “Cochrane’s experiment is accurate” and “if one group has a slight survival advantage, that’s all we need to know before ordering the experiment stopped as unethical.” If Cochrane had (truthfully) told them that the cardiology group was doing worse, they would have generated the meta-level principles “Cochrane’s experiment is flawed” and “if one group has a slight survival advantage that means nothing and it’s just a coincidence”. In some sense this is correct from a Bayesian point of view – I interpret sonar scans of Loch Ness that find no monsters to be probably accurate, but if a sonar scan did find a monster I’d wonder if it was a hoax – but in less obvious situations it can be a disaster. Cochrane understood this and so fed them the wrong data and let them sell him the rope he needed to hang them.
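To make the Bayesian aside in that passage concrete, here is a minimal sketch of the Loch Ness reasoning. The numbers are purely hypothetical (none of them come from Alexander or from any real survey), and the function name `posterior` is just an illustrative label. The point is only to show why a strong prior can make it reasonable to trust a "no monster" scan while doubting a "monster" scan.

```python
def posterior(prior, p_report_if_true, p_report_if_false):
    """Bayes' rule: probability the hypothesis is true, given the report."""
    numerator = prior * p_report_if_true
    evidence = numerator + (1 - prior) * p_report_if_false
    return numerator / evidence


# Hypothetical inputs, chosen only for illustration.
prior_monster = 0.001        # prior belief that a monster exists
p_hit_if_monster = 0.9       # chance a scan reports a monster if one exists
p_hit_if_no_monster = 0.05   # chance of a false alarm or hoax otherwise

# Belief in the monster after a scan that claims to have found one.
after_hit = posterior(prior_monster, p_hit_if_monster, p_hit_if_no_monster)

# Belief in the monster after a scan that found nothing.
after_miss = posterior(
    prior_monster, 1 - p_hit_if_monster, 1 - p_hit_if_no_monster
)

print(f"after a 'monster' scan:    {after_hit:.4f}")
print(f"after a 'no monster' scan: {after_miss:.6f}")
```

Under these assumed numbers, even a positive scan leaves the probability of a monster under 2 percent, so suspecting a hoax or a false alarm is the sensible reading. The trouble Alexander describes arises in less obvious cases, where the prior is not nearly this lopsided but people discount unwelcome evidence as if it were.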

When it comes to things like politics and religion, much of this inconsistency may simply come from the fact that (as mentioned earlier) people don’t always give much thought to what their stance on a particular issue actually is until they’re asked about it directly. As Alexander puts it:

We don’t have a good explicit understanding of what high-level principles we use, and tend to make them up on the spot to fit object-level cases.

If you consider relatively esoteric political issues like gerrymandering or filibustering, for instance, these probably aren’t the kind of issues that most people have spent enough time thinking about to have formulated a coherent stance on. If you ask them how they feel about these issues, then, their responses will probably depend on the context. If it’s a situation where the opposing side is the one using these tactics to give themselves a political advantage, the person will probably lament that these practices are dishonorable perversions of the democratic process, tantamount to outright cheating – but if it’s their own side that’s using them, they’ll probably shrug their shoulders and say that these tactics are a perfectly legitimate part of “how the game is played,” and that exploiting them is just smart politics.

You may have even found yourself in such a situation before, where you haven’t necessarily spent a lot of time thinking about the question at hand in advance, but you don’t want to embarrass yourself by not being able to give a confident opinion on the issue, so you just make a snap judgment and default to your usual assumption that whatever your side is doing must be right and whatever the other side is doing must be wrong. This kind of ad hoc approach might serve you well in the short term – the fact that you were able to provide an immediate answer can do a fairly good job of simulating the certitude that comes with having researched the issue on your own and come to your own conclusions. But if you haven’t actually thought through all the implications in advance and made sure that the stance you’re taking is consistent with the rest of your worldview, it can come back to bite you later on when the roles are reversed. Once you’ve staked yourself to the idea that gerrymandering is a valid political strategy, or that states’ rights should take priority over federal mandates, or that deliberately targeting civilians in warfare can be a justifiable tactic, you may find yourself wishing you could take it back once your enemies are the ones gerrymandering your state or bombing your neighborhood.

No doubt, it’s important to be able to stand up for your beliefs. Fighting for your beliefs can even be downright heroic. But that’s only true if what you believe in is actually the right thing to be fighting for. If you’re fighting for the wrong thing – even if your intentions are pure – then there’s a very good chance that you’ll end up painting yourself into a corner and your arguments will lead you somewhere you don’t want to go. That’s why it’s critically important to be willing to actually sit down and figure out exactly what the right positions are on every issue you decide to get involved with – not just which positions will score the most points for your side, but which positions are actually true. Just having conviction in your beliefs isn’t enough. Just fighting hard for them isn’t enough. As Alexander writes:

Wrong people can be just as loud as right people, sometimes louder. If two doctors are debating the right diagnosis in a difficult case, and the patient’s crazy aunt hires someone to shout “IT’S LUPUS!” really loud in front of their office all day, that’s not exactly helping matters. If a group of pro-lupus protesters block the entry to the hospital and refuse to let any of the staff in until the doctors agree to diagnose lupus, that’s a disaster. All that passion does is use pressure or even threats to introduce bias into the important work of debate and analysis.

Thoughtful analysis alone isn’t enough either, of course. Once you’ve figured things out to the best of your ability, the next step is to undertake the hard work of putting those ideas into action. But the crucial point here is that you can’t put the cart before the horse. Step Two has to come after Step One. Unfortunately, too many people nowadays are like soldiers who yearn to fight for their country, but don’t have enough interest in foreign policy to first figure out whether the war they’re going to be fighting in is ethically justifiable. They’re just so captivated by the idea of being a hero for their side that they don’t spend enough time thinking about whether the hill they’ve chosen to die on is the right one. To borrow an analogy from Julia Galef, they get so fixated on trying to play this role of soldier – fighting as aggressively as possible to win a particular piece of territory – that they end up doing more harm than good (both to themselves and others) in situations where their mindset shouldn’t have been that of soldier but of scout – not trying to actively attack or defend, but simply trying to survey the territory and form the most accurate map of it possible. And the result of this mistake is that, all too often, they end up with a worldview that’s badly distorted. They end up with a map that doesn’t match the territory they’re trying to navigate, so to speak – and consequently, they end up getting themselves lost.

Even so, a lot of them choose to just keep plowing ahead regardless, because, once again, it all comes back to the point that total accuracy and impartiality aren’t always the goal. People can have other objectives, and when it comes to ideological disputes, the priority that usually takes precedence is sticking up for their chosen side. As Julie Beck writes:

Though false beliefs are held by individuals, they are in many ways a social phenomenon. [The followers of Marian Keech] held onto their belief that the spacemen were coming […] because those beliefs were tethered to a group they belonged to, a group that was deeply important to their lives and their sense of self.

[Daniel] Shaw describes the motivated reasoning that happens in these groups: “You’re in a position of defending your choices no matter what information is presented,” he says, “because if you don’t, it means that you lose your membership in this group that’s become so important to you.” Though cults are an intense example, Shaw says people act the same way with regard to their families or other groups that are important to them.

[…]

In one particularly potent example of party trumping fact, when shown photos of Trump’s inauguration and Barack Obama’s side by side, in which Obama clearly had a bigger crowd, some Trump supporters identified the bigger crowd as Trump’s. When researchers explicitly told subjects which photo was Trump’s and which was Obama’s, a smaller portion of Trump supporters falsely said Trump’s photo had more people in it.

While this may appear to be a remarkable feat of self-deception, Dan Kahan thinks it’s likely something else. It’s not that they really believed there were more people at Trump’s inauguration, but saying so was a way of showing support for Trump. “People knew what was being done here,” says Kahan, a professor of law and psychology at Yale University. “They knew that someone was just trying to show up Trump or trying to denigrate their identity.” The question behind the question was, “Whose team are you on?”

In these charged situations, people often don’t engage with information as information but as a marker of identity. Information becomes tribal.

In a New York Times article called “The Real Story About Fake News Is Partisanship,” Amanda Taub writes that sharing fake news stories on social media that denigrate the candidate you oppose “is a way to show public support for one’s partisan team – roughly the equivalent of painting your face with team colors on game day.”

This sort of information tribalism isn’t a consequence of people lacking intelligence or of an inability to comprehend evidence. Kahan has previously written that whether people “believe” in evolution or not has nothing to do with whether they understand the theory of it – saying you don’t believe in evolution is just another way of saying you’re religious. [When test subjects were incentivized to temporarily suspend their group loyalties – either through monetary compensation or just by being asked to get the correct answers as best they could – their test scores correlated with their level of education. Otherwise, their scores correlated with their level of religiosity.] Similarly, a recent Pew study found that a high level of science knowledge didn’t make Republicans any more likely to say they believed in climate change, though it did for Democrats.

Kahan himself elegantly sums it up:

When [these people are] being asked about those things, they are not telling you what they know. They are telling you who they are.

(See also Manvir Singh’s post on the distinction between “factual” beliefs and “symbolic” beliefs.)

It’s actually kind of remarkable how much our beliefs can be shaped by our group allegiances, rather than the other way around. The desire to see things in a way that harmonizes with your group’s consensus viewpoint can not only be powerful enough to override your better judgment – it can even override the most basic evidence of your own five senses. Brennan recounts one of psychology’s most famous group conformity experiments:

In Solomon Asch’s experiment, eight to ten students were shown sets of lines in which two lines were obviously the same length, and the others were obviously of different length. They were then asked to identify which lines matched. In the experiment, only one member of the group is an actual subject; the rest are collaborators. As the experiment proceeds, the collaborators begin unanimously to select the wrong line.

Asch wanted to know how the experimental subjects would react. If nine other students are all saying that lines A and B, which are obviously different, are the same length, would subjects stick to their guns or instead agree with the group? Asch found that about 25 percent of the subjects stuck to their own judgment and never conformed, about 37 percent of them caved in, coming to agree completely with the group, and the rest would sometimes conform and sometimes not. Control groups responding privately in writing were only one-fifth as likely to be in error. These results have been well replicated.

For a long time, researchers wondered whether the conformists were lying or not. Were they just pretending to agree with the group, or did they actually believe that the nonidentical lines were identical because the group said so. Researchers recently repeated a version of the experiment using functional magnetic resonance imaging. By monitoring the brain, they might be able to tell whether subjects were making an “executive decision” to conform to the group, or whether their perceptions actually changed. The results suggest that many subjects actually come to see the world differently in order to conform to the group. Peer pressure might distort their eyesight, not just their will.

These findings are frightening. People can be made to deny simple evidence right in front of their faces (or perhaps even come to actually see the world differently) just because of peer pressure. The effect should be even stronger when it comes to forming political beliefs.

And sure enough, that does seem to be the case. As Aurora Dagny writes of her experience belonging to radical political groups, members are often reluctant to say (or even think) anything that deviates even slightly from the consensus group ideology, for fear of being seen as disloyal:

Every minor heresy inches you further away from the group. […] Conversely, showing your devotion to the cause earns you respect. Groupthink becomes the modus operandi. When I was part of groups like this, everyone was on exactly the same page about a suspiciously large range of issues. Internal disagreement was rare.

When you’re involved in an ideological group like this, you may be aware at some level that you’re fudging your own internal beliefs a little bit in order to support your group’s consensus narrative – or you may not be consciously aware of it at all. But either way, you’re being motivated by the same underlying incentives; you’d rather be the steadfast loyalist who always stands up for the “good side” than the pedantic hairsplitter who always insists on being technically correct even when it undermines the movement’s credibility and annoys everyone in the group. You’d rather be too committed to a cause you know is right than not committed enough – even if it means suppressing what you actually think is true in the back of your mind on those rare occasions when it contradicts the group.