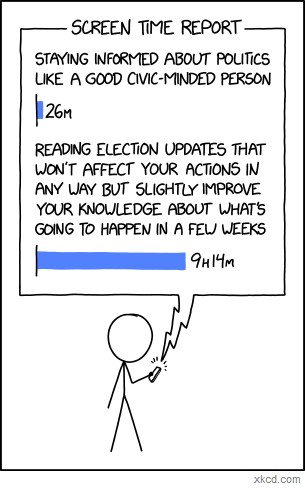

I remember hearing this Eleanor Roosevelt quotation back when I was a teenager, which went: “Small minds discuss people. Average minds discuss events. Great minds discuss ideas.” Of course, like so many other quotations attributed to Eleanor Roosevelt or Abraham Lincoln or Albert Einstein or Mahatma Gandhi, this one turned out not to be genuine; apparently the actual source was someone named Henry Thomas Buckle who I don’t think most people have ever even heard of – which is kind of ironic (or perhaps strangely fitting), that the idea is what has ultimately been remembered and not the person who said it. But at any rate, for whatever reason, it’s an idea that has stuck with me over the years. Granted, it’s not exactly the kind of thing you can take as an absolute (at least not if you want to avoid seeming pretentious); obviously, discussing people and events can be plenty valuable sometimes. Still, I think the basic point that it’s trying to convey – that it’s important to think about things on the level of the underlying concepts, not just the surface-level stuff – is a good one; so I like it as something to try and aspire to, at least, if not necessarily something to judge others by. If nothing else, I like that it so clearly draws the distinction between talking about people and events versus talking about ideas, because as soon as you put a label on it like that, you really start to notice just how little time we actually do spend talking about ideas. We talk about people and events constantly – you can turn on your TV or computer every day and spend hours seeing all the latest news stories and political scandals. And there are plenty of people who do exactly that; being an “information junkie” is the fashionable thing to do nowadays. But I think there’s a difference between information and knowledge; and having a head full of information isn’t the same thing as having a head full of ideas or expertise. 
Obsessively scrolling through Twitter and following every piece of Washington gossip won’t make you more knowledgeable in the fields of economics or foreign policy than spending that same amount of time reading good books on those subjects. Staying up to the minute on every new consumer technology gimmick won’t make you more knowledgeable in the fields of telecommunications or software development than spending the same amount of time actually learning the systems behind those technologies. Being “well-informed,” even maximally well-informed, doesn’t actually make you more knowledgeable in any real sense if the things you’re well-informed about don’t have any deep significance outside of their immediate context.

I probably don’t need to give a long list of examples here; you can see what I mean for yourself. Watch a random CNN segment from ten years ago – or hell, even ten weeks ago – and see how much of it is information that you think is still important for you to have in your head today. Chances are (barring some major historical event that you’d have heard about whether you closely followed the news or not – i.e. something that wouldn’t properly be considered part of the usual “news cycle” at all), it will be almost none of it. The facts will be outdated; the people being discussed will no longer be relevant; and a disproportionate share of the coverage will simply be dedicated to explaining ever-finer bits of minutiae – what the campaign manager is saying about the latest poll numbers out of New Hampshire, whether the press secretary might be stepping down and who their potential replacement could be, etc. – not necessarily to explaining why these minutiae should change your broader understanding of the world in any meaningful way. (Hence Nassim Taleb’s quip: “To be completely cured of newspapers, spend a year reading the previous week’s newspapers.”)

The same goes for most social media and blog discussions. As Shane Parrish points out, when most of what you see in your feed is coming from people who are manically churning out dozens of posts and Tweets per day in response to every little thing that happens, chances are you aren’t getting a whole lot of deep ideas from them; for the most part, all you’re getting are reactions – people’s most surface-level responses to whatever happens to be right in front of them at the moment.

(Case in point: As I’m writing this in mid-2018, the headline everybody’s obsessing over on CNN and Twitter is “McCain Wife Fires Back at White House Aide Who Mocked Her Husband.” We’ll see if this story is still important enough to dominate the national conversation by the time I publish this post, but something tells me it won’t be.)

I don’t entirely blame the news for being the way it is, mind you – as Parrish notes, “News is by definition something that doesn’t last.” Nor do I blame other outlets like social media and constantly-updating blogs for providing such transient content themselves; that’s what they were designed for. It’s not so much that I’m bothered by the fact that so little of this information content will matter ten years from now – it’s more the fact that we choose to dedicate such a disproportionate amount of our mental bandwidth and public discussion to it, often at the expense of deeper and more enduring forms of knowledge. I obviously don’t think that all news coverage is a waste of time, or that we should stop paying attention to it altogether (especially if it’s something major like a war or a pandemic). It should go without saying that knowing what’s happening in the world is a necessary first step to understanding it. But I do think it’s worth noticing what a remarkable proportion of news coverage – as well as online commentary, social media conversations, and all the other information feeds we engage with on a daily basis – is just “empty calories,” so to speak. It only tells us “what’s happening in the world” in the most literal, superficial sense; it doesn’t help us understand the underlying phenomena that give rise to these surface-level events, or give us any deeper insight into the broader forces at work.

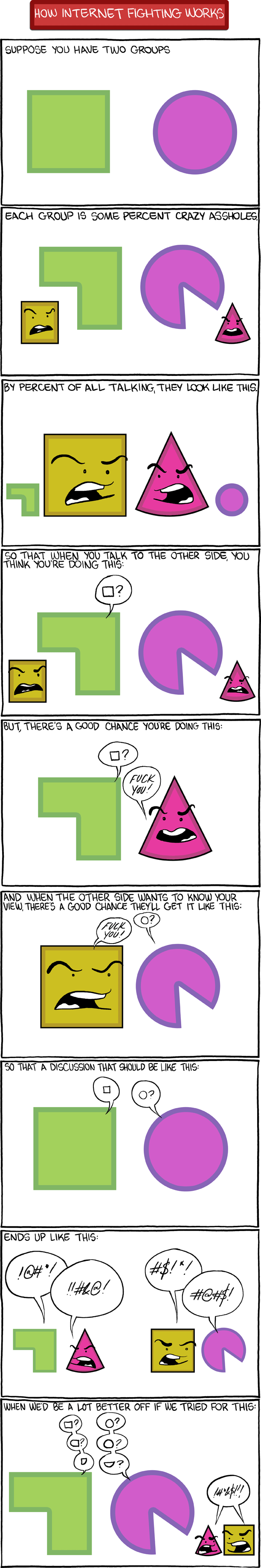

I suppose part of the reason for this lack of depth is just that the superficial things are so much more accessible, and easier to understand, and easier to bring into a conversation, than the more complex ideas underlying them. It’s easier to have a strong opinion on a politician making some dumb gaffe than it is to determine whether her fiscal policies are fundamentally sound. It’s easier to condemn a religious figure for cheating on his wife than it is to explain why his religious worldview represents a flawed basis for morality. People like being able to weigh in on the issues of the day – we all want to feel like we’re smart and informed and can make a contribution to the conversation – and consequently, whichever issues of the moment allow the greatest number of people to jump into the conversation and take a strong stance are the ones that inevitably get all the attention. But because most of us don’t have a deep store of esoteric knowledge about complex socio-political, economic, and philosophical issues, what this means is that most of the topics that dominate the social conversation are the ones that can appeal to the lowest common denominator – i.e. the shallowest and most superficial topics.

There’s actually a term for this phenomenon, it turns out: Parkinson’s Law of Triviality. What it says is that the amount of discussion generated by a particular topic will be inversely proportional to the complexity of that topic; in other words, the more complicated and difficult to understand a topic is, the less people will want to take a stance on it, but the simpler it is to understand, the more people will be willing to weigh in (and to do so vocally and passionately). This is also called “bikeshedding,” after the example given by C. Northcote Parkinson himself, in which a committee is given the task of approving plans for a 300-million-dollar nuclear power plant, and rather than spending their time debating the cost and proposed design of the reactor itself – a tremendously important issue – they pass the resolution in two minutes (because after all, nuclear reactors are expensive and hard to understand), and instead get hung up on the much easier-to-understand issue of a proposed bike shed to be built nearby, debating for hours over what type of materials should be used, whether the few hundred dollars to build the bike shed could be better appropriated elsewhere, and so forth. As the Wikipedia article summarizes it: “A reactor is so vastly expensive and complicated that an average person cannot understand it, so one assumes that those who work on it understand it. On the other hand, everyone can visualize a cheap, simple bicycle shed, so planning one can result in endless discussions because everyone involved wants to add a touch and show personal contribution.”

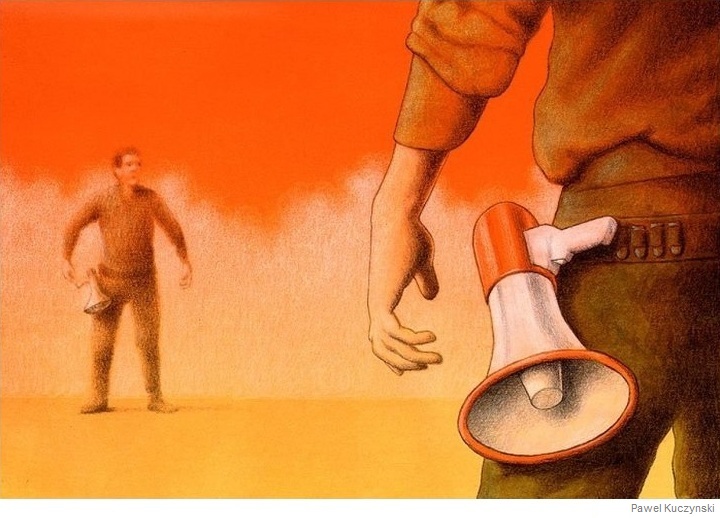

Unfortunately, when it comes to the really important topics in our society – social issues, religion, politics, etc. – I feel like a lot of the conversations we’re having are just glorified bikeshedding. We’re happy to spend hours every day talking about what the world’s major figures and groups have been up to lately, and what events have been making headlines; but like the nuclear power plant in the analogy, the fundamental ideas and philosophies motivating those people and driving those events are simply glossed over. It’s assumed that they’ve already been taken care of. Whether in news reports, blog posts, or social media discussions, it’s simply taken for granted that everyone has already figured out exactly where they stand on the ideas, worked out which philosophies they believe are the correct ones, and sorted themselves into opposing sides on every possible topic – and that therefore all that’s left to do is just to set the opposing sides loose against each other and start keeping score, in as much detail as possible, of which side is winning and which side is losing on any given day.

The thing is, though, I don’t think this assumption is actually true. I don’t think most people have actually thought through all the exact details of their own ideologies – not entirely. They may have convinced themselves that they have a firm grasp on things because they’ve read a lot of articles and watched a lot of videos on the relevant subjects (see Will Schoder’s video for a great explanation of how this can happen). But I think if you asked them to take out a pen and paper and outline the framework of their worldview from the ground up – to explain their exact stance on every single issue (along with their justifications) from first principles, without referring to some other source of authority – most people wouldn’t be able to do it; or at least, they’d have to do a lot of thinking about it first. There would be a lot of beliefs that weren’t quite fully formulated, that didn’t necessarily connect to each other or fit together in a clear way, or that were adopted on an ad hoc basis. (Kevin Simler and Robin Hanson illustrate this by pointing out that “when people are asked the same policy question a few months apart, they frequently give different answers – not because they’ve changed their minds, but because they’re making up answers on the spot, without remembering what they said last time. It is even easy to trick voters into explaining why they favor a policy, when in fact they recently said they opposed that policy.”) One thing is for sure – hardly anyone who categorizes themselves under some ideological label like “Democrat” or “Republican” or “Christian” or “Muslim” would have come up with that exact bundle of political or religious beliefs if they’d had to formulate everything themselves from scratch.

But that’s the thing; most people never go to the trouble of building their own personal worldview, on an issue by issue basis, from the ground up. They don’t feel like they have to – because they can simply select one of the pre-constructed ideologies that already exist (Christianity, Liberalism, etc.) and adopt that ideology as their own. Why consider each individual issue on its own merits and spend all that time outlining your own ideological framework, when there are pre-packaged worldviews available that have already done the work of answering every question for you? You can just peg your own worldview to one of those and say, “There, whatever that ideology says is what I believe.”

This strikes me as weird, frankly. You’d think that in a country of 325 million people, there’d be 325 million different worldviews. Given the thousands of different topics it’s possible to have an opinion on, you’d think there would be an incredibly wide range of variation, with some people believing in gun control but not creationism or financial regulation or climate change, others believing in creationism and climate change but not gun control or financial regulation, still others believing in financial regulation and climate change but not gun control or creationism, and so on. But instead, what we see is this pervasive cultural mindset that there are only a few possible combinations of views that a person can reasonably hold – only two, really, in most contexts. In the political sphere, you’re either a Republican or a Democrat. In the religious sphere, you either belong to whatever religion you were born into or you aren’t religious at all. There are only considered to be two possible ideologies for a person to sort themselves into – only two possible answers to every problem – and nothing else even computes. As Orson Scott Card writes:

We are fully polarized – if you accept one idea that sounds like it belongs to either the blue or the red, you are assumed – nay, required – to espouse the entire rest of the package, even though there is no reason why supporting the war against terrorism should imply you’re in favor of banning all abortions and against restricting the availability of firearms; no reason why being in favor of keeping government-imposed limits on the free market should imply you also are in favor of giving legal status to homosexual couples and against building nuclear reactors. These issues are not remotely related, and yet if you hold any of one group’s views, you are hated by the other group as if you believed them all; and if you hold most of one group’s views, but not all, you are treated as if you were a traitor for deviating even slightly from the party line.

(Quick disclaimer, by the way: You’ll notice me using a lot of quotations in this post. You’ll also notice that some of them come from highly reputable sources, while others, not so much. But I like to take good ideas wherever I find them, and a lot of great stuff has been written on this topic (even from writers and commentators you might not expect), so I’d rather share those insights with you directly than clumsily try to rephrase them into my own words when it’s not necessary – even if the result is that this post ends up being more of a compilation of interesting ideas I’ve gathered from other people than just a product of my own thoughts.)

Why is it so black and white? Why do worldviews tend to cluster together into only two flavors like this? Why is it that, if I told you someone’s opinion on climate change, you could probably predict their views on gun control, gay marriage, and so on, even though these topics are completely unrelated? Well, one possible answer is that although the topics might not be innately linked, they can become strongly linked due to how frequently they’re associated with each other. As David McRaney writes:

Symbols are a big part of your life thanks to the associative architecture of your brain. When I write a terrible romance novel line such as “It should have been obvious she was born in Africa, she had a beautifully long, slender neck not unlike a…” you can finish my sentence because your brain long ago formed a connection to the words long, slender, neck, and Africa. Neuroscientists call this a semantic net – every word, image, idea, and feeling is associated with everything else, like an endless tree growing in every direction at once. When you smell popcorn, you think of the cinema. When you hear a Christmas song, you think of Christmas trees.

Scott Alexander elaborates on how this idea could apply to socio-political issues:

Little flow diagram things make everything better. Let’s make a little flow diagram thing.

[Say] we have our node “Israel”, which has either good or bad karma [depending on your political opinions]. Then there’s another node close by marked “Palestine”. We would expect these two nodes to be pretty anti-correlated. When Israel has strong good karma, Palestine has strong bad karma, and vice versa.

Now suppose you listen to Noam Chomsky talk about how strongly he supports the Palestinian cause and how much he dislikes Israel. One of two things can happen:

“Wow, a great man such as Noam Chomsky supports the Palestinians! They must be very deserving of support indeed!”

or

“That idiot Chomsky supports Palestine? Well, screw him. And screw them!”

So now there is a third node, Noam Chomsky, that connects to both Israel and Palestine, and we have discovered it is positively correlated with Palestine and negatively correlated with Israel. It probably has a pretty low weight, because there are a lot of reasons to care about Israel and Palestine other than Chomsky, and a lot of reasons to care about Chomsky other than Israel and Palestine, but the connection is there.

I don’t know anything about neural nets, so maybe this system isn’t actually a neural net, but whatever it is I’m thinking of, it’s a structure where eventually the three nodes reach some kind of equilibrium. If we start with someone liking Israel and Chomsky, but not Palestine, then either that’s going to shift a little bit towards liking Palestine, or shift a little bit towards disliking Chomsky.

Now we add more nodes. Cuba seems to really support Palestine, so they get a positive connection with a little bit of weight there. And I think Noam Chomsky supports Cuba, so we’ll add a connection there as well. Cuba is socialist, and that’s one of the most salient facts about it, so there’s a heavily weighted positive connection between Cuba and socialism. Palestine kind of makes noises about socialism but I don’t think they have any particular economic policy, so let’s say very weak direct connection. And Che is heavily associated with Cuba, so you get a pretty big Che – Cuba connection, plus a strong direct Che – socialism one. And those pro-Palestinian students who threw rotten fruit at an Israeli speaker also get a little path connecting them to “Palestine” – hey, why not – so that if you support Palestine you might be willing to excuse what they did and if you oppose them you might be a little less likely to support Palestine.

Back up. This model produces crazy results, like that people who like Che are more likely to oppose Israel bombing Gaza. That’s such a weird, implausible connection that it casts doubt upon the entire…

Oh. Wait. Yeah. Okay.

I think this kind of model, in its efforts to sort itself out into a ground state, might settle on some kind of General Factor Of Politics, which would probably correspond pretty well to the left-right axis.

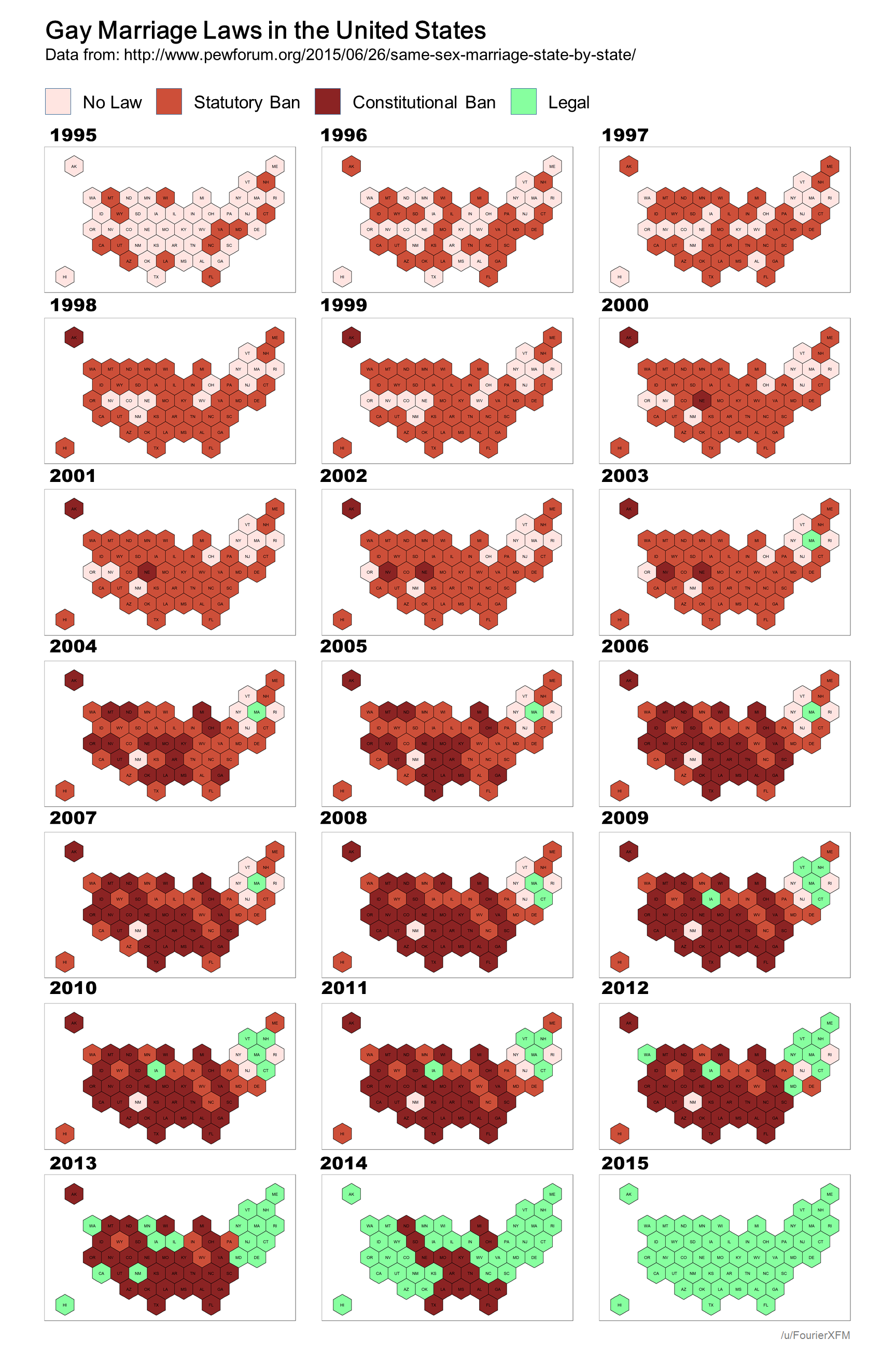

As he sums up, the “theory is that everything in politics is mutually reinforcing.” So if, say, you start off only having one or two main issues that you really care about (like abortion or gun control), you’ll naturally be more receptive toward sources that agree with you on those issues, and will end up absorbing a lot of their opinions on other issues as well (like taxes, foreign policy, gay marriage, etc.) through osmosis. Acquiring one particular belief or set of beliefs will usually mean that certain others come along for the ride. And because everyone else – including the very sources you’re taking your cues from – is doing the same thing, associations between different ideas that might start off fairly weak can accumulate more weight as like-minded people influence and are influenced by each other in turn. People within the same web of influence can reinforce each other’s conceptual connections so much that it causes a consensus cluster of beliefs to form, and the result is that anyone who adopts one of the beliefs within a particular cluster will typically be much more likely to adopt the entire rest of the cluster as well, since every source they encounter that opposes abortion and gun control will also tend to oppose higher taxes and gay marriage, and vice-versa. Ultimately, just by listening to “the people who know what they’re talking about” – i.e. the people who agree with you on your core issues – you can easily end up pigeonholing yourself neatly into the same standard left-right dichotomy as everyone else without even meaning to.
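If you’d like to see how that kind of mutual reinforcement might play out mechanically, here is a rough toy sketch of the network from the quoted example. To be clear, this is my own illustration and not Alexander’s actual model: the connection weights, the `tanh` squashing, the `inertia` parameter, and the function names `neighbors` and `settle` are all assumptions made up for the demonstration. Each node’s “karma” just drifts toward whatever its neighbors are pulling it toward until things settle down.

```python
import math

# Hypothetical connection weights, loosely following the quoted example.
# Positive: liking one node goes with liking the other. Negative: the opposite.
# All of these numbers are invented for illustration only.
WEIGHTS = {
    ("Israel", "Palestine"): -1.0,
    ("Chomsky", "Palestine"): 0.3,
    ("Chomsky", "Israel"): -0.3,
    ("Cuba", "Palestine"): 0.3,
    ("Chomsky", "Cuba"): 0.3,
    ("Cuba", "socialism"): 1.0,
    ("Palestine", "socialism"): 0.1,
    ("Che", "Cuba"): 0.8,
    ("Che", "socialism"): 0.8,
}


def neighbors(node):
    """Yield (other_node, weight) for every connection touching `node`."""
    for (a, b), weight in WEIGHTS.items():
        if a == node:
            yield b, weight
        elif b == node:
            yield a, weight


def settle(initial, pinned=(), rounds=200, inertia=0.5):
    """Repeatedly nudge each node's karma toward what its neighbors imply.

    `initial` maps node names to starting karma in [-1, 1]; unlisted nodes
    start neutral at 0. Nodes in `pinned` never change (a fixed core belief).
    """
    nodes = sorted({name for pair in WEIGHTS for name in pair})
    karma = {name: initial.get(name, 0.0) for name in nodes}
    for _ in range(rounds):
        for name in nodes:
            if name in pinned:
                continue
            # Weighted sum of neighbors' karma, squashed into (-1, 1).
            pull = sum(weight * karma[other] for other, weight in neighbors(name))
            karma[name] = inertia * karma[name] + (1 - inertia) * math.tanh(pull)
    return karma


if __name__ == "__main__":
    # Someone whose only starting opinion is that they really like Che.
    result = settle({"Che": 1.0}, pinned={"Che"})
    for name, value in result.items():
        print(f"{name:10s} {value:+.2f}")
```

Start with a person who has exactly one opinion (they like Che) and no view on anything else, and under these made-up weights the network drifts toward a warm view of Cuba, socialism, Palestine, and Chomsky, and a cool view of Israel. The exact numbers mean nothing, since I invented the weights; the point is only that a single firmly held node can be enough to tip all the others in a consistent direction.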

In a way, this relates back to Parkinson’s example of the nuclear reactor, in which everyone quickly comes to an agreement because they all assume someone more qualified has already done the necessary research to justify it. If you encounter a new ideological issue that you haven’t considered very deeply for yourself just yet, or haven’t done much research on, but you still want to have an opinion and appear knowledgeable (and who doesn’t want to appear knowledgeable?), it’s easier to simply slot yourself into whichever one of the pre-constructed ideologies that already exist (and have already figured out their answer to each of the hundreds of issues in question) you more closely identify with, and then adopt that faction’s view as your own, than it is to start from scratch and try to build your own conclusions from the ground up – doing your homework on each of the issues yourself, one by one, and cobbling together your own worldview that may or may not fit perfectly into the black-and-white paradigm used by everyone else. Instead of becoming knowledgeable the hard way, you can be knowledgeable by proxy, claiming your side’s collective expertise as your own. Instead of using the knowledge of trusted commentators to help you form your beliefs and fill in gaps in your worldview (a good and reasonable thing to do), you can use it as a means to skip that step entirely and pick a worldview that’s already been formed.

Like a student taking a pop quiz, it’s easier to answer the question as if it were multiple-choice than to answer it in a free-response essay format. With a multiple-choice question, you don’t have to show your work or explain your reasoning; all you have to do is select the right answer, and your competence is demonstrated. In terms of ideology, likewise, it’s easier simply to self-identify as a Republican or a Democrat or a Christian or a Muslim or whatever than to explain to anyone who asks that although you agree with one faction on issues A, B, and C, you like what this other faction has to say about issues D, E, and F, and on certain issues like G, H, and I you don’t really identify with any of the traditional factions and have formed your own particular views. The latter approach is still perfectly possible, obviously – there are a lot of smart people with independently-constructed worldviews that don’t fit neatly into the traditional dichotomies – but the former approach is undoubtedly the more convenient one.

This kind of ideological shortcut-taking – where everyone lumps themselves (and each other) into one of two groups rather than operating on a piecemeal basis – isn’t always a bad thing. Jason Brennan grants, for instance, that it can be useful for quickly identifying which political candidates’ views are closest to your own:

In modern democracies, most candidates join political parties. Political parties run on general ideologies and policy platforms. Individual candidates may have their own idiosyncrasies and preferences, but they have a strong tendency to fall in line and do what the party wants.

Many political scientists think party systems reduce the epistemic burdens of voting. Voters can get by reasonably well by treating all Republicans and Democrats as two homogeneous groups. In an election, instead of learning what this particular Republican and Democrat want to do, I can treat the candidates as standard Republicans and Democrats, and vote accordingly. This kind of statistical discrimination leads to mistakes on an individual basis, but on a macro level, with 535 members of Congress, these individual mistakes are likely to cancel out. The party system thus provides voters with a “cognitive shortcut”; it allows them to act as if they were reasonably well informed.

There’s much to be said for this line of reasoning. So long as voters tend to have reasonably accurate stereotypes of what policies the two major political parties tend to prefer, then voters as a whole can perform well by relying on such stereotypes.

On a more basic level, there’s also the simple fact that being part of a group is generally more fun and socially rewarding than being an unaffiliated loner without any allies or allegiances. There is power in numbers, and it can just feel so satisfying to belong to a group of like-minded people who will agree with you on all the important issues and praise you for agreeing with them in turn. People love being part of a team; so whenever there’s a contentious issue floating around, they will always want to take a side, even if there are only two sides to choose from. For a lot of people, this is probably the biggest reason of all to want to slot themselves into pre-constructed ideologies rather than constructing their own – because the ideas themselves aren’t actually their main consideration.

The problem with all this, though, is that once you take a side and plant your flag firmly in that camp, you develop a sort of “brand loyalty,” and your chosen affiliation starts to affect what you believe, instead of the other way around. As Carol Tavris and Elliot Aronson put it:

Once people form [an ideological] identity, usually in young adulthood, the identity does their thinking for them. That is, most people do not choose [an ideology] because it reflects their views; rather, once they choose [an ideology], its policies [or doctrines] become their views.

I could give countless examples of this. I remember hearing one case, for instance, of a Jewish man who casually mentioned one day that he didn’t believe in Hell anymore, and when asked why, replied that he had found out that Judaism doesn’t include a belief in Hell, and since he was Jewish, that meant it wasn’t a part of what he believed anymore. Balioc gives a similar common example of “a self-identified libertarian asking ‘can a libertarian believe X?’ rather than just figuring out whether X is a reasonable thing to believe.” Steven Pinker has mentioned his encounters with people who held very strong opinions either for or against the Trans-Pacific Partnership (a trade agreement signed in 2016), but when asked to give their reasoning, couldn’t actually explain what the TPP even was – they just knew that their social media feeds were adamant about supporting it or opposing it, and so they jumped on the bandwagon as well. And Tavris and Aronson themselves give some even more dramatic political examples:

[Social psychologist Lee Ross has gained valuable insights] from his laboratory experiments and from his efforts to reduce the bitter conflict between Israelis and Palestinians. Even when each side recognizes that the other side perceives the issues differently, each thinks that the other side is biased while they themselves are objective, and that their own perceptions of reality should provide the basis for settlement. In one experiment, Ross took peace proposals created by Israeli negotiators, labeled them as Palestinian proposals, and asked Israeli citizens to judge them. “The Israelis liked the Palestinian proposal attributed to Israel more than they liked the Israeli proposal attributed to the Palestinians,” he says. “If your own proposal isn’t going to be attractive to you when it comes from the other side, what chance is there that the other side’s proposal is going to be attractive when it actually comes from the other side?”

Closer to home, social psychologist Geoffrey Cohen found that Democrats will endorse an extremely restrictive welfare proposal, one usually associated with Republicans, if they think it has been proposed by the Democratic Party, and Republicans will support a generous welfare policy if they think it comes from the Republican Party. Label the same proposal as coming from the other side, and you might as well be asking people if they will favor a policy proposed by Osama bin Laden. No one in Cohen’s study was aware of their blind spot – that they were being influenced by their party’s position. Instead, they all claimed that their beliefs followed logically from their own careful study of the policy at hand, guided by their general philosophy of government.

This is the central flaw of the whole “taking sides” approach. Once you’ve decided that the question “Which ideology is right?” must be a multiple-choice question and not a free-response one, it means that the moment you pick an answer to that question and take a side, your journey of inquiry is done. You no longer feel the need to examine any new facts for yourself, because you already know everything you need to know – you’ve already determined which side is the one that’s correct about everything – so you can reject any contradictory arguments in advance without even hearing them. If any new fact does happen to creep into your awareness which contradicts what your side says is true, well then, all that means is that you have to rationalize the contradiction away somehow – either by coming up with some explanation for why the new fact is actually false or misleading, or by concocting some elaborate interpretation of your side’s ideology that demonstrates how your side has actually been properly accounting for this fact all along, or by some other equally convoluted method. You may not know exactly what the explanation is for why your side is right on some particular given issue, but you know that in the overall scheme of things, your side is the right one, so therefore some explanation must exist, even if you don’t know the particulars off the top of your head. What you can’t do, though, is just admit that in some cases the facts do appear to point in a direction other than your chosen side – much less admit that you have no problem accepting this because you have your own personal set of beliefs that don’t always map perfectly onto one side in the first place. Once you’ve picked a side, it becomes your answer to every problem. It becomes part of your identity – almost like your nationality or something – and it therefore has to be protected from any new information that might threaten it.

A lot of smart people have noticed what a powerful (and detrimental) role this particular brand of mental gymnastics can have in our ideological interactions – from Bill Clinton…

The problem with any ideology is that it gives the answer before you look at the evidence. So you have to mold the evidence to get the answer that you’ve already decided you’ve got to have.

…to Arthur Conan Doyle, via his character Sherlock Holmes:

It is a capital mistake to theorize before one has data. Insensibly one begins to twist facts to suit theories instead of theories to suit facts.

Even George Orwell (perhaps unsurprisingly) weighed in on this phenomenon in his novel 1984, describing how people rid themselves of any thoughts or ideas that contradict their party’s ideology:

Crimestop means the faculty of stopping short, as though by instinct, at the threshold of any dangerous thought. It includes the power of not grasping analogies, of failing to perceive logical errors, of misunderstanding the simplest arguments […] and of being bored or repelled by any train of thought which is capable of leading in a heretical direction. Crimestop, in short, means protective stupidity.

To put it simply, then, there are basically two different approaches you might take when encountering new information. You can either examine all the facts dispassionately and then accept whichever truth they point to – even if it contradicts your preferred conclusion – or you can accept only those facts that are compatible with what you already believe, and rationalize the rest away so that you can maintain your already-held beliefs without having to change any of them. In the latter case, you aren’t actually undergoing an honest search for truth – you’re just searching for one very particular truth. You already have in mind the conclusion that you’re aiming for, and you’re determined to arrive at that conclusion even if it means ignoring or dismissing unwelcome facts.

The official term for this is “motivated reasoning.” Jonathan Haidt delves into some of the theory behind this behavior:

The social psychologist Tom Gilovich studies the cognitive mechanisms of strange beliefs. His simple formulation is that when we want to believe something, we ask ourselves, “Can I believe it?” Then (as [Deanna] Kuhn and [David] Perkins found), we search for supporting evidence, and if we find even a single piece of pseudo-evidence, we can stop thinking. We now have permission to believe. We have a justification, in case anyone asks.

In contrast, when we don’t want to believe something, we ask ourselves, “Must I believe it?” Then we search for contrary evidence, and if we find a single reason to doubt the claim, we can dismiss it.

You only need one key to unlock the handcuffs of must.

Psychologists now have file cabinets full of findings on “motivated reasoning,” showing the many tricks people use to reach the conclusions they want to reach. When subjects are told that an intelligence test gave them a low score, they choose to read articles criticizing (rather than supporting) the validity of IQ tests. When people read a (fictitious) scientific study that reports a link between caffeine consumption and breast cancer, women who are heavy coffee drinkers find more flaws in the study than do men and less caffeinated women. Pete Ditto, at the University of California at Irvine, asked subjects to lick a strip of paper to determine whether they have a serious enzyme deficiency. He found that people wait longer for the paper to change color (which it never does) when a color change is desirable than when it indicates a deficiency, and those who get the undesirable prognosis find more reasons why the test might not be accurate (for example, “My mouth was unusually dry today”).

The difference between a mind asking “Must I believe it?” versus “Can I believe it?” is so profound that it even influences visual perception. Subjects who thought that they’d get something good if a computer flashed up a letter rather than a number were more likely to see the ambiguous figure [below] as the letter B, rather than as the number 13.

If people can literally see what they want to see – given a bit of ambiguity – is it any wonder that scientific studies often fail to persuade the general public? Scientists are really good at finding flaws in studies that contradict their own views, but it sometimes happens that evidence accumulates across many studies to the point where scientists must change their minds. I’ve seen this happen in my colleagues (and myself) many times, and it’s part of the accountability system of science – you’d look foolish clinging to discredited theories. But for nonscientists, there is no such thing as a study you must believe. It’s always possible to question the methods, find an alternative interpretation of the data, or, if all else fails, question the honesty or ideology of the researchers.

And now that we all have access to search engines on our cell phones, we can call up a team of supportive scientists for almost any conclusion twenty-four hours a day. Whatever you want to believe about the causes of global warming or whether a fetus can feel pain, just Google your belief. You’ll find partisan websites summarizing and sometimes distorting relevant scientific studies. Science is a smorgasbord, and Google will guide you to the study that’s right for you.

[…]

People are quite good at challenging statements made by other people, but if it’s your belief, then it’s your possession – your child, almost – and you want to protect it, not challenge it and risk losing it.

Chris Mooney adds:

A large number of psychological studies have shown that people respond to scientific or technical evidence in ways that justify their preexisting beliefs. In a classic 1979 experiment, pro- and anti-death penalty advocates were exposed to descriptions of two fake scientific studies: one supporting and one undermining the notion that capital punishment deters violent crime and, in particular, murder. They were also shown detailed methodological critiques of the fake studies – and in a scientific sense, neither study was stronger than the other. Yet in each case, advocates more heavily criticized the study whose conclusions disagreed with their own, while describing the study that was more ideologically congenial as more “convincing.”

Since then, similar results have been found for how people respond to “evidence” about affirmative action, gun control, the accuracy of gay stereotypes, and much else. Even when study subjects are explicitly instructed to be unbiased and even-handed about the evidence, they often fail.

[…]

[In ideologically loaded cases such as these,] when we think we’re reasoning, we may instead be rationalizing. Or to use an analogy offered by University of Virginia psychologist Jonathan Haidt: We may think we’re being scientists, but we’re actually being lawyers. Our “reasoning” is a means to a predetermined end – winning our “case” – and is shot through with biases. They include “confirmation bias,” in which we give greater heed to evidence and arguments that bolster our beliefs, and “disconfirmation bias,” in which we expend disproportionate energy trying to debunk or refute views and arguments that we find uncongenial.

That’s a lot of jargon, but we all understand these mechanisms when it comes to interpersonal relationships. If I don’t want to believe that my spouse is being unfaithful, or that my child is a bully, I can go to great lengths to explain away behavior that seems obvious to everybody else – everybody who isn’t too emotionally invested to accept it, anyway. That’s not to suggest that we aren’t also motivated to perceive the world accurately – we are. Or that we never change our minds – we do. It’s just that we have other important goals besides accuracy – including identity affirmation and protecting one’s sense of self – and often those make us highly resistant to changing our beliefs when the facts say we should.

As Haidt points out (citing the research of Philip Tetlock), it actually is possible to overcome these biases under certain specific circumstances, in which you expect to be held accountable for the accuracy of your beliefs in a very particular way – but as he also mentions, these circumstances hardly ever arise in the real world:

Tetlock found two very different kinds of careful reasoning. Exploratory thought is an “evenhanded consideration of alternative points of view.” Confirmatory thought is “a one-sided attempt to rationalize a particular point of view.” Accountability increases exploratory thought only when three conditions apply: (1) decision makers learn before forming any opinion that they will be accountable to an audience, (2) the audience’s views are unknown, and (3) they believe the audience is well informed and interested in accuracy.

When all three conditions apply, people do their darnedest to figure out the truth, because that’s what the audience wants to hear. But the rest of the time – which is almost all of the time – accountability pressures simply increase confirmatory thought. People are trying harder to look right than to be right.

It’s also worth noting that the greater your commitment is to a certain belief (and in high-stakes realms like politics, religion, and morality, people’s level of commitment tends to be extreme), the harder it is to give up your attachment to it. The more central an idea is to your thinking, the less likely it is that you’ll be willing to consider replacing it, even when the evidence against it becomes overwhelming. It may be no big deal to occasionally make slight adjustments to one or two minor beliefs on the fringes of your worldview, but if a belief underpins the entire foundation of your understanding, it’s simply unthinkable to ever change it – because that would mean tearing down the whole superstructure (perhaps one that you’ve spent years constructing) and starting all over again from ground zero. As Tavris and Aronson write:

America is a mistake-phobic culture, one that links mistakes with incompetence and stupidity. So even when people are aware of having made a mistake, they are often reluctant to admit it, even to themselves, because they take it as evidence that they are a blithering idiot.

[…]

Most Americans know they are supposed to say “we learn from our mistakes,” but deep down, they don’t believe it for a minute. They think that mistakes mean you are stupid.

[…]

One lamentable consequence of the belief that mistakes equal stupidity is that when people do make a mistake, they don’t learn from it. They throw good money after bad.

They provide a particularly striking historical example:

Half a century ago, a young social psychologist named Leon Festinger and two associates infiltrated a group of people who believed the world would end on December 21. They wanted to know what would happen to the group when (they hoped!) the prophecy failed. The group’s leader, whom the researchers called Marian Keech, promised that the faithful would be picked up by a flying saucer and elevated to safety at midnight on December 20. Many of her followers quit their jobs, gave away their homes, and dispersed their savings, waiting for the end. Who needs money in outer space? Others waited in fear or resignation in their homes. (Mrs. Keech’s own husband, a nonbeliever, went to bed early and slept soundly through the night as his wife and her followers prayed in the living room.) Festinger made his own prediction: The believers who had not made a strong commitment to the prophecy – who awaited the end of the world by themselves at home, hoping they weren’t going to die at midnight – would quietly lose their faith in Mrs. Keech. But those who had given away their possessions and were waiting with the others for the spaceship would increase their belief in her mystical abilities. In fact, they would now do everything they could to get others to join them.

At midnight, with no sign of a spaceship in the yard, the group felt a little nervous. By 2 a.m., they were getting seriously worried. At 4:45 a.m., Mrs. Keech had a new vision: The world had been spared, she said, because of the impressive faith of her little band. “And mighty is the word of God,” she told her followers, “and by his word have ye been saved – for from the mouth of death have ye been delivered and at no time has there been such a force loosed upon the Earth. Not since the beginning of time upon this Earth has there been such a force of Good and light as now floods this room.”

The group’s mood shifted from despair to exhilaration. Many of the group’s members, who had not felt the need to proselytize before December 21, began calling the press to report the miracle, and soon they were out on the streets, buttonholing passersby, trying to convert them. Mrs. Keech’s prediction had failed, but not Leon Festinger’s.

You might be tempted to laugh at the gullibility of these seemingly ridiculous cultists, but we all share the same psychological propensity to stack the deck in favor of our preferred conclusions when we’re heavily invested in them; even the most intelligent and highly trained professionals in their fields are susceptible to this. In fact, even when the stakes are as high as they could possibly be, even when the lives of millions of people hang in the balance, it’s possible to fall prey to this tendency to “put our thumbs on the scale as we weigh the evidence,” as Simler puts it – to take the principle of “the benefit of the doubt” to its logical extreme in favor of our preferred conclusions. Gary Klein cites the example of the pivotal World War II battle of Midway:

[The Japanese] had reason for their overconfidence. They had smashed the Americans and British throughout the Pacific – at Pearl Harbor, in the Philippines, and in Southeast Asia. Now they prepared to use an attack on Midway Island to wipe out the remaining few aircraft carriers the Americans had left in the Pacific. The Japanese brought their primary strike force, the force that had struck at Pearl Harbor, with four of their finest aircraft carriers.

The battle didn’t go as planned. The Americans had broken enough of the Japanese code to know about the Japanese attack and got their own aircraft carriers into position before the Japanese arrived. The ambush worked. In a five-minute period, the United States sank three of the Japanese aircraft carriers. By the end of the day, it sank the fourth one as well. At the beginning of June 4, the Japanese Navy ruled the Pacific. By that evening, its domination was over, and Japan spent the rest of the war defending against an inevitable defeat.

What interests us here is the war game the Japanese conducted May 1–5 to prepare for Midway. The top naval leaders gathered to play out the plan and see if there were any weaknesses. Yamamoto himself was present. However, the Japanese brushed aside any hint of a weakness. At one point the officer playing the role of the American commander sent his make-believe forces to Midway ahead of the battle to spring an ambush not unlike what actually happened. The admiral refereeing the war game refused to allow it. He argued that it was very unlikely that the Americans would make such an aggressive move. The officer playing the American commander tearfully protested, not only because he wanted to do well in the war game, but also, and more importantly, because he was afraid his colleagues were taking the Americans too lightly. His protests were overruled. With Yamamoto looking on approvingly, the Japanese played the war game to the end and concluded that the plan didn’t have any flaws.

How could the Japanese leaders have been so willfully blind to the facts that were staring them right in the face, especially when the stakes were so high? The simple answer is that, precisely because the stakes were so high, the Japanese leaders became too psychologically committed to the stances they’d already taken – to the point that it was less psychologically painful to continue being wrong, and to just have everyone think they were competent (including themselves), than it was to be right by admitting their error and going back to the drawing board. If the stakes had been significantly lower – for instance, if they’d just been playing a casual game of Battleship over drinks with friends – it would have been no big deal to accept some good outside advice on improving their strategy. But because they’d put so much work into their battle plans, and because they were staking so much of their prestige as military commanders on the success of these plans, it was unthinkable to just scrap them and start all over from square one. Tavris and Aronson give another World War II-related analogy illustrating this mindset:

In that splendid film The Bridge on the River Kwai, Alec Guinness and his soldiers, prisoners of the Japanese in World War II, build a railway bridge that will aid the enemy’s war effort. Guinness agrees to this demand by his captors as a way of building unity and restoring morale among his men, but once he builds it, it becomes his – a source of pride and satisfaction. When, at the end of the film, Guinness finds the wires revealing that the bridge has been mined and realizes that Allied commandoes are planning to blow it up, his first reaction is, in effect: “You can’t! It’s my bridge. How dare you destroy it!” To the horror of the watching commandoes, he tries to cut the wires to protect the bridge. Only at the very last moment does Guinness cry, “What have I done?,” realizing that he was about to sabotage his own side’s goal of victory to preserve his magnificent creation.

We’ve all had moments like this, where we become so emotionally attached to our own ideas – so invested in winning the argument and preserving our beliefs – that we lose sight of our more important goal, which should be making sure we’re on the right side of the argument and holding the right beliefs in the first place. As Sister Y puts it:

A lot of us get stuck in traps. We become aware of a powerful insight (atheism, feminism, conspiracy theories) and begin to think it explains all of reality. We commit to our hard-won but limited set of insights until they calcify, protecting us from the trauma (and the pleasure) of further insights.

Sure, we can admit when we’re wrong about small, trivial things – no problem – but when it comes to the big things, we don’t want to let go of anything we’ve worked so hard on and made such an integral part of our identity. Not only is it deeply demoralizing to have to go back to square one; it’s embarrassing. Admitting you were wrong about something means losing face in a big way – especially if it’s something you were really vocal and adamant about previously.

Maybe if we had a different approach toward ideas and beliefs – one in which people didn’t have to feel so self-conscious about being wrong and could freely explore different possibilities in a genuinely open-ended search for truth – we wouldn’t keep running into these problems. But the whole “taking sides” dynamic doesn’t permit such an approach. Not only does it compel you to do all these mental gymnastics massaging the facts to fit into your side’s narrative; it also forces you into a mindset that is, by definition, inherently adversarial and hostile toward any outside challenges. You can’t have a “side,” after all, unless there’s an opposing side – and this means that beliefs and ideas aren’t just a matter of freely exploring different possibilities in an unselfconscious search for truth, they’re a matter of winning and losing. If you happen to be wrong about something, that doesn’t just mean you can correct course and continue onward, feeling grateful for the opportunity to have upgraded to a more accurate set of beliefs; it means you’ve embarrassed yourself and lost face.

There’s a good bit of psychological research to suggest that when you openly challenge a person’s beliefs in a direct confrontation, it doesn’t necessarily make them more open to opposing ideas (as it might if they learned about those ideas in a less adversarial context); on the contrary, what can often happen is that direct confrontation causes them to become more defensive, digging in their heels and entrenching themselves even further in their positions. Again, this is probably something you’ve experienced for yourself – what starts as a friendly disagreement (Person A: “I don’t think that’s really true”) gradually begins to escalate (Person B: “Really? It seems pretty clear to me that it is true”); positions begin to harden (Person A: “Are you kidding? It’s obviously not true; you’d have to be a moron not to see that”), until finally you reach a point where both participants, having entered the conversation with a fairly modest level of confidence in their views, are now swearing that their side is right with absolute 100% certainty. It isn’t so much that they really are 100% certain in their views (if you asked them to bet their life savings on it, for instance, they’d probably start backpedaling pretty quickly unless they’d gotten themselves so steamed up that they were beyond all reason); it’s more that their avowed “certainty” is serving as a proxy for something else, like how important the belief is to them, or how much they want to be perceived as being committed to that belief. A lot of times, it’s just a way of making their argument seem more credible; if someone really is that confident in their beliefs, then those beliefs must be true, right?

Obviously, we know that that’s not the case. There are millions of Christians who will swear with 100% certainty that Jesus has visited them personally and revealed to them that Christianity is the one and only true religion; and there are millions of Muslims and Hindus and other believers who will swear the same thing with 100% certainty about their own deities; but they can’t all be right. Similarly, there are millions of liberals who claim to be 100% certain that their preferred policies are superior, while millions of conservatives claim to be 100% certain that their preferred policies are superior. Again, just because someone claims a high degree of certainty doesn’t prove that their ideas are more credible; all it proves is that people are capable of convincing themselves that they’re certain of things they don’t actually have any way of being certain of. (As Michel de Montaigne said, “Nothing is so firmly believed as that which we least know.”) Nobody wants to admit this out loud, though – especially when they’re talking to someone outside of their religion or political party – because they feel that admitting to anything less than absolute certainty in their beliefs would undermine the perceived credibility of those beliefs. If you’re trying to win an argument, the reasoning goes, then hedging your positions and conceding that there are a lot of unknown gray areas defeats the purpose. You need to know for a fact that your side is right; anything less is self-sabotage.

According to this mentality, admitting when someone on the opposing side makes a decent point (i.e. “making a concession”) is like giving up points in a game – it’s like losing – and therefore you can never do it willingly. It doesn’t matter what’s actually true; what matters is that you deny your opponents the satisfaction of having scored points against you. This isn’t always something that you even need to be consciously aware of – i.e. realizing when your opponent is making a good point and just cynically refusing to admit it for strategic purposes – a lot of times it can take a more subconscious form, where you don’t allow yourself to even notice when they’re making a good point. As in that Orwell quotation describing Crimestop, it’s not so much that you consciously think “Hmm, yes, I notice that my opponent’s argument is superior to my own, therefore I won’t acknowledge it” – it’s more of a subtle feeling of frustration that your opponent’s argument isn’t instantly crumbling under the force of your effortless refutations like it should, accompanied by a nagging sense of annoyance at not knowing exactly how to make it so. Again, the more you perceive the issue at hand to be central to your side’s ideology, the stronger this behavior is.

And this tendency to never want to cede any ground to your enemy doesn’t just skew the way you handle opposing ideas in an argument; it can also affect your perception of every single person and event that might have some connection to your (or your opponent’s) ideology. If you see a news story about a prominent figure using some horrible derogatory slur, for instance, or a crazed criminal attacking people in the streets, your response will probably be roughly the same as everyone else’s if the perpetrator is ideologically neutral. However, the moment the story takes on an ideological shade – if new information emerges that the wrongdoer subscribes to a particular religious or political belief system, for example – then you’ll immediately be tempted to start rationalizing in one direction or another. If they’re part of the same team you are, then you’ll start thinking up excuses for why what they said wasn’t actually that bad, or why their actions weren’t motivated by their beliefs but by some other factor like mental illness. On the other hand, if they’re part of the opposing team, then you’ll reject any such excuses out of hand and be more inclined to exaggerate the severity of the wrongdoing, claiming that it’s the most reprehensible atrocity you’ve ever seen and reflects the moral bankruptcy of the ideology behind it.

Alexander provides some insight on how ideology can skew our perception of such events:

I have found a pattern: when people consider an idea in isolation, they tend to make good decisions. When they consider an idea a symbol of a vast overarching narrative, they tend to make very bad decisions.

Let me offer [an] example.

A white man is accused of a violent attack on a black woman. In isolation, well, either he did it or he didn’t, and without any more facts there’s no use discussing it.

But what if this accusation is viewed as a symbol? What if you have been saying for years that racism and sexism are endemic in this country, and that whites and males are constantly abusing blacks and females, and they’re always getting away with it because the police are part of a good ole’ boys network who protect their fellow privileged whites?

Well, right now, you’ll probably still ask for the evidence. But if I gave you some evidence, and it was complicated, you’d probably interpret it in favor of the white man’s guilt. The heart has its reasons that reasons know not of, and most of them suck. We unconsciously make decisions based on our own self-interest and what makes us angry or happy, and then later we find reasons why the evidence supports them. If I have a strong interest in a narrative of racism, then I will interpret the evidence to support accusations of racism.

Lest I sound like I’m picking on the politically correct, I’ve seen scores of people with the opposite narrative. You know, political correctness has grown rampant in our society, women and minorities have been elevated to a status where they can do no wrong, the liberal intelligentsia always tries to pin everything on the white male. When the person with this narrative hears the evidence in this case, they may be more likely to believe the white man – especially if they’d just listened to their aforementioned counterpart give their speech about how this proves the racist and sexist tendencies of white men.

Yes, I’m thinking of the Duke lacrosse case.

The problem here is that there are two different questions here: whether this particular white male attacked this particular black woman, and whether our society is racist or “reverse racist”. The first question definitely has one correct answer which while difficult to ascertain is philosophically simple, whereas the second question is meaningless, in the same technical sense that “Islam is a religion of peace” is meaningless. People are conflating these two questions, and acting as if the answer to the second determines the answer to the first.

Which is all nice and well unless you’re one of the people involved in the case, in which case you really don’t care about which races are or are not privileged in our society as much as you care about not being thrown in jail for a crime you didn’t commit, or about having your attacker brought to justice.

I think this is the driving force behind a lot of politics. Let’s say we are considering a law mandating businesses to lower their pollution levels. So far as I understand economics, the best decision-making strategy is to estimate how much pollution is costing the population, how much cutting pollution would cost business, and if there’s a net profit, pass the law. Of course it’s more complicated, but this seems like a reasonable start.

What actually happens? One side hears the word “pollution” and starts thinking of hundreds of times when beautiful pristine forests were cut down in the name of corporate greed. This links into other narratives about corporate greed, like how corporations are oppressing their workers in sweatshops in third world countries, and since corporate executives are usually white and third world workers usually not, let’s add racism into the mix. So this turns into one particular battle in the war between All That Is Right And Good and Corporate Greed That Destroys Rainforests And Oppresses Workers And Is Probably Racist.

The other side hears the words “law mandating businesses” and starts thinking of a long history of governments choking off profitable industry to satisfy the needs of the moment and their re-election campaign. The demonization of private industry and subsequent attempt to turn to the government for relief is a hallmark of communism, which despite the liberal intelligentsia’s love of it killed sixty million people. Now this is a battle in the war between All That Is Right And Good and an unholy combination of Naive Populism and Soviet Russia.

[…]

Now, if the economists do their calculations and report that actually the law would cause more harm than good, do you think the warriors against Corporate Greed That Destroys Rainforests And Oppresses Workers And Is Probably Racist are going to say “Oh, okay then” and stand down? In the face of Corporate Greed That Destroys Rainforests And Oppresses Workers And Is Probably Racist?!?

[…]

I call this mistake “missing the trees for the forest”. If you have a specific case you need to judge, judge it separately on its own merits, not the merits of what agendas it promotes or how it fits with emotionally charged narratives.

He gives one more example:

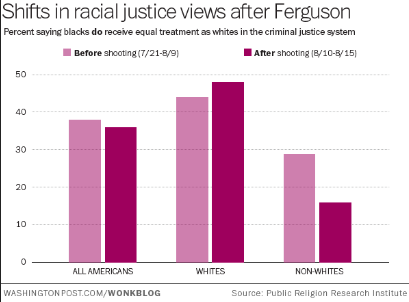

You can see that after the Ferguson shooting [of 2014], the average American became a little less likely to believe that blacks were treated equally in the criminal justice system. This makes sense, since the Ferguson shooting was a much-publicized example of the criminal justice system treating a black person unfairly.

But when you break the results down by race, a different picture emerges. White people were actually a little more likely to believe the justice system was fair after the shooting. Why? I mean, if there was no change, you could chalk it up to white people believing the police’s story that the officer involved felt threatened and made a split-second bad decision that had nothing to do with race. That could explain no change just fine. But being more convinced that justice is color-blind? What could explain that?

My guess – before Ferguson, at least a few people interpreted this as an honest question about race and justice. After Ferguson, everyone mutually agreed it was about politics.

[…]

Anyone who thought that the question in that poll was just a simple honest question about criminal justice was very quickly disabused of that notion. It was a giant Referendum On Everything, a “do you think the Blue Tribe is right on every issue and the Red Tribe is terrible and stupid, or vice versa?” And it turns out many people who when asked about criminal justice will just give the obvious answer, have much stronger and less predictable feelings about Giant Referenda On Everything.

In my last post, I wrote about how people feel when their in-group is threatened, even when it’s threatened with an apparently innocuous point they totally agree with:

I imagine [it] might feel like some liberal US Muslim leader, when he goes on the O’Reilly Show, and O’Reilly ambushes him and demands to know why he and other American Muslims haven’t condemned beheadings by ISIS more, demands that he criticize them right there on live TV. And you can see the wheels in the Muslim leader’s head turning, thinking something like “Okay, obviously beheadings are terrible and I hate them as much as anyone. But you don’t care even the slightest bit about the victims of beheadings. You’re just looking for a way to score points against me so you can embarrass all Muslims. And I would rather personally behead every single person in the world than give a smug bigot like you a single microgram more stupid self-satisfaction than you’ve already got.”

I think most people, when they think about it, probably believe that the US criminal justice system is biased. But when you feel under attack by people whom you suspect have dishonest intentions of twisting your words so they can use them to dehumanize your in-group, eventually you think “I would rather personally launch unjust prosecutions against every single minority in the world than give a smug out-group member like you a single microgram more stupid self-satisfaction than you’ve already got.”

When people regard every news story involving their side as a referendum on the truth value of their side’s ideology as a whole, it’s easy to see why they might have a hard time condemning wrongdoers on their own side, even when such criticism is deserved – or why they might struggle to give the other side credit when credit is due. This is what ideological tribalism is all about.

(Another quick disclaimer, by the way: You may be reading this at some point in the future where social issues like race aren’t as all-consuming – the big issue of your day might be a major war or financial crisis or something – but at the time of this writing, at least, they’re the main issues dominating the political discourse, so for better or worse, a lot of the quotations cited here are going to involve things like racial justice and neo-Nazi rallies and so forth. Having said that, for most of these examples you could substitute other contentious issues like capitalism vs. socialism or pro-choice vs. pro-life and they’d apply just as well.)

The irony here is that from a neutral outside standpoint, a person’s inability to notice their own double standards and openly admit when someone on their side acts wrongly reflects even worse on their side than if they simply shrugged and said, “Oh yeah, obviously that person doesn’t represent what I believe in and their actions are clearly counterproductive to the cause.” Someone who can’t bring themselves to acknowledge clear-cut cases of wrongdoing only makes themselves look guilty by association, and incapable of assessing things reasonably or impartially.

But within the tribalist mindset, “assessing things reasonably and impartially” isn’t always the goal. It’s not a matter of trying to dispassionately weigh different ideas to see which one is most accurate, like a judge at a boxing match – it’s more like being one of the boxers. You’re not a judge in the battle of ideas, you’re fighting in it – and when you’re in a fight, the point isn’t to make the right judgment; the point is to win. As Eliezer Yudkowsky writes:

Politics is an extension of war by other means. Arguments are soldiers. Once you know which side you’re on, you must support all arguments of that side, and attack all arguments that appear to favor the enemy side; otherwise it’s like stabbing your soldiers in the back – providing aid and comfort to the enemy. People who would be level-headed about evenhandedly weighing all sides of an issue in their professional life as scientists, can suddenly turn into slogan-chanting zombies when there’s a Blue or Green position on an issue.

And Jason Pargin agrees:

We don’t ingest facts to educate ourselves; we do it so that we have ammunition to use against the opposing tribe. Therefore, it doesn’t matter if we’re oversimplifying or skewing (we’ll point out that guns kill 30,000 Americans every year but omit that two-thirds of those are suicides) because we know our side is right and winning is all that matters.

There are much better ways of thinking, of course. According to Brennan’s theory of political behavior, there are three primary approaches a person might adopt when it comes to their ideological views – the “hobbit” approach, the “hooligan” approach, or the “vulcan” approach – and of these three, the third is decidedly better than the first two. It’s basically the equivalent of putting yourself in the role of judge rather than fighter. Unfortunately, though, the majority of people never make it to the more advanced “vulcan” category; they tend to get bogged down at the “hobbit” or “hooligan” stage:

- Hobbits are mostly apathetic and ignorant about politics. They lack strong, fixed opinions about most political issues. Often they have no opinions at all. They have little, if any, social scientific knowledge; they are ignorant not just of current events but also of the social scientific theories and data needed to evaluate as well as understand these events. Hobbits have only a cursory knowledge of relevant world or national history. They prefer to go on with their daily lives without giving politics much thought. In the United States, the typical nonvoter is a hobbit.

- Hooligans are the rabid sports fans of politics. They have strong and largely fixed worldviews. They can present arguments for their beliefs, but they cannot explain alternative points of view in a way that people with other views would find satisfactory. Hooligans consume political information, although in a biased way. They tend to seek out information that confirms their preexisting political opinions, but ignore, evade, and reject out of hand evidence that contradicts or disconfirms their preexisting opinions. They may have some trust in the social sciences, but cherry-pick data and tend only to learn about research that supports their own views. They are overconfident in themselves and what they know. Their political opinions form part of their identity, and they are proud to be a member of their political team. For them, belonging to the Democrats or Republicans, Labor or Tories, or Social Democrats or Christian Democrats matters to their self-image in the same way being a Christian or Muslim matters to religious people’s self-image. They tend to despise people who disagree with them, holding that people with alternative worldviews are stupid, evil, selfish, or at best, deeply misguided. Most regular voters, active political participants, activists, registered party members, and politicians are hooligans.

- Vulcans think scientifically and rationally about politics. Their opinions are strongly grounded in social science and philosophy. They are self-aware, and only as confident as the evidence allows. Vulcans can explain contrary points of view in a way that people holding those views would find satisfactory. They are interested in politics, but at the same time, dispassionate, in part because they actively try to avoid being biased and irrational. They do not think everyone who disagrees with them is stupid, evil, or selfish.

These are ideal types or conceptual archetypes. Some people fit these descriptions better than others. No one manages to be a true vulcan; everyone is at least a little biased. Alas, many people fit the hobbit and hooligan molds quite well. Most Americans are either hobbits or hooligans, or fall somewhere in the spectrum in between.

Brennan’s description of hooligans as being like “rabid sports fans” is particularly appropriate here. One of the first studies to uncover the extent of this psychological bias actually involved gauging fans’ reactions to a football game, as Tim Harford describes:

An early indicator of how tribal our logic can be was a study conducted in 1954 by Albert Hastorf, a psychologist at Dartmouth, and Hadley Cantril, his counterpart at Princeton. Hastorf and Cantril screened footage of a game of American football between the two college teams. It had been a rough game. One quarterback had suffered a broken leg. Hastorf and Cantril asked their students to tot up the fouls and assess their severity. The Dartmouth students tended to overlook Dartmouth fouls but were quick to pick up on the sins of the Princeton players. The Princeton students had the opposite inclination. They concluded that, despite being shown the same footage, the Dartmouth and Princeton students didn’t really see the same events. Each student had his own perception, closely shaped by his tribal loyalties. The title of the research paper was “They Saw a Game”.

A more recent study revisited the same idea in the context of political tribes. The researchers showed students footage of a demonstration and spun a yarn about what it was about. Some students were told it was a protest by gay-rights protesters outside an army recruitment office against the military’s (then) policy of “don’t ask, don’t tell”. Others were told that it was an anti-abortion protest in front of an abortion clinic.

Despite looking at exactly the same footage, the experimental subjects had sharply different views of what was happening – views that were shaped by their political loyalties. Liberal students were relaxed about the behaviour of people they thought were gay-rights protesters but worried about what the pro-life protesters were doing; conservative students took the opposite view. As with “They Saw a Game”, this disagreement was not about the general principles but about specifics: did the protesters scream at bystanders? Did they block access to the building? We see what we want to see – and we reject the facts that threaten our sense of who we are.

Brennan drives home the parallel between sports fandom and political partisanship:

[Ilya] Somin has a good analogy: some people are political fans. Sports fans enjoy rooting for a team. […] Sports fans, however, also tend to evaluate information in a biased way. They tend to “play up evidence that makes their team look good and their rivals look bad, while downplaying evidence that cuts the other way.”

This is what tends to happen in politics. People tend to see themselves as being on team Democrat or team Republican, team Labor or team Conservative, and so on. They acquire information because it helps them root for their team and against their hated rivals. If the rivalry between Democratic and Republican voters sometimes seems like the rivalry between Red Sox and Yankees fans, that’s because from a psychological point of view, it very much is.

Holding unconscious double standards like this may not be such a big deal when the context is a meaningless sports game. Nobody will hold it against you if you’re a “homer” who supports your team even if it means indulging in a little self-delusion. But this kind of team spirit can have devastating consequences when it seeps into other areas of contention, such as international relations, where the stakes can be matters of life and death. To quote Orwell again:

All nationalists have the power of not seeing resemblances between similar sets of facts. A British Tory will defend self-determination in Europe and oppose it in India with no feeling of inconsistency. Actions are held to be good or bad, not on their own merits, but according to who does them, and there is almost no kind of outrage – torture, the use of hostages, forced labour, mass deportations, imprisonment without trial, forgery, assassination, the bombing of civilians – which does not change its moral colour when it is committed by ‘our’ side.

Troublingly, this pattern permeates practically every contentious issue in our society, regardless of whether the stakes are low or high. Simler and Hanson give a more recent example:

During the Bush administration, U.S. antiwar protestors – most of whom were liberal – justified their efforts in terms of the harms of war. And yet when Obama took over as president, they drastically reduced their protests, even though the wars in Iraq and Afghanistan continued unabated. All this suggested an agenda that was more partisan than pacifist.

McRaney mentions a similar case relating to economic attitudes:

Remember all that handwringing about economic insecurity in red states as political motivation to vote one way or the other? Recent analysis by behavioral economist Peter Atwater has found that almost all of that economic insecurity has evaporated since Trump became president, despite the fact that nothing has changed economically in those places where it was once a supposedly major concern. This suggests that people’s political behavior was driven by tribal psychology, like it usually is, but justified by whatever seems salient at the time, like it usually is. Once their “tribal mood,” as he put it, improved, so did their feelings about the economy.

And Zaid Jilani adds:

“Americans are dividing themselves socially on the basis of whether they call themselves liberal or conservative, independent of their actual policy differences,” [according to Lilliana Mason].

[…]

She noted, for instance, that Americans who identify most strongly as conservative, whether they hold more left-leaning or right-leaning positions on major issues, dislike liberals more than people who more weakly identify as conservatives but may hold very right-leaning issue positions.

The loose connection some voters have with policy preferences has become apparent in recent years. Donald Trump managed to flip a party from support of free trade to opposition to it by merely taking the opposite side of the issue. Democrats, meanwhile, mocked Mitt Romney in 2012 for calling Russia the greatest geopolitical adversary of the United States, but now have flipped and see Russia as exactly that. Regarding health care, the structure of the Affordable Care Act was initially devised by the conservative Heritage Foundation and implemented in Massachusetts as “Romneycare.” Once it became Obamacare, the Republican team leaders deemed it bad, and thus it became bad.

Mason believes the implications of such shallow divisions between people could make the work of democracy harder. If your goal in politics is not based around policy but just defeating your perceived enemies, what exactly are you working toward? (Is it any surprise there is an entire genre of campus activism dedicated to simply upsetting your perceived political opponents?)

“The fact that even this thing that’s supposed to be about reason and thoughtfulness and what we want the government to do, the fact that even that is largely identity-powered, that’s a problem for debate and compromise and the basic functioning of democratic government. Because even if our policy attitudes are not actually about what we want the government to do but instead about who wins, then nobody cares what actually happens in the government,” Mason said. “We just care about who’s winning in a given day. And that’s a really dangerous thing for trying to run a democratic government.”