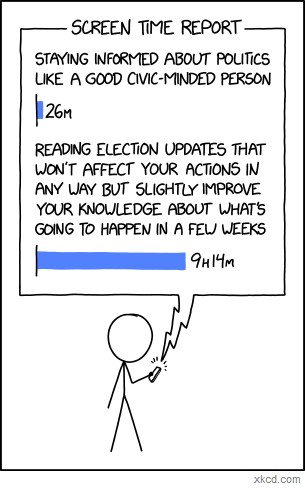

I remember hearing this Eleanor Roosevelt quotation back when I was a teenager, which went: “Small minds discuss people. Average minds discuss events. Great minds discuss ideas.” Of course, like so many other quotations attributed to Eleanor Roosevelt or Abraham Lincoln or Albert Einstein or Mahatma Gandhi, this one turned out not to be genuine; apparently the actual source was someone named Henry Thomas Buckle who I don’t think most people have ever even heard of – which is kind of ironic (or perhaps strangely fitting), that the idea is what has ultimately been remembered and not the person who said it. But at any rate, for whatever reason, it’s an idea that has stuck with me over the years. Granted, it’s not exactly the kind of thing you can take as an absolute (at least not if you want to avoid seeming pretentious); obviously, discussing people and events can be plenty valuable sometimes. Still, I think the basic point that it’s trying to convey – that it’s important to think about things on the level of the underlying concepts, not just the surface-level stuff – is a good one; so I like it as something to try and aspire to, at least, if not necessarily something to judge others by. If nothing else, I like that it so clearly draws the distinction between talking about people and events versus talking about ideas, because as soon as you put a label on it like that, you really start to notice just how little time we actually do spend talking about ideas. We talk about people and events constantly – you can turn on your TV or computer every day and spend hours seeing all the latest news stories and political scandals. And there are plenty of people who do exactly that; being an “information junkie” is the fashionable thing to do nowadays. But I think there’s a difference between information and knowledge; and having a head full of information isn’t the same thing as having a head full of ideas or expertise. 
Obsessively scrolling through Twitter and following every piece of Washington gossip won’t make you more knowledgeable in the fields of economics or foreign policy than spending that same amount of time reading good books on those subjects. Staying up to the minute on every new consumer technology gimmick won’t make you more knowledgeable in the fields of telecommunications or software development than spending the same amount of time actually learning the systems behind those technologies. Being “well-informed,” even maximally well-informed, doesn’t actually make you more knowledgeable in any real sense if the things you’re well-informed about don’t have any deep significance outside of their immediate context.

I probably don’t need to give a long list of examples here; you can see what I mean for yourself. Watch a random CNN segment from ten years ago – or hell, even ten weeks ago – and see how much of it is information that you think is still important for you to have in your head today. Chances are (barring some major historical event that you’d have heard about whether you closely followed the news or not – i.e. something that wouldn’t properly be considered part of the usual “news cycle” at all), it will be almost none of it. The facts will be outdated; the people being discussed will no longer be relevant; and a disproportionate share of the coverage will simply be dedicated to explaining ever-finer bits of minutiae – what the campaign manager is saying about the latest poll numbers out of New Hampshire, whether the press secretary might be stepping down and who their potential replacement could be, etc. – not necessarily to explaining why these minutiae should change your broader understanding of the world in any meaningful way. (Hence Nassim Taleb’s quip: “To be completely cured of newspapers, spend a year reading the previous week’s newspapers.”)

The same goes for most social media and blog discussions. As Shane Parrish points out, when most of what you see in your feed is coming from people who are manically churning out dozens of posts and Tweets per day in response to every little thing that happens, chances are you aren’t getting a whole lot of deep ideas from them; for the most part, all you’re getting are reactions – people’s most surface-level responses to whatever happens to be right in front of them at the moment.

(Case in point: As I’m writing this in mid-2018, the headline everybody’s obsessing over on CNN and Twitter is “McCain Wife Fires Back at White House Aide Who Mocked Her Husband.” We’ll see if this story is still important enough to dominate the national conversation by the time I publish this post, but something tells me it won’t be.)

I don’t entirely blame the news for being the way it is, mind you – as Parrish notes, “News is by definition something that doesn’t last.” Nor do I blame other outlets like social media and constantly-updating blogs for providing such transient content themselves; that’s what they were designed for. It’s not so much that I’m bothered by the fact that so little of this information content will matter ten years from now – it’s more the fact that we choose to dedicate such a disproportionate amount of our mental bandwidth and public discussion to it, often at the expense of deeper and more enduring forms of knowledge. I obviously don’t think that all news coverage is a waste of time, or that we should stop paying attention to it altogether (especially if it’s something major like a war or a pandemic). It should go without saying that knowing what’s happening in the world is a necessary first step to understanding it. But I do think it’s worth noticing what a remarkable proportion of news coverage – as well as online commentary, social media conversations, and all the other information feeds we engage with on a daily basis – is just “empty calories,” so to speak. It only tells us “what’s happening in the world” in the most literal, superficial sense; it doesn’t help us understand the underlying phenomena that give rise to these surface-level events, or give us any deeper insight into the broader forces at work.

I suppose part of the reason for this lack of depth is just that the superficial things are so much more accessible, and easier to understand, and easier to bring into a conversation, than the more complex ideas underlying them. It’s easier to have a strong opinion on a politician making some dumb gaffe than it is to determine whether her fiscal policies are fundamentally sound. It’s easier to condemn a religious figure for cheating on his wife than it is to explain why his religious worldview represents a flawed basis for morality. People like being able to weigh in on the issues of the day – we all want to feel like we’re smart and informed and can make a contribution to the conversation – and consequently, whichever issues of the moment allow the greatest number of people to jump into the conversation and take a strong stance are the ones that inevitably get all the attention. But because most of us don’t have a deep store of esoteric knowledge about complex socio-political, economic, and philosophical issues, what this means is that most of the topics that dominate the social conversation are the ones that can appeal to the lowest common denominator – i.e. the shallowest and most superficial topics.

There’s actually a term for this phenomenon, it turns out: Parkinson’s Law of Triviality. What it says is that the amount of discussion generated by a particular topic will be inversely proportional to the complexity of that topic; in other words, the more complicated and difficult to understand a topic is, the less people will want to take a stance on it, but the simpler it is to understand, the more people will be willing to weigh in (and to do so vocally and passionately). This is also called “bikeshedding,” after the example given by C. Northcote Parkinson himself, in which a committee is given the task of approving plans for a 300-million-dollar nuclear power plant, and rather than spending their time debating the cost and proposed design of the reactor itself – a tremendously important issue – they pass the resolution in two minutes (because after all, nuclear reactors are expensive and hard to understand), and instead get hung up on the much easier-to-understand issue of a proposed bike shed to be built nearby, debating for hours over what type of materials should be used, whether the few hundred dollars to build the bike shed could be better appropriated elsewhere, and so forth. As the Wikipedia article summarizes it: “A reactor is so vastly expensive and complicated that an average person cannot understand it, so one assumes that those who work on it understand it. On the other hand, everyone can visualize a cheap, simple bicycle shed, so planning one can result in endless discussions because everyone involved wants to add a touch and show personal contribution.”

Unfortunately, when it comes to the really important topics in our society – social issues, religion, politics, etc. – I feel like a lot of the conversations we’re having are just glorified bikeshedding. We’re happy to spend hours every day talking about what the world’s major figures and groups have been up to lately, and what events have been making headlines; but like the nuclear power plant in the analogy, the fundamental ideas and philosophies motivating those people and driving those events are simply glossed over. It’s assumed that they’ve already been taken care of. Whether in news reports, blog posts, or social media discussions, it’s simply taken for granted that everyone has already figured out exactly where they stand on the ideas, worked out which philosophies they believe are the correct ones, and sorted themselves into opposing sides on every possible topic – and that therefore all that’s left to do is just to set the opposing sides loose against each other and start keeping score, in as much detail as possible, of which side is winning and which side is losing on any given day.

The thing is, though, I don’t think this assumption is actually true. I don’t think most people actually have thought through all the exact details of their own ideologies – not entirely. They may have convinced themselves that they have a firm grasp on things because they’ve read a lot of articles and watched a lot of videos on the relevant subjects (see Will Schoder’s video here for a great explanation of how this can happen). But I think if you asked them to take out a pen and paper and outline the framework of their worldview from the ground up – to explain their exact stance on every single issue (along with their justifications) from first principles, without referring to some other source of authority – most people wouldn’t be able to do it; or at least, they’d have to do a lot of thinking about it first. There would be a lot of beliefs that weren’t quite fully formulated, that didn’t necessarily connect to each other or fit together in a clear way, or that were adopted on an ad hoc basis. (Kevin Simler and Robin Hanson illustrate this by pointing out that “when people are asked the same policy question a few months apart, they frequently give different answers – not because they’ve changed their minds, but because they’re making up answers on the spot, without remembering what they said last time. It is even easy to trick voters into explaining why they favor a policy, when in fact they recently said they opposed that policy.”) One thing is for sure – hardly anyone who categorizes themselves under some ideological label like “Democrat” or “Republican” or “Christian” or “Muslim” would have come up with that exact bundle of political or religious beliefs if they’d had to formulate everything themselves from scratch.

But that’s the thing; most people never go to the trouble of building their own personal worldview, on an issue by issue basis, from the ground up. They don’t feel like they have to – because they can simply select one of the pre-constructed ideologies that already exist (Christianity, Liberalism, etc.) and adopt that ideology as their own. Why consider each individual issue on its own merits and spend all that time outlining your own ideological framework, when there are pre-packaged worldviews available that have already done the work of answering every question for you? You can just peg your own worldview to one of those and say, “There, whatever that ideology says is what I believe.”

This strikes me as weird, frankly. You’d think that in a country of 325 million people, there’d be 325 million different worldviews. Given the thousands of different topics it’s possible to have an opinion on, you’d think there would be an incredibly wide range of variation, with some people believing in gun control but not creationism or financial regulation or climate change, others believing in creationism and climate change but not gun control or financial regulation, still others believing in financial regulation and climate change but not gun control or creationism, and so on. But instead, what we see is this pervasive cultural mindset that there are only a few possible combinations of views that a person can reasonably hold – only two, really, in most contexts. In the political sphere, you’re either a Republican or a Democrat. In the religious sphere, you either belong to whatever religion you were born into or you aren’t religious at all. There are only considered to be two possible ideologies for a person to sort themselves into – only two possible answers to every problem – and nothing else even computes. As Orson Scott Card writes:

We are fully polarized – if you accept one idea that sounds like it belongs to either the blue or the red, you are assumed – nay, required – to espouse the entire rest of the package, even though there is no reason why supporting the war against terrorism should imply you’re in favor of banning all abortions and against restricting the availability of firearms; no reason why being in favor of keeping government-imposed limits on the free market should imply you also are in favor of giving legal status to homosexual couples and against building nuclear reactors. These issues are not remotely related, and yet if you hold any of one group’s views, you are hated by the other group as if you believed them all; and if you hold most of one group’s views, but not all, you are treated as if you were a traitor for deviating even slightly from the party line.

(Quick disclaimer, by the way: You’ll notice me using a lot of quotations in this post. You’ll also notice that some of them come from highly reputable sources, while others, not so much. But I like to take good ideas wherever I find them, and a lot of great stuff has been written on this topic (even from writers and commentators you might not expect), so I’d rather share those insights with you directly than clumsily try to rephrase them into my own words when it’s not necessary – even if the result is that this post ends up being more of a compilation of interesting ideas I’ve gathered from other people than just a product of my own thoughts.)

Why is it so black and white? Why do worldviews tend to cluster together into only two flavors like this? Why is it that, if I told you someone’s opinion on climate change, you could probably predict their views on gun control, gay marriage, and so on, even though these topics are completely unrelated? Well, one possible answer is that although the topics might not be innately linked, they can become strongly linked due to how frequently they’re associated with each other. As David McRaney writes:

Symbols are a big part of your life thanks to the associative architecture of your brain. When I write a terrible romance novel line such as “It should have been obvious she was born in Africa, she had a beautifully long, slender neck not unlike a…” you can finish my sentence because your brain long ago formed a connection to the words long, slender, neck, and Africa. Neuroscientists call this a semantic net – every word, image, idea, and feeling is associated with everything else, like an endless tree growing in every direction at once. When you smell popcorn, you think of the cinema. When you hear a Christmas song, you think of Christmas trees.

Scott Alexander elaborates on how this idea could apply to socio-political issues:

Little flow diagram things make everything better. Let’s make a little flow diagram thing.

[Say] we have our node “Israel”, which has either good or bad karma [depending on your political opinions]. Then there’s another node close by marked “Palestine”. We would expect these two nodes to be pretty anti-correlated. When Israel has strong good karma, Palestine has strong bad karma, and vice versa.

Now suppose you listen to Noam Chomsky talk about how strongly he supports the Palestinian cause and how much he dislikes Israel. One of two things can happen:

“Wow, a great man such as Noam Chomsky supports the Palestinians! They must be very deserving of support indeed!”

or

“That idiot Chomsky supports Palestine? Well, screw him. And screw them!”

So now there is a third node, Noam Chomsky, that connects to both Israel and Palestine, and we have discovered it is positively correlated with Palestine and negatively correlated with Israel. It probably has a pretty low weight, because there are a lot of reasons to care about Israel and Palestine other than Chomsky, and a lot of reasons to care about Chomsky other than Israel and Palestine, but the connection is there.

I don’t know anything about neural nets, so maybe this system isn’t actually a neural net, but whatever it is I’m thinking of, it’s a structure where eventually the three nodes reach some kind of equilibrium. If we start with someone liking Israel and Chomsky, but not Palestine, then either that’s going to shift a little bit towards liking Palestine, or shift a little bit towards disliking Chomsky.

Now we add more nodes. Cuba seems to really support Palestine, so they get a positive connection with a little bit of weight there. And I think Noam Chomsky supports Cuba, so we’ll add a connection there as well. Cuba is socialist, and that’s one of the most salient facts about it, so there’s a heavily weighted positive connection between Cuba and socialism. Palestine kind of makes noises about socialism but I don’t think they have any particular economic policy, so let’s say very weak direct connection. And Che is heavily associated with Cuba, so you get a pretty big Che – Cuba connection, plus a strong direct Che – socialism one. And those pro-Palestinian students who threw rotten fruit at an Israeli speaker also get a little path connecting them to “Palestine” – hey, why not – so that if you support Palestine you might be willing to excuse what they did and if you oppose them you might be a little less likely to support Palestine.

Back up. This model produces crazy results, like that people who like Che are more likely to oppose Israel bombing Gaza. That’s such a weird, implausible connection that it casts doubt upon the entire…

Oh. Wait. Yeah. Okay.

I think this kind of model, in its efforts to sort itself out into a ground state, might settle on some kind of General Factor Of Politics, which would probably correspond pretty well to the left-right axis.

As he sums up, the “theory is that everything in politics is mutually reinforcing.” So if, say, you start off only having one or two main issues that you really care about (like abortion or gun control), you’ll naturally be more receptive toward sources that agree with you on those issues, and will end up absorbing a lot of their opinions on other issues as well (like taxes, foreign policy, gay marriage, etc.) through osmosis. Acquiring one particular belief or set of beliefs will usually mean that certain others come along for the ride. And because everyone else – including the very sources you’re taking your cues from – is doing the same thing, associations between different ideas that might start off fairly weak can accumulate more weight as like-minded people influence and are influenced by each other in turn. People within the same web of influence can reinforce each other’s conceptual connections so much that it causes a consensus cluster of beliefs to form, and the result is that anyone who adopts one of the beliefs within a particular cluster will typically be much more likely to adopt the entire rest of the cluster as well, since every source they encounter that opposes abortion and gun control will also tend to oppose higher taxes and gay marriage, and vice-versa. Ultimately, just by listening to “the people who know what they’re talking about” – i.e. the people who agree with you on your core issues – you can easily end up pigeonholing yourself neatly into the same standard left-right dichotomy as everyone else without even meaning to.
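For the programmers in the audience, here is a rough sketch of what that kind of "settling" network might look like in Python. To be clear, this is my own toy illustration and not anything from Alexander's post: the edge weights, the `tanh` squashing, the update rate, and the names `EDGES`, `neighbors`, and `settle` are all made up for the example. Each concept holds a "karma" score between -1 and 1, and on every step each score drifts toward whatever its neighbors imply.

```python
import math

# Signed, weighted associations between concepts. Positive means "liking one
# pulls you toward liking the other"; negative means the opposite.
# All weights are invented for illustration only.
EDGES = {
    ("Israel", "Palestine"): -0.8,
    ("Chomsky", "Palestine"): 0.3,
    ("Chomsky", "Israel"): -0.3,
    ("Cuba", "Palestine"): 0.3,
    ("Chomsky", "Cuba"): 0.3,
    ("Cuba", "socialism"): 0.9,
    ("Palestine", "socialism"): 0.1,
    ("Che", "Cuba"): 0.8,
    ("Che", "socialism"): 0.7,
}


def neighbors(edges):
    """Build an undirected adjacency list: node -> [(other_node, weight)]."""
    adj = {}
    for (a, b), weight in edges.items():
        adj.setdefault(a, []).append((b, weight))
        adj.setdefault(b, []).append((a, weight))
    return adj


def settle(karma, edges, rate=0.2, steps=200):
    """Nudge every node toward the opinion its neighbors imply, repeatedly.

    `karma` maps node names to starting scores; nodes that appear in `edges`
    but not in `karma` start out neutral (0.0).
    """
    adj = neighbors(edges)
    karma = {node: karma.get(node, 0.0) for node in adj}
    for _ in range(steps):
        updated = {}
        for node, links in adj.items():
            # Weighted sum of what the neighbors "say" about this node,
            # squashed into the range (-1, 1).
            pull = math.tanh(sum(w * karma[other] for other, w in links))
            # Move part of the way from the current score toward that pull.
            updated[node] = (1 - rate) * karma[node] + rate * pull
        karma = updated
    return karma


if __name__ == "__main__":
    # Someone who starts out with exactly one opinion: they like Che.
    final = settle({"Che": 1.0}, EDGES)
    for node, score in sorted(final.items(), key=lambda item: item[1]):
        print(f"{node:>10}: {score:+.2f}")
```

With these particular made-up weights, a person who starts with nothing but a fondness for Che should end up with a negative score for Israel and positive scores for everything else, which is the "weird, implausible connection" from the quoted passage falling out of nothing more than local associations. Different weights would of course settle differently; the point is only that a single starting opinion can propagate through the whole web.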

In a way, this relates back to Parkinson’s example of the nuclear reactor, in which everyone quickly comes to an agreement because they all assume someone more qualified has already done the necessary research to justify it. If you encounter a new ideological issue that you haven’t considered very deeply for yourself just yet, or haven’t done much research on, but you still want to have an opinion and appear knowledgeable (and who doesn’t want to appear knowledgeable?), it’s easier to simply slot yourself into whichever one of the pre-constructed ideologies that already exist (and have already figured out their answer to each of the hundreds of issues in question) you more closely identify with, and then adopt that faction’s view as your own, than it is to start from scratch and try to build your own conclusions from the ground up – doing your homework on each of the issues yourself, one by one, and cobbling together your own worldview that may or may not fit perfectly into the black-and-white paradigm used by everyone else. Instead of becoming knowledgeable the hard way, you can be knowledgeable by proxy, claiming your side’s collective expertise as your own. Instead of using the knowledge of trusted commentators to help you form your beliefs and fill in gaps in your worldview (a good and reasonable thing to do), you can use it as a means to skip that step entirely and pick a worldview that’s already been formed.

Like a student taking a pop quiz, it’s easier to answer the question as if it were multiple-choice than to answer it in a free-response essay format. With a multiple-choice question, you don’t have to show your work or explain your reasoning; all you have to do is select the right answer, and your competence is demonstrated. In terms of ideology, likewise, it’s easier simply to self-identify as a Republican or a Democrat or a Christian or a Muslim or whatever than to explain to anyone who asks that although you agree with one faction on issues A, B, and C, you like what this other faction has to say about issues D, E, and F, and on certain issues like G, H, and I you don’t really identify with any of the traditional factions and have formed your own particular views. The latter approach is still perfectly possible, obviously – there are a lot of smart people with independently-constructed worldviews that don’t fit neatly into the traditional dichotomies – but the former approach is undoubtedly the more convenient one.

This kind of ideological shortcut-taking – where everyone lumps themselves (and each other) into one of two groups rather than operating on a piecemeal basis – isn’t always a bad thing. Jason Brennan grants, for instance, that it can be useful for quickly identifying which political candidates’ views are closest to your own:

In modern democracies, most candidates join political parties. Political parties run on general ideologies and policy platforms. Individual candidates may have their own idiosyncrasies and preferences, but they have a strong tendency to fall in line and do what the party wants.

Many political scientists think party systems reduce the epistemic burdens of voting. Voters can get by reasonably well by treating all Republicans and Democrats as two homogeneous groups. In an election, instead of learning what this particular Republican and Democrat want to do, I can treat the candidates as standard Republicans and Democrats, and vote accordingly. This kind of statistical discrimination leads to mistakes on an individual basis, but on a macro level, with 535 members of Congress, these individual mistakes are likely to cancel out. The party system thus provides voters with a “cognitive shortcut”; it allows them to act as if they were reasonably well informed.

There’s much to be said for this line of reasoning. So long as voters tend to have reasonably accurate stereotypes of what policies the two major political parties tend to prefer, then voters as a whole can perform well by relying on such stereotypes.

On a more basic level, there’s also the simple fact that being part of a group is generally more fun and socially rewarding than being an unaffiliated loner without any allies or allegiances. There is power in numbers, and it can just feel so satisfying to belong to a group of like-minded people who will agree with you on all the important issues and praise you for agreeing with them in turn. People love being part of a team; so whenever there’s a contentious issue floating around, they will always want to take a side, even if there are only two sides to choose from. For a lot of people, this is probably the biggest reason of all to want to slot themselves into pre-constructed ideologies rather than constructing their own – because the ideas themselves aren’t actually their main consideration.

The problem with all this, though, is that once you take a side and plant your flag firmly in that camp, you develop a sort of “brand loyalty,” and your chosen affiliation starts to affect what you believe, instead of the other way around. As Carol Tavris and Elliot Aronson put it:

Once people form [an ideological] identity, usually in young adulthood, the identity does their thinking for them. That is, most people do not choose [an ideology] because it reflects their views; rather, once they choose [an ideology], its policies [or doctrines] become their views.

I could give countless examples of this. I remember hearing one case, for instance, of a Jewish man who casually mentioned one day that he didn’t believe in Hell anymore, and when asked why, replied that he found out that Judaism doesn’t include a belief in Hell, so since he was Jewish, then that wasn’t a part of what he believed anymore. Balioc gives a similar common example of “a self-identified libertarian asking ‘can a libertarian believe X?’ rather than just figuring out whether X is a reasonable thing to believe.” Steven Pinker has mentioned his encounters with people who held very strong opinions either for or against the Trans-Pacific Partnership (a trade agreement from 2016), but when asked to give their reasoning, couldn’t actually explain what the TPP even was – they just knew that their social media feeds were adamant about supporting it or opposing it, and so they jumped on the bandwagon as well. And Tavris and Aronson themselves give some even more dramatic political examples:

[Social psychologist Lee Ross has gained valuable insights] from his laboratory experiments and from his efforts to reduce the bitter conflict between Israelis and Palestinians. Even when each side recognizes that the other side perceives the issues differently, each thinks that the other side is biased while they themselves are objective, and that their own perceptions of reality should provide the basis for settlement. In one experiment, Ross took peace proposals created by Israeli negotiators, labeled them as Palestinian proposals, and asked Israeli citizens to judge them. “The Israelis liked the Palestinian proposal attributed to Israel more than they liked the Israeli proposal attributed to the Palestinians,” he says. “If your own proposal isn’t going to be attractive to you when it comes from the other side, what chance is there that the other side’s proposal is going to be attractive when it actually comes from the other side?”

Closer to home, social psychologist Geoffrey Cohen found that Democrats will endorse an extremely restrictive welfare proposal, one usually associated with Republicans, if they think it has been proposed by the Democratic Party, and Republicans will support a generous welfare policy if they think it comes from the Republican Party. Label the same proposal as coming from the other side, and you might as well be asking people if they will favor a policy proposed by Osama bin Laden. No one in Cohen’s study was aware of their blind spot – that they were being influenced by their party’s position. Instead, they all claimed that their beliefs followed logically from their own careful study of the policy at hand, guided by their general philosophy of government.

This is the central flaw of the whole “taking sides” approach. Once you’ve decided that the question “Which ideology is right?” must be a multiple-choice question and not a free-response one, it means that the moment you pick an answer to that question and take a side, your journey of inquiry is done. You no longer feel the need to examine any new facts for yourself, because you already know everything you need to know – you’ve already determined which side is the one that’s correct about everything – so you can reject any contradictory arguments in advance without even hearing them. If any new fact does happen to creep into your awareness which contradicts what your side says is true, well then, all that means is that you have to rationalize the contradiction away somehow – either by coming up with some explanation for why the new fact is actually false or misleading, or by concocting some elaborate interpretation of your side’s ideology that demonstrates how your side has actually been properly accounting for this fact all along, or by some other equally convoluted method. You may not know exactly what the explanation is for why your side is right on some particular given issue, but you know that in the overall scheme of things, your side is the right one, so therefore some explanation must exist, even if you don’t know the particulars off the top of your head. What you can’t do, though, is just admit that in some cases the facts do appear to point in a direction other than your chosen side – much less admit that you have no problem accepting this because you have your own personal set of beliefs that don’t always map perfectly onto one side in the first place. Once you’ve picked a side, it becomes your answer to every problem. It becomes part of your identity – almost like your nationality or something – and it therefore has to be protected from any new information that might threaten it.