
So all right, let’s lay some groundwork. Whenever you’re talking about ideologically charged issues like politics or religion, I think it’s always a good idea to try to adhere to certain meta-ideological principles – sort of general-purpose rules of thumb that can be broadly applied across all kinds of different contexts. For me, the first and foremost of these is that you should “do what works” – i.e. you should take cues from real-world facts and experiences rather than relying on pure theory alone. When you’re trying to figure out what would be the best system of government, or the best system of economics or social organization or whatever, it can be incredibly easy to become enamored with some fantastic utopian system you’ve discovered that seems to explain everything and could theoretically fix all the world’s problems – e.g. communism, anarchism, objectivism, that kind of thing. (I’ll admit to being particularly susceptible to this kind of thinking myself.) But a lot of ideas sound flawless in theory and then turn out to break down for unforeseen reasons in practice – so if you look around and find that your favored idea has never actually been implemented successfully in the real world, that’s a red flag that the system might not be as flawless as it seems in your mind. There may be some hidden variables there that you haven’t accounted for. A better approach, then, is to see what actually has been implemented successfully in the real world – what’s actually working in the parts of the world where people are the happiest, healthiest, and most successful – and then emulate that. Or at least use those ideas as a starting point.

The Wikipedia entry for “empiricism” sums it up pretty well:

Empiricism in the philosophy of science emphasises evidence, especially as discovered in experiments. It is a fundamental part of the scientific method that all hypotheses and theories must be tested against observations of the natural world rather than resting solely on a priori reasoning, intuition, or revelation.

Empiricism, often used by natural scientists, says that “knowledge is based on experience” and that “knowledge is tentative and probabilistic, subject to continued revision and falsification”. Empirical research, including experiments and validated measurement tools, guides the scientific method.

To me, this seems like about as solid a foundation for an accurate worldview as you can get. Base your ideas on what’s verifiably true. Do what works. And have enough intellectual humility to know that “what works” might not always be immediately obvious, and that what you think should work in your head might not always map perfectly to the facts on the ground.

In that same vein, I think it’s important to realize that when you don’t have all of the expertise to make the most informed possible judgment on a specific topic (and in particular here I’m thinking of issues relating to the hard sciences), it’s usually smartest to entrust that judgment to those who do, and to take what the experts have to say as a starting point for your own beliefs. After all, it’s impossible to know all the relevant information you’d need to judge every issue as accurately as possible; there will be a lot of cases where others are more knowledgeable or more qualified to determine the truth of a particular matter than you are – and in those cases, it’s just a fact that their judgment therefore has a better chance of being accurate than yours does. (Pretending otherwise is how we end up with flat-earthers and anti-vaxxers telling people to “do their own research.”) If you think you’ve found some fundamental mistake in the way the world works that everyone else is overlooking, it’s possible that you really have made an important discovery, but it’s more likely that you’re the one who’s overlooked something. Acknowledging this fact isn’t an admission of stupidity; it’s just a recognition that people have different specialties and different areas of expertise, and the most accurate worldview will be one that pools together all the different pieces of knowledge from their most reliable sources.

There’s a concept in economics called the “efficient market hypothesis,” which Alexander explains like this:

The market economy is very good at what it does, which is something like “exploit money-making opportunities” or “pick low-hanging fruit in the domain of money-making”. If you see a $20 bill lying on the sidewalk, today is your lucky day. If you see a $20 bill lying on the sidewalk in Grand Central Station, and you remember having seen the same bill a week ago, something is wrong. Thousands of people cross Grand Central every week – there’s no way a thousand people would all pass up a free $20. Maybe it’s some kind of weird trick. Maybe you’re dreaming. But there’s no way that such a low-hanging piece of money-making fruit would go unpicked for that long.

In the same way, suppose your uncle buys a lot of Google stock, because he’s heard Google has cool self-driving cars that will be the next big thing. Can he expect to get rich? No – if Google stock was underpriced (ie you could easily get rich by buying Google stock), then everyone smart enough to notice would buy it. As everyone tried to buy it, the price would go up until it was no longer underpriced. Big Wall Street banks have people who are at least as smart as your uncle, and who will notice before he does whether stocks are underpriced. They also have enough money that if they see a money-making opportunity, they can keep buying until they’ve driven the price up to the right level. So for Google to remain underpriced when your uncle sees it, you have to assume everyone at every Wall Street hedge fund has just failed to notice this tremendous money-making opportunity – the same sort of implausible failure as a $20 staying on the floor of Grand Central for a week.

In the same way, suppose there’s a city full of rich people who all love Thai food and are willing to pay top dollar for it. The city has lots of skilled Thai chefs and good access to low-priced Thai ingredients. With the certainty of physical law, we can know that city will have a Thai restaurant. If it didn’t, some entrepreneur would wander through, see that they could get really rich by opening a Thai restaurant, and do that. If there’s no restaurant, we should feel the same confusion we feel when a $20 bill has sat on the floor of Grand Central Station for a week. Maybe the city government banned Thai restaurants for some reason? Maybe we’re dreaming again?

And this concept can apply to ideas as well. He continues:

We can take this beyond money-making into any competitive or potentially-competitive field. Consider a freshman biology student reading her textbook who suddenly feels like she’s had a deep insight into the structure of DNA, easily worthy of a Nobel. Is she right? Almost certainly not. There are thousands of research biologists who would like a Nobel Prize. For all of them to miss a brilliant insight sitting in freshman biology would be the same failure as everybody missing a $20 on the floor of Grand Central, or all of Wall Street missing an easy opportunity to make money off of Google, or every entrepreneur missing a great market opportunity for a Thai restaurant. So without her finding any particular flaw in her theory, she can be pretty sure that it’s wrong – or else already discovered. This isn’t to say nobody can ever win a Nobel Prize. But winners will probably be people with access to new ground that hasn’t already been covered by other $20-seekers. Either they’ll be amazing geniuses, understand a vast scope of cutting-edge material, have access to the latest lab equipment, or most likely all three.

It’s for this reason that maintaining your intellectual humility is such a logical strategy when trying to figure out what’s true. Putting your own judgment above that of the expert consensus can be a valid approach if you happen to be in a situation where you really are one of those people with privileged information, expertise, or resources that nobody else has access to. But otherwise, presuming to be able to come up with the best answers on your own, from the comfort of your armchair, just isn’t typically as reliable a source of insight as judiciously referring to the knowledge of experts and getting your cues from the way things have been successfully implemented in the real world – trusting the wisdom of the intellectual market, so to speak.

If you’re getting your information from good sources, it turns out that expert consensus is actually a pretty darn reliable guide to knowing what works and what doesn’t. And if you can use that knowledge base as your benchmark, then you can start to spin off new ideas of your own and explore new ideological directions. But you’ve got to know what knowledge is already out there first.

Green shares his thoughts on the subject:

My life this week has been dictated by natural phenomena. As Montana has continued through an unseasonable hot and dry summer, my valley has been socked with smoke, sometimes enough that experts advise me not to leave my house […] and that got substantially worse when the nearest fire in Lolo National Forest flared and burned more than 9,000 acres in one evening. […] And then of course, this Monday there was the eclipse. One of the remarkable things about the eclipse is that we knew exactly when it was going to happen for decades in advance – we had enough lead time to get a bunch of eclipse glasses made and sent to gas stations all over the country; people booked hotel rooms and braved terrible traffic – though not before first making sure that the sun would be out in the place where they were going – and we trusted all of those things. I couldn’t tell you how people figured out when eclipses were going to show up before computers, but they did it. I also don’t know how scientists fight fires, or predict them, or know to tell me when I shouldn’t go outside to avoid damaging my lungs. But I do know that someone knows. Someone knows when the eclipse will happen; someone knows when fires will probably get worse; and they’ve had their work checked by other people who also know. I don’t have the space in my head to figure all these things out on my own; and like, good thing, because the story of human progress is not a story of every single person figuring out every single thing for themselves.

[…]

When telling people that I trust experts, I sometimes hear people respond that I’m committing a logical fallacy – appealing to authority. And this is a fallacy when making an argument; saying “But this expert says so” is not a good argument. But I’m not actually making an appeal to one person; I’m making an appeal to a process that has had a good deal of success at accurately explaining and predicting stuff. The expert is the proxy for the process. I’m making an appeal, not just to the people who have figured something out, but to all of the other people who I know are going to check their work. I’m making an appeal to statistics and logic and calculus and peer review. I’m making an appeal to science. And also, I’m often not making an argument – I’m not trying to convince people of what they should believe – I’m trying to explain that when it comes to things that I’m not that interested in, or capable of learning enough about, I’m happy to accept scientific consensus because it’s going to be a whole lot better than whatever hunch I happen to have. This isn’t trusting that they’re right; it’s accepting that people who study things for a living have a far greater chance of being right than someone who just feels like arguing with them. It’s not an appeal to authority; it’s like an appeal to sanity. I get worried about the current tendency of some to feel like they must confirm everything for themselves. That’s individualism taken to maybe a fatal extreme. Human progress is a story of building on the work of others, not every single person starting from scratch. I agree that trusting individuals because they’ve had the “expert” label applied to them can lead to trouble; but one of the great achievements of our culture, of our society, is the creation and refinement of robust systems for creating and identifying trustable expertise. Of course those systems can always be refined and improved, but those who think they can just be discarded… scare me. 
Watching these beautiful and terrible plumes of smoke flare above my town, I felt very grateful to those who study these things for a living so I do not have to.

Of course, all this talk about trusting experts naturally raises another question: How can you determine who the real experts are in the first place? After all, the flat-earthers and anti-vaxxers will insist that they’re the ones listening to the real experts, and that the so-called mainstream scientists everyone else listens to are actually just phonies and shills. How can you know which “experts” are actually legitimate and worthy of being trusted, and which are just quacks claiming to be experts? For that matter, how can you know how much to trust your own expertise? If you really do know better than the expert consensus on a particular topic, how can you tell?

Again, it all comes back to empiricism – making sure that the sources of information you’re drawing from and the experts you’re listening to are verifiably accurate. If you can pay attention not only to how each side of a particular debate is saying the world is, but also to what they’re predicting will happen in the future, then you can go back later and see whose predictions ended up being the most accurate, and thereby get a good feel for whose ideology most closely reflects reality.

(As it happens, there was actually a study done on this in 2011; researchers tested the predictions of a wide range of pundits and politicians, and found that Paul Krugman was the most accurate, Cal Thomas was the least accurate, and the more liberal-leaning prognosticators tended to do better than the conservative-leaning ones on average – suggesting that Krugman’s views are worth listening to, Thomas’s probably less so, and the liberal worldview more closely reflects reality in general than the conservative one. This is pretty convenient for me to accept, of course, since I tend to identify more closely with the liberal tribe than the conservative tribe most of the time anyway – but this is only one (possibly flawed or outdated) study; and if more studies came out showing that the conservative pundits were the ones making more accurate predictions now, it should make me want to shift the balance of my attention accordingly.)

Likewise, in the same way that you can track the predictions of different commentators and experts to see which ones are the most accurate, you can also keep a personal scorecard for your own predictions. If you think that the Fed’s policy of quantitative easing will lead to hyperinflation, or that President Obama’s election will lead to the mass confiscation of guns, or that the global supply of oil will peak by 2010, you should write out these predictions in advance, note your level of confidence in each of them, and then look back later to see how accurate you ended up being. Did you do better than the experts? Did you do better than random chance? Depending on your results, you can either be more confident or less confident in your own judgments, and you can accordingly give them more weight or less weight than you give the opinions of other commentators you’ve kept track of. Chances are, there will be at least a few experts whose predictive abilities outperform your own – and those are the people you should be listening to and learning from.
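
To make the scorecard idea concrete, here’s a minimal sketch in Python of one common way to grade yourself: the Brier score, which is just the average squared gap between the confidence you stated and what actually happened (0 is perfect, and always saying “50%” gets you exactly 0.25). This is only an illustration, not the method used in the 2011 study mentioned above; the `Prediction` class, the `brier_score` function, the confidence numbers, the filled-in outcomes, and the third placeholder entry are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    claim: str          # what you predicted, written down in advance
    confidence: float   # stated probability (0.0 to 1.0) that the claim comes true
    came_true: bool     # filled in later, once the outcome is known


def brier_score(predictions):
    """Mean squared gap between stated confidence and outcome (0 is perfect)."""
    if not predictions:
        raise ValueError("need at least one resolved prediction")
    total = 0.0
    for p in predictions:
        outcome = 1.0 if p.came_true else 0.0
        total += (p.confidence - outcome) ** 2
    return total / len(predictions)


# Hypothetical scorecard: confidences and outcomes are made up for illustration.
my_scorecard = [
    Prediction("Quantitative easing leads to hyperinflation", 0.80, False),
    Prediction("Global oil supply peaks by 2010", 0.60, False),
    Prediction("A placeholder claim that did come true", 0.70, True),
]

# Always answering "50%" scores exactly 0.25, so that is the chance baseline.
CHANCE_BASELINE = 0.25

my_score = brier_score(my_scorecard)
print(f"Brier score: {my_score:.3f} (chance baseline: {CHANCE_BASELINE})")
print("better than chance" if my_score < CHANCE_BASELINE else "no better than chance")
```

Lower is better, so you could run the same function over a pundit’s track record and compare the two numbers directly. The made-up scorecard here lands at roughly 0.36, which is worse than the 0.25 you’d get by shrugging and saying “50%” every time, and that’s exactly the kind of result that should make you less confident in your own hunches.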

There are a lot of people who think that the “expert consensus” is always a product of corruption and incompetence – and that, for that matter, our whole society and culture and political system are irredeemably broken in a fundamental way. According to this worldview, things are worse than they’ve ever been – the True Way is continually being suppressed and forgotten – and the crisis is only growing worse. Every day we stray further from the truth.

But I don’t think this is right. Despite slipping backwards at times (sometimes significantly so), I think the overall trend for our species is to progress more and more in the right direction. The arc of humanity bends toward truth – for three simple reasons: firstly, smart people tend to be right more often; secondly, others tend to recognize who the smartest people are when they hear from them; and thirdly, they tend to pay attention to the smart people and listen to what they have to say. This isn’t always how it goes, of course – the imbalance in favor of intelligence might not even be that big – but I think that on average, there’s a reason why the foremost experts and decision-makers tend to be (and work closely alongside) people who are more intelligent than the typical schlub on the street. Overall, the ideas that come out on top tend to be the ones that are better.

On a related note, this is also why I’m wary of any ideology that calls for “tearing down the whole rotten system and starting over.” It’s true that our society has a lot of problems, and that some of these are very big, very serious problems. But we’ve also got a lot of good things going for us – a lot of social norms, institutions, and practices that really do make our lives better in vital ways, and that are crucial to preserve. (If you don’t believe it, try visiting a Third World country and comparing their institutions to our own.) We have to be extremely careful, then, not to throw the baby out with the bathwater when considering radical changes to the system. As Alexander warns, “There are many more ways to break systems than to improve them.” So although it may be easy to think that you’ve come up with some utopian solution that could solve all of our social ills by tearing down the current system, it’s worth recalling the idea of Chesterton’s Fence mentioned earlier. In all likelihood, the reason your brilliant idea hasn’t been implemented is not that nobody’s ever thought of it before, but that there’s a bull hidden behind the fence you want to tear down, and that overturning the current system would create more problems than it solves. Alexander continues:

Since [studying Marx], one of the central principles behind my philosophy has been “Don’t destroy all existing systems and hope [that some invisible force of goodness will just miraculously make] everything work out”. Systems are hard. Institutions are hard. If your goal is to replace the current systems with better ones, then destroying the current system is 1% of the work, and building the better ones is 99% of it. Throughout history, dozens of movements have doomed entire civilizations by focusing on the “destroying the current system” step and expecting the “build a better one” step to happen on its own. That never works.

And Pinker adds:

[According to the worldview of thinkers like Edmund Burke,] however imperfect society may be, we should measure it against the cruelty and deprivation of the actual past, not the harmony and affluence of an imagined future. We are fortunate enough to live in a society that more or less works, and our first priority should be not to screw it up, because human nature always leaves us teetering on the brink of barbarism. And since no one is smart enough to predict the behavior of a single human being, let alone millions of them interacting in a society, we should distrust any formula for changing society from the top down, because it is likely to have unintended consequences that are worse than the problems it was designed to fix. The best we can hope for are incremental changes that are continuously adjusted according to feedback about the sum of their good and bad consequences. […] In Burke’s famous words, written in the aftermath of the French Revolution:

[One] should approach to the faults of the state as to the wounds of a father, with pious awe and trembling solicitude. By this wise prejudice we are taught to look with horror on those children of their country who are prompt rashly to hack that aged parent in pieces, and put him into the kettle of magicians, in hopes that by their poisonous weeds, and wild incantations, they may regenerate the paternal constitution, and renovate their father’s life.

I think this is a really important insight; being too cavalier with systems that affect millions of people can lead to disaster. On a scale from “gradualist” to “revolutionary,” I tend to fall squarely on the gradualist side of the spectrum most of the time.

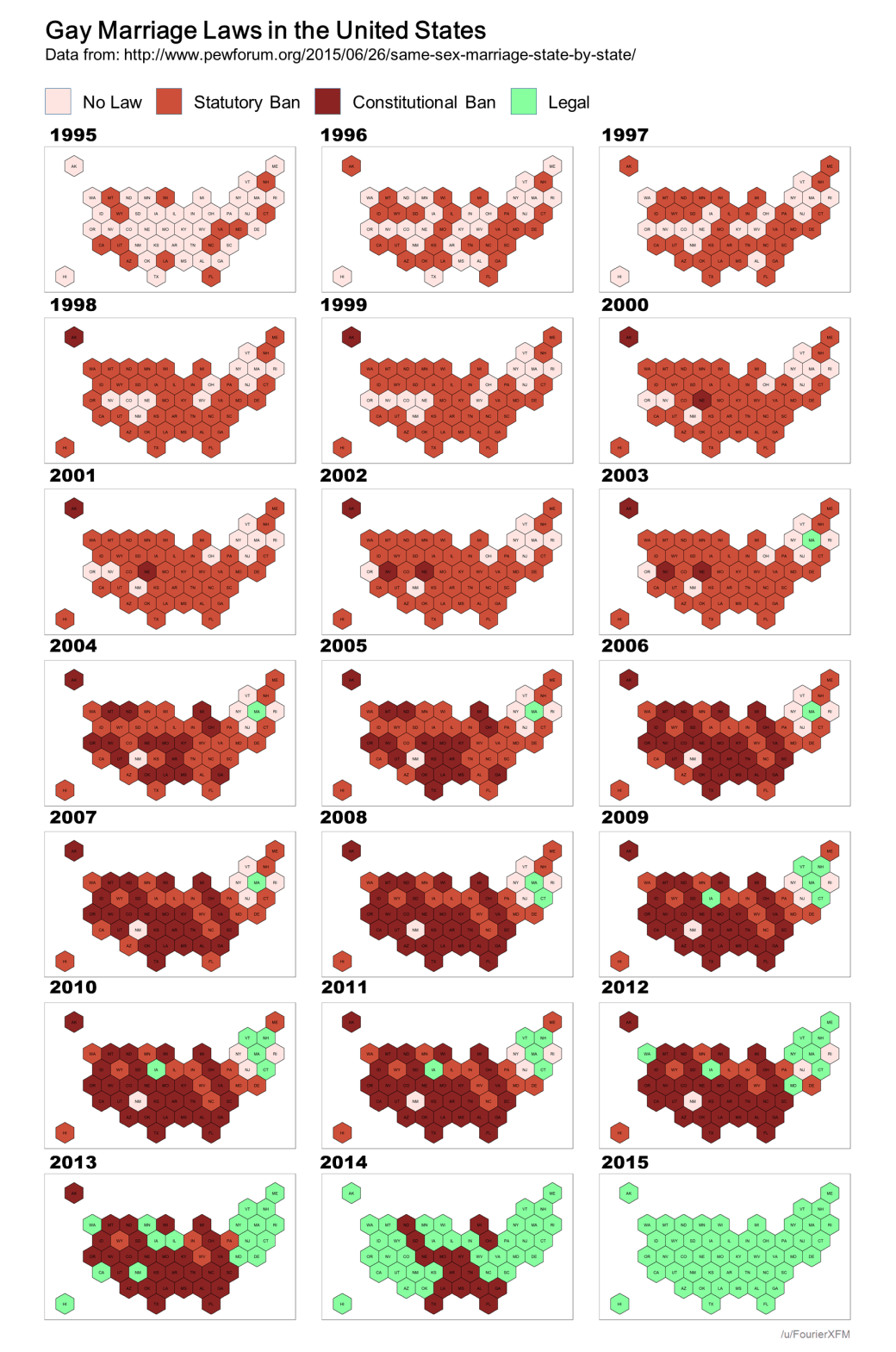

Having said all this, though, I should also point out that it’s possible to take this kind of prudent conservatism too far; if you’re too scared of upsetting the balance of the status quo, you may end up refusing to ever attempt to improve anything at all. It’s true that expert consensus is almost always a better indicator of truth than one particular person’s individual views – and it’s true that trying to make radical transformations to society will almost always have unforeseen negative consequences that outweigh the positive ones – but there’s also a reason why I include the word “almost” in both of those statements. Every now and then, the conventional wisdom really is completely off base, and every now and then there really is an opportunity to improve things that hasn’t been implemented yet. If you traveled back to the 1700s, for instance, the conventional wisdom would have been that it was okay to own slaves – and anyone arguing that black people should not only be free, but should have full equality under the law, would have been considered a fringe radical. Even as recently as a few decades ago, if you were trying to argue for gay rights, the only way to remain within the Overton Window of respectable mainstream opinion would have been to hedge your position and include a bunch of caveats clarifying that you weren’t necessarily advocating for anything as extreme and disruptive as full marriage rights (Heaven forbid), just that you wanted to reduce the severity of the anti-sodomy laws. In retrospect, of course, we can see that in both of those cases the conventional wisdom of the time was simply wrong; the Overton Window was far from where it should have been. 
Taking the outside view of this fact, then, what makes us think that we just happen to have been born into the exact point in human history where, for the first time ever, there are no such inefficiencies or failures that could be improved upon, and everything is working exactly as well as it possibly could? Are we really to believe that all the good ideas have been had already?

The efficient market hypothesis is a powerful explanatory tool for describing ideal markets – and it can be tempting to want to generalize it to encompass the totality of human experience. (Call it the “efficient world hypothesis.”) But to say that everything is working as well as it possibly can and that there’s no room for useful new ideas would be, in my opinion, naïve to say the least. As Alexander explains in his discussion of Yudkowsky’s writings on the subject:

Go too far with this kind of logic, and you start accidentally proving that nothing can be bad anywhere.

Suppose you thought that modern science was broken, with scientists and grantmakers doing a bad job of focusing their discoveries on truly interesting and important things. But if this were true, then you (or anyone else with a little money) could set up a non-broken science, make many more discoveries than everyone else, get more Nobel Prizes, earn more money from all your patents and inventions, and eventually become so prestigious and rich that everyone else admits you were right and switches to doing science your way. There are dozens of government bodies, private institutions, and universities that could do this kind of thing if they wanted. But none of them have. So “science is broken” seems like the same kind of statement as “a $20 bill has been on the floor of Grand Central Station for a week and nobody has picked it up”. Therefore, modern science isn’t broken.

Or: suppose you thought that health care is inefficient and costs way too much. But if this were true, some entrepreneur could start a new hospital / clinic / whatever that delivered health care at lower prices and with higher profit margins. All the sick people would go to them, they would make lots of money, investors would trip over each other to fund their expansion into new markets, and eventually they would take over health care and be super rich. So “health care is inefficient and overpriced” seems like the same kind of statement as “a $20 bill has been on the floor of Grand Central Station for a week and nobody has picked it up.” Therefore, health care isn’t inefficient or overpriced.

Or: suppose you think that US cities don’t have good mass transit. But if lots of people want better mass transit and are willing to pay for it, this is a great money-making opportunity. Entrepreneurs are pretty smart, so they would notice this money-making opportunity, raise some funds from equally-observant venture capitalists, make a better mass transit system, and get really rich off of all the tickets. But nobody has done this. So “US cities don’t have good mass transit” seems like the same kind of statement as “a $20 bill has been on the floor of Grand Central Station for a week and nobody has picked it up.” Therefore, US cities have good mass transit, or at least the best mass transit that’s economically viable right now.

This proof of God’s omnibenevolence is followed by Eliezer’s observations that the world seems full of evil. For example:

Eliezer’s wife Brienne had Seasonal Affective Disorder. The consensus treatment for SAD is “light boxes”, very bright lamps that mimic sunshine and make winter feel more like summer. Brienne tried some of these and they didn’t work; her seasonal depression got so bad that she had to move to the Southern Hemisphere three months of every year just to stay functional. No doctor had any good ideas about what to do at this point. Eliezer did some digging, found that existing light boxes were still way less bright than the sun, and jury-rigged a much brighter version. This brighter light box cured Brienne’s depression when the conventional treatment had failed. Since Eliezer, a random layperson, was able to come up with a better SAD cure after a few minutes of thinking than the establishment was recommending to him, this seems kind of like the relevant research community leaving a $20 bill on the ground in Grand Central.

Eliezer spent a few years criticizing the Bank of Japan’s macroeconomic policies, which he (and many others) thought were stupid and costing Japan trillions of dollars in lost economic growth. A friend told Eliezer that the professionals at the Bank surely knew more than he did. But after a few years, the Bank of Japan switched policies, the Japanese economy instantly improved, and now the consensus position is that the original policies were deeply flawed in exactly the way Eliezer and others thought they were. Doesn’t that mean Japan left a trillion-dollar bill on the ground by refusing to implement policies that even an amateur could see were correct?

And finally:

For our central example, we’ll be using the United States medical system, which is, so far as I know, the most broken system that still works ever recorded in human history. If you were reading about something in 19th-century France which was as broken as US healthcare, you wouldn’t expect to find that it went on working when overloaded with a sufficiently vast amount of money. You would expect it to just not work at all.

In previous years, I would use the case of central-line infections as my go-to example of medical inadequacy. Central-line infections, in the US alone, killed 60,000 patients per year, and infected an additional 200,000 patients at an average treatment cost of $50,000/patient.

Central-line infections were also known to decrease by 50% or more if you enforced a five-item checklist that included items like “wash your hands before touching the line.”

Robin Hanson has old Overcoming Bias blog posts on that untaken, low-hanging fruit. But I discovered while re-Googling in 2015 that wider adoption of hand-washing and similar precautions are now finally beginning to occur, after many years – with an associated 43% nationwide decrease in central-line infections. After partial adoption.

Since he doesn’t want to focus on a partly-solved problem, he continues to the case of infant parenteral nutrition. Some babies have malformed digestive systems and need to have nutrient fluid pumped directly into their veins. The nutrient fluid formula used in the US has the wrong kinds of lipids in it, and about a third of babies who get it die of brain or liver damage. We’ve known for decades that the nutrient fluid formula has the wrong kind of lipids. We know the right kind of lipids and they’re incredibly cheap and there is no reason at all that we couldn’t put them in the nutrient fluid formula. We’ve done a bunch of studies showing that when babies get the right nutrient fluid formula, the 33% death rate disappears. But the only FDA-approved nutrient fluid formula is the one with the wrong lipids, so we just keep giving it to babies, and they just keep dying. Grant that the FDA is terrible and ruins everything, but over several decades of knowing about this problem and watching the dead babies pile up, shouldn’t somebody have done something to make this system work better?

We’ve got a proof that everything should be perfect all the time, and a reality in which a bunch of babies keep dying even though we know exactly how to save them for no extra cost.

It’s true that in most cases, the system tends to be the way it is for a reason; if you tried to change it, you’d quickly realize why it had been set up the way it was in the first place, and you’d want to change it back. Coming up with a revolutionary new idea that could legitimately change the world for the better is rare – like discovering a proverbial million-dollar bill on the ground that nobody’s picked up yet – and if you think you’ve discovered one, your default reaction should be to treat that idea with caution, even outright suspicion. But just because the odds of hitting the jackpot are small doesn’t mean that no one can ever do so – people win the lottery every day. And the same is true for ideas; even if the odds of coming up with a world-changing idea are tiny, every good idea that has ever emerged throughout history had to have someone who thought of it first. So if you should happen to encounter such a case yourself, you shouldn’t just automatically dismiss it as too improbable to exist – that would be like saying there can be no such thing as lottery winners. You should take it as seriously as the potential payoff demands.

There’s a school of thought that tries to defend the efficient world hypothesis by saying that even though our society is markedly different now from how it was during the days of feudalism, monarchy, and so forth, that’s not because those systems were anything less than optimal at the time – it’s just that our more advanced levels of technical/educational/social attainment allow us to implement systems nowadays that wouldn’t have been workable back then. In other words, feudalism and monarchy really were the best possible systems for the people living in those eras, because their low level of development couldn’t have sustained anything better. And similarly, countries living under totalitarian regimes today essentially have no better option, because if their societies were capable of handling democracy, they’d already be democratic. According to this view, the status quo is always a product of societies settling naturally into their optimal equilibrium; in every time and every place, the way things work is a close approximation of the best way it’s possible for them to work, given the level of development in that society.

Personally, I don’t buy this view (for a lot of reasons). But even if it were actually true – even if the status quo were always optimal, given the specific circumstances of a particular society – that still wouldn’t suggest that any new idea to change the system must therefore automatically be wrong. After all, the circumstances of a society are always shifting, and it’s always possible for the ideological winds to shift in such a way that a society may finally become ready to accept a new idea that it wasn’t previously ready for. The issue of gay marriage is a classic example where the national consensus went from “not even a debate” to “absolutely a debate” to “not even a debate” again – but this time in the other direction – in just a few short years. An idea that would have seemed like a pipe dream a mere decade or two earlier finally saw its time come – and that wouldn’t have happened if everybody had believed that improving the status quo was impossible. As Yudkowsky says:

Not every change is an improvement, but every improvement is a change […] You can’t do anything better unless you can manage to do it differently.

What’s more, once a major change is finally made, it’s often hard to remember why it ever seemed like such a big deal in the first place. Ideas like gay marriage – not to mention ideas like desegregation and even democracy itself – had a lot of people up in arms when they were first proposed, raising all kinds of uproar about the downfall of civilization (and admittedly, there are still people who feel this way about them now). But for most modern onlookers, those objections now seem absurd. We implemented these supposedly radical ideas, and the world kept turning just as it had before. Things improved considerably, in fact, and we’ve reached the point where these once-radical ideas are considered part of the bare minimum standard for normality. As Richard Dawkins puts it: “Yesterday’s dangerous idea is today’s orthodoxy and tomorrow’s cliché.”

So when it comes to considering outlandish new ideas, we can’t be afraid to explore new ideological territory, to speculate, to experiment. We should keep our experimentation within rational limits, of course; we shouldn’t want to blow up the entire system just for the sake of shaking things up and trying something new. But we can’t be so afraid of disrupting the parts of the system that are working well that we refuse to even consider improving the parts that aren’t. (After all, I doubt the people who were being forced to live as second-class citizens under the gay marriage bans and segregation laws would have agreed that the system was “working fine” for them.) Evolution requires variation – so if we want to evolve as a society, we have to be willing to try out different ideas. The only way to “do what works” is to first see what works.

Again, this doesn’t mean that you should always just assume that the most counterintuitive position is the best one. As satisfying as it might be to feel like you’ve uniquely figured out some secret truth that nobody else has, knee-jerk contrarianism isn’t any better than knee-jerk conformity – because the counterintuitive position is, almost by definition, more likely to be the wrong one. Alexander illustrates this point with a couple of examples:

Ask any five year old child, and [they] can tell you that death is bad. Death is bad because it kills you. There is nothing subtle about it, and there does not need to be. Death universally seems bad to pretty much everyone on first analysis, and what it seems, it is.

But as has been pointed out, along with the gigantic cost, death does have a few small benefits. It lowers overpopulation, it allows the new generation to develop free from interference by their elders, it provides motivation to get things done quickly. Precisely because these benefits are so much smaller than the cost, they are hard to notice. It takes a particularly subtle and clever mind to think them up. Any idiot can tell you why death is bad, but it takes a very particular sort of idiot to believe that death might be good.

So pointing out this contrarian position, that death has some benefits, is potentially a signal of high intelligence. It is not a very reliable signal, because once the first person brings it up everyone can just copy it, but it is a cheap signal. And to the sort of person who might not be clever enough to come up with the benefits of death themselves, and only notices that wise people seem to mention death can have benefits, it might seem super extra wise to say death has lots and lots of great benefits, and is really quite a good thing, and if other people should protest that death is bad, well, that’s an opinion a five year old child could come up with, and so clearly that person is no smarter than a five year old child. Thus Eliezer’s title for this mentality, “Pretending To Be Wise”.

If dwelling on the benefits of a great evil is not your thing, you can also pretend to be wise by dwelling on the costs of a great good. All things considered, modern industrial civilization – with its advanced technology, its high standard of living, and its lack of typhoid fever – is pretty neat. But modern industrial civilization also has many costs: alienation from nature, strains on the traditional family, the anonymity of big city life, pollution and overcrowding. These are real costs, and they are certainly worth taking seriously; nevertheless, the crowds of emigrants trying to get from the Third World to the First, and the lack of any crowd in the opposite direction, suggest the benefits outweigh the costs. But in my estimation – and speak up if you disagree – people spend a lot more time dwelling on the negatives than on the positives, and most people I meet coming back from a Third World country have to talk about how much more authentic their way of life is and how much we could learn from them. This sort of talk sounds Wise, whereas talk about how nice it is to have buses that don’t break down every half mile sounds trivial and selfish.

So my hypothesis is that if a certain side of an issue has very obvious points in support of it, and the other side of an issue relies on much more subtle points that the average person might not be expected to grasp, then adopting the second side of the issue will become a signal for intelligence, even if that side of the argument is wrong.

That’s why it’s worth bearing in mind: Just because an idea sounds smarter or more sophisticated or more complex than the boring old mainstream view doesn’t mean it’s actually more accurate or more useful. Sometimes it’s just fool’s gold.

So all right then, you might say, that’s all well and good – but how can we figure out which areas really are the ones where the mainstream consensus is most likely to be wrong? Well, it’s not always easy. As Klosterman writes:

If I’m wrong about something specific, it’s (usually) my own fault, and someone else is (usually, but not totally) right.

But what about the things we’re all wrong about?

What about ideas that are so accepted and internalized that we’re not even in a position to question their fallibility? These are ideas so ingrained in the collective consciousness that it seems foolhardy to even wonder if they’re potentially untrue. Sometimes these seem like questions only a child would ask, since children aren’t paralyzed by the pressures of consensus and common sense. It’s a dissonance that creates the most unavoidable of intellectual paradoxes: When you ask smart people if they believe there are major ideas currently accepted by the culture at large that will eventually be proven false, they will say, “Well, of course. There must be. That phenomenon has been experienced by every generation who’s ever lived, since the dawn of human history.” Yet offer those same people a laundry list of contemporary ideas that might fit that description, and they’ll be tempted to reject them all.

Still, if you can put yourself in the right frame of mind, it can be easier to notice flaws in the conventional wisdom. The subtitle of Klosterman’s book – “Thinking About the Present As If It Were the Past” – offers one such helpful mentality. If you can put yourself outside your current social context and try to look at things through the eyes of an outsider, you may start to notice that some of the things you’ve always taken for granted don’t actually make that much sense when you have to justify them from first principles.

Graham offers similar advice; if you notice that certain ideas seem to dominate the popular consensus not because there are good justifications for them, but simply because it would be considered weird or shameful not to believe them, it may be a red flag that these ideas can’t actually hold up on their own strength, but are merely the product of the intellectual fashions of the day – and are therefore worth probing and questioning further:

Have you ever seen an old photo of yourself and been embarrassed at the way you looked? Did we actually dress like that? We did. And we had no idea how silly we looked. It’s the nature of fashion to be invisible, in the same way the movement of the earth is invisible to all of us riding on it.

What scares me is that there are moral fashions too. They’re just as arbitrary, and just as invisible to most people. But they’re much more dangerous. Fashion is mistaken for good design; moral fashion is mistaken for good. Dressing oddly gets you laughed at. Violating moral fashions can get you fired, ostracized, imprisoned, or even killed.

If you could travel back in a time machine, one thing would be true no matter where you went: you’d have to watch what you said. Opinions we consider harmless could have gotten you in big trouble. I’ve already said at least one thing that would have gotten me in big trouble in most of Europe in the seventeenth century, and did get Galileo in big trouble when he said it – that the earth moves.

It seems to be a constant throughout history: In every period, people believed things that were just ridiculous, and believed them so strongly that you would have gotten in terrible trouble for saying otherwise.

Is our time any different? To anyone who has read any amount of history, the answer is almost certainly no. It would be a remarkable coincidence if ours were the first era to get everything just right.

It’s tantalizing to think we believe things that people in the future will find ridiculous. What would someone coming back to visit us in a time machine have to be careful not to say? That’s what I want to study here.

[…]

Let’s start with a test: Do you have any opinions that you would be reluctant to express in front of a group of your peers?

If the answer is no, you might want to stop and think about that. If everything you believe is something you’re supposed to believe, could that possibly be a coincidence? Odds are it isn’t. Odds are you just think what you’re told.

The other alternative would be that you independently considered every question and came up with the exact same answers that are now considered acceptable. That seems unlikely, because you’d also have to make the same mistakes. Mapmakers deliberately put slight mistakes in their maps so they can tell when someone copies them. If another map has the same mistake, that’s very convincing evidence.

Like every other era in history, our moral map almost certainly contains a few mistakes. And anyone who makes the same mistakes probably didn’t do it by accident. It would be like someone claiming they had independently decided in 1972 that bell-bottom jeans were a good idea.

If you believe everything you’re supposed to now, how can you be sure you wouldn’t also have believed everything you were supposed to if you had grown up among the plantation owners of the pre-Civil War South, or in Germany in the 1930s – or among the Mongols in 1200, for that matter? Odds are you would have.

Back in the era of terms like “well-adjusted,” the idea seemed to be that there was something wrong with you if you thought things you didn’t dare say out loud. This seems backward. Almost certainly, there is something wrong with you if you don’t think things you don’t dare say out loud.

[…]

Great work tends to grow out of ideas that others have overlooked, and no idea is so overlooked as one that’s unthinkable. Natural selection, for example. It’s so simple. Why didn’t anyone think of it before? Well, that is all too obvious. Darwin himself was careful to tiptoe around the implications of his theory. He wanted to spend his time thinking about biology, not arguing with people who accused him of being an atheist.

In the sciences, especially, it’s a great advantage to be able to question assumptions. The m.o. of scientists, or at least of the good ones, is precisely that: look for places where conventional wisdom is broken, and then try to pry apart the cracks and see what’s underneath. That’s where new theories come from.

A good scientist, in other words, does not merely ignore conventional wisdom, but makes a special effort to break it. Scientists go looking for trouble. This should be the m.o. of any scholar, but scientists seem much more willing to look under rocks.

Of course, actively going against the grain of peer pressure doesn’t always come naturally; seeking out ideas that you know everybody thinks are wrong can feel, well, wrong. But one way to combat your instinctive inclination to want to follow the crowd is to imagine how you might feel differently if the crowd’s stance were different. Alexander suggests imagining which of your beliefs might change if you found out that what you thought was the popular consensus was actually an illusion (created by alien experimenters or something) and the real consensus was the complete opposite view:

[There’s] something I said a while back on Twitter:

[…]

I feel like this is a good thought experiment. Which beliefs of yours would survive that knowledge, be so strong that you would tell the experimenter that you are right or they are wrong, or make you start thinking that it’s all part of a meta-experiment like in [this] story? Which ones would you start to doubt in ways that you might not have thought of back when they were common? Which, if any, would you say “Yeah, I knew it all along, I guess I was just too scared to admit it”?

I’m thinking here of antebellum Southerners, let’s say early 1800s. Their society is built around slavery. There are a couple of abolitionists around, but not many, and none who can force anyone to listen to them. Pretty much everyone around them says slavery is okay, the books they read from the past are all about Romans or Israelites who thought (rather different forms of) slavery were okay, and they have heard a lot of plausible-sounding arguments justifying slavery.

Now bring them forward to the present day. Tell them “Right now in the present day pretty much every single person believes that slavery is morally wrong. No one would justify it. Here, come out of the laboratory and spend a few years living in our slave-free society.”

I don’t know if the Southerner would learn a whole lot of new facts during this period. They might learn that black people could be pretty capable and intelligent, but Frederick Douglass was a person, everyone knew he was smart, that didn’t change anyone’s mind. Yet even without learning many new facts, I can’t imagine he would stay pro-slavery very long.

And I wonder whether this is purely a conformity thing, and upon being returned to the antebellum South he would start conforming with them again, or whether it is a one-directional effect that primes your thoughts to go in the direction of the truth and allows you to see new valid arguments, and that upon going back to the South he would be a little wiser than his countrymen.

And I also wonder whether a sufficiently smart Southerner could do all this via a thought experiment, say “I think slavery is pretty okay now, but imagine I went to a world where everyone was absolutely certain it was terrible, how bad would I feel about it?” and get all the benefits of spending a while in our world and going through all that moral reflection without ever actually leaving the antebellum South. And if this would be a more powerful intuition pump than just asking him to sit down and think about slavery for a few hours.

This is a pretty powerful ethical test for me. I imagine waking up in that Matrix pod and being told that no one in the real world believes in abortion, that pro-choice is obviously horrible, that all my fellow experimental subjects saw through it, that as far as they can tell I’m just a psychopath. And I feel like I would still argue “No, actually, I think you guys are wrong.” (but, uh, your mileage may vary)

If it was vegetarianism – if they said no one in the real world ate meat or had tried to justify factory farming, and every single one of my co-participants had become vegan animal rights activists – I don’t think there’s a lot I could say to them. “Sorry, I have an intense disgust reaction to all vegetables which has thwarted all of my attempts at vegetarianism?” “Yeah, we know, we put that in there to make it a hard choice.”

There are some issues where I could imagine it going either way. If the alien simulators were conservative, I could imagine exactly the way in which I would feel really stupid for having ever believed in liberalism. And if the alien simulators were liberal, I could imagine exactly how it would feel to get embarrassed for ever having flirted with conservative ideas. I don’t think that’s necessarily a flaw in the thought experiment. Both of those feelings are useful to me.

There’s a similar thought exercise you can do when assessing the value of a particular idea or cultural practice, where you imagine an alternate version of history in which the idea or practice in question didn’t exist and nobody had ever even considered it before, and ask: Would it still make sense to introduce the idea into the world and start applying it in the modern day? For instance, if the practice of spanking children had never existed and somebody tried to introduce it today, would the idea still fly? How about the concept of beauty pageants? What about boxing matches? If it doesn’t seem like the idea would be widely accepted if it weren’t already a part of the status quo, it might be a good indication that the only reason the idea is widely accepted in the real world is that it’s already a part of the status quo – not necessarily because it’s actually optimal to have it around. The status quo has its own kind of inertia that makes people want to resist doing things differently from how they’re already being done; again, it goes back to the mentality of “Why change something that’s already working fine?” But you have to be able to avoid falling into the trap of this status quo bias as best you can, because otherwise the results can be harmful or simply embarrassing, as Alexander points out:

Alex Tabarrok beat me to the essay on Oregon’s self-service gas laws that I wanted to write.

Oregon is one of two US states that bans self-service gas stations. Recently, they passed a law relaxing this restriction – self-service is permissible in some rural counties during odd hours of the night. Outraged Oregonians took to social media to protest that self-service was unsafe, that it would destroy jobs, that breathing in gas fumes would kill people, that gas pumping had to be performed by properly credentialed experts – seemingly unaware that most of the rest of the country and the world does it without a second thought.

…well, sort of. All the posts I’ve seen about it show the same three Facebook comments. So at least three Oregonians are outraged. I don’t know about the rest.

But whether it’s true or not, it sure makes a great metaphor. Tabarrok plays it for all it’s worth:

Most of the rest of the America – where people pump their own gas everyday without a second thought – is having a good laugh at Oregon’s expense. But I am not here to laugh because in every state but one where you can pump your own gas you can’t open a barbershop without a license. A license to cut hair! Ridiculous. I hope people in Alabama are laughing at the rest of America. Or how about a license to be a manicurist? Go ahead Connecticut, laugh at the other states while you get your nails done. Buy contact lens without a prescription? You have the right to smirk British Columbia!

All of the Oregonian complaints about non-professionals pumping gas – “only qualified people should perform this service”, “it’s dangerous” and “what about the jobs” – are familiar from every other state, only applied to different services.

Since reading Tabarrok’s post, I’ve been trying to think of more examples of this sort of thing, especially in medicine. There are way too many discrepancies in approved medications between countries to discuss every one of them, but did you know melatonin is banned in most of Europe? (Europeans: did you know melatonin is sold like candy in the United States?) Did you know most European countries have no such thing as “medical school”, but just have college students major in medicine, and then become doctors once they graduate from college? (Europeans: did you know Americans have to major in some random subject in college, and then go to a separate place called “medical school” for four years to even start learning medicine?) Did you know that in Puerto Rico, you can just walk into a pharmacy and get any non-scheduled drug you want without a doctor’s prescription? (source: my father; I have never heard anyone else talk about this, and nobody else even seems to think it is interesting enough to be worth noting).

And I want to mock the people who are doing this the “wrong” way – but can I really be sure? If each of these things decreased the death rate 1%, maybe it would be worth it. But since nobody notices 1% differences in death rates unless they do really good studies, it would just look like some state banning things for no reason, and everyone else laughing at them.

Actually, how sure are we that Oregon was wrong to ban self-service gas stations? How do disabled people pump their gas in most of the country? And is there some kind of negative effect from breathing in gas fumes? I have never looked into any of this.

Maybe the real lesson of Oregon is to demonstrate a sort of adjustment to prevailing conditions. There’s an old saying: “Everyone driving faster than you is a maniac; anyone driving slower than you is a moron”. In the same way, no matter what the current level of regulation is, removing any regulation will feel like inviting catastrophe, and adding any regulation will feel like choking on red tape.

Except it’s broader than regulation. Scientific American recently ran an article on how some far-off tribes barely talk to their children at all. New York Times recently claimed that “in the early 20th century, some doctors considered intellectual stimulation so detrimental to infants that they routinely advised young mothers to avoid it”. And our own age’s prevailing wisdom of “make sure your baby has listened to all Beethoven symphonies by age 3 months or she’ll never get into college” is based on equally flimsy evidence, yet somehow it still feels important to me. If I don’t make my kids listen to Beethoven, it will feel like some risky act of defiance; if I don’t take the early 20th century advice to avoid overstimulating them, it will feel more like I’m dismissing people who have been rightly tossed on the dungheap of history.

And then there’s the discussion from the recent discussion of Madness and Civilization about how 18th century doctors thought hot drinks will destroy masculinity and ruin society. Nothing that’s happened since has really disproved this – indeed, a graph of hot drink consumption, decline of masculinity, and ruinedness of society would probably show a pretty high correlation – it’s just somehow gotten tossed in the bin marked “ridiculous” instead of the bin marked “things we have to worry about”.

So maybe the scary thing about Oregon is how strongly we rely on intuitions about absurdity. If something doesn’t immediately strike us as absurd, then we have to go through the same plodding motions of debate that we do with everything else – and over short time scales, debate is interminable and doesn’t work. Having a notion strike us as absurd short-circuits that and gets the job done – but the Oregon/everyone-else divide shows that intuitions about absurdity are artificial and don’t even survive state borders, let alone genuinely different cultures and value systems.

And maybe this is scarier than usual because I just read Should Schools Ban Kids From Having Best Friends? I assume this is horrendously exaggerated and taken out of context and all the usual things that we’ve learned to expect from news stories, but it got me thinking. Right now enough people are outraged at this idea that I assume it’ll be hard for it to spread too far – and even if it does spread, we can at least feel okay knowing that parents and mentors and other people in society will maintain a belief in friendship and correct kids if schools go wrong. But what if it catches on? What if, twenty years from now, the idea of banning kids from having best friends has stopped generating an intuition of absurdity? Then if we want kids to still be allowed to have best friends, we’re going to have to (God help us) debate it. Have you seen the way our society debates things?

And I know some people see this and say it proves rational debate is useless and we should stop worrying about it. But trusting whatever irrational forces determines what sounds absurd or not doesn’t sound so attractive either. I think about it, and I want to encourage people to be really, really good at rational debate, just in case something terrible loses its protective coating of absurdity, or something absolutely necessary gains it, and our ability to actually judge whether things are good or bad and convince other people of it is all that stands between us and disaster.

And, uh, maybe the people who say kids shouldn’t be allowed to have best friends are right. I admit they’ve thought about this a lot longer than I have. My problem isn’t that someone thinks this. It’s that so much – even the legitimacy of friendship itself – can now depend on our culture’s explicit rationality. And our culture’s explicit rationality is so bad. And that the only alternative to dragging everything before the court of explicit rationality is some version of Chesterton’s Fence, ie the very heuristic telling Oregonians to defend full-service gas stations to the death. There is no royal road.

Maybe this is a good time to get on our chronophones with Oregon (or more prosaically, use the Outside View). Figure out what cognitive strategies you would recommend to an Oregonian trying to evaluate self-service gas stations. Then try to use those same strategies yourself. And try to imagine the level of careful thinking and willingness to question the status quo it would take to make an Oregonian get the right answer here, and be skeptical of any conclusions you’ve arrived at with any less.

Status quo bias can be powerful. A lot of times, you won’t be able to get someone to break their comfortable patterns of thought and behavior unless there’s some kind of crisis – some kind of ideological jolt to their system that serves as a proverbial wake-up call. But that’s why it’s so important to always be exploring weird and exotic new ideas – so that if such a wake-up call ever does occur, you’ll be ready for it. Milton Friedman put it best:

There is enormous inertia – a tyranny of the status quo – in private and especially governmental arrangements. Only a crisis – actual or perceived – produces real change. When that crisis occurs, the actions that are taken depend on the ideas that are lying around. That, I believe, is our basic function: to develop alternatives to existing policies, to keep them alive and available until the politically impossible becomes politically inevitable.

Even if the system seems to be functioning fairly well at the moment, it’s always a good idea to explore alternative possibilities, because there may come a point in the near future when “good enough” turns out to no longer be good enough. Circumstances are always changing, and what works best now may not always be what works best in the future; so we should always be looking for opportunities to improve our ideologies and update them as new information becomes available.