
One final note here before I wrap up: When I say everything we do ought to be aligned with the system I’ve been describing, I mean everything, including even those decisions that we wouldn’t usually regard as having anything to do with morality. You might intuitively think, for instance, that certain choices you make which apply solely to yourself, and to no one else, might be outside the scope of moral consideration. But as I mentioned before, your precommitment to maximize global utility also includes your own utility function – so even if you’re the only one who stands to gain or lose from a particular choice, you’re still obligated to maximize global utility by steering the universe onto the timeline-branch that gives you the most utility. That might seem like a trivial point, but what it means is that this whole framework can function not only as a moral philosophy but also as a universal decision-making guide for any dilemma you might encounter – and that matters not only for everyday life but potentially for deeper theoretical areas as well. There are certain problems in decision theory, for instance, like Newcomb’s Paradox and Parfit’s Hitchhiker, which have become famous for the challenges they pose to standard theories of self-interest – specifically the way they seem to punish traditional “rational” decision-making. But under the framework I’ve been laying out here, it becomes possible to successfully navigate these dilemmas, because by having already precommitted yourself to maximizing your overall utility – even if it means acting in a way that would seem to be less than optimal at the object level – you enable yourself to successfully avoid the lower-utility branches of the timeline.

(If you’re not really into decision theory, by the way, I apologize for what will probably seem like a weird digression right here at the end – but if you are at least a little bit curious about this kind of thing, I hope you’ll understand why I left this part in.)

Consider Parfit’s Hitchhiker. If you haven’t heard of this thought experiment before, it goes like this: Imagine that you’re stranded out in the middle of a desert somewhere, about to die of thirst, when someone pulls up to you in their car and makes you an offer: “I’ll drive you into town,” they say, “but only if you promise to give me $100 from an ATM when we get there.” Let’s say for the sake of simplicity that this driver won’t actually derive any positive or negative utility from the situation regardless of what occurs (maybe they’re a robot or something), so all that’s at stake is your own utility (which you always want to maximize). But let’s also stipulate that the driver somehow has the ability to perfectly read your facial expressions and tone of voice (or maybe you’re just unable to lie convincingly), so the driver will know whether or not you’re making a false promise. What do you do here? Obviously, you’d like to tell the driver, “Yes, I’ll pay you the $100” – but you also know that once you actually get into town, the outcome that will give you the most utility will be to break your promise and not pay the driver after all. Knowing this, then, there will be no way for you to honestly agree to the driver’s terms – so even if you answer yes, the driver will know you’re lying, and will drive away, leaving you to die.

Is this just a hopeless situation for you? It might seem that way from a traditional “rational self-interest” perspective. But once you look at it instead from the perspective of the system I’ve been describing here, it’s not actually that challenging. After all, if you’re thinking about the situation in terms of possible timeline-branches, it quickly becomes apparent that the potential timeline-branch in which you successfully hitch a ride without paying for it later doesn’t actually exist; there’s no way for you to leave open the possibility of later stiffing the driver, while also somehow having that fact escape the driver’s notice. (Maybe if you’d woken up to find yourself already inside the car, with the driver having already decided to give you a ride, then you could potentially get away with not paying once you got into town – but that’s not the situation you find yourself in.) The only way to successfully get a ride is to precommit yourself to paying, and to actually mean it. That’s the timeline-branch that actually gives you the greatest utility. And since you’ve already made an implicit precommitment to maximize global utility, way back in the original position before you were even born, then that’s the course of action you should follow.
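
If it helps to see this in code, here’s a minimal Python sketch of the hitchhiker’s choice. The utility numbers are hypothetical placeholders (the thought experiment only tells us you’d die without the ride and lose $100 with it), and the driver’s perfect lie detection is modeled by making the ride conditional on your actual policy, so the “ride now, stiff the driver later” branch simply never appears.

```python
# Minimal sketch of Parfit's Hitchhiker. The utility values are hypothetical
# placeholders chosen for illustration; only their ordering matters.
SURVIVAL_UTILITY = 1_000_000  # hypothetical: value of being driven to town alive
PAYMENT_COST = 100            # hypothetical: utility of the $100 you hand over
DEATH_UTILITY = 0             # hypothetical: being left in the desert


def hitchhiker_utility(will_pay_in_town: bool) -> int:
    """Utility of a policy, given a driver who reads your intentions perfectly."""
    # The driver only offers a ride to someone who will really pay, so
    # "get the ride" and "pay in town" are one and the same branch.
    # There is no branch where you ride and then keep the $100.
    if will_pay_in_town:
        return SURVIVAL_UTILITY - PAYMENT_COST
    return DEATH_UTILITY


for policy in (True, False):
    label = "precommit to paying" if policy else "plan to stiff the driver"
    print(f"{label}: {hitchhiker_utility(policy)}")

# Pick the policy (not the in-the-moment action) with the highest utility.
best_policy = max((True, False), key=hitchhiker_utility)
```

The comparison is between policies rather than between moments: judged as a policy, paying comes out far ahead.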

The same thing applies to Newcomb’s Paradox. In this thought experiment, the setup goes like this: Imagine that you’re at a carnival and you discover a mysterious-looking circus tent with a thousand people lined up to get in. You get in line as well, and when it’s finally your turn, you enter the tent to see a table with two boxes sitting on top of it – an opaque one, and a transparent one containing $1000. You’re offered the choice either to take both boxes, or to only take the opaque box. But here’s the twist: Seated at the table is an individual known as the Predictor (who might be a mad scientist or an alien superintelligence or some other such thing depending on which version of this thought experiment you like best); and this Predictor informs you that just before you entered the tent, your brain was scanned using an advanced brain-scanning device, which ascertained with utmost certainty whether you would choose to take both boxes or just take the opaque box alone. If it determined that you would take the opaque box alone, then the Predictor placed $1,000,000 inside that box before you walked into the tent; but if it determined that you would take both boxes, then the Predictor left the opaque box empty. The contents of the boxes are now set and cannot be changed; so in theory, this game seems like it should be easy to exploit. However, before you entered the tent, you watched the Predictor make the same offer to each of the other thousand people in front of you in line – and in each case, the Predictor was correct about which choice they ultimately made. That is to say, everyone who chose to take both boxes walked away with $1000, and everyone who chose to only take the opaque box walked away with $1,000,000. The Predictor’s ability to tell the future, in short, is functionally perfect.

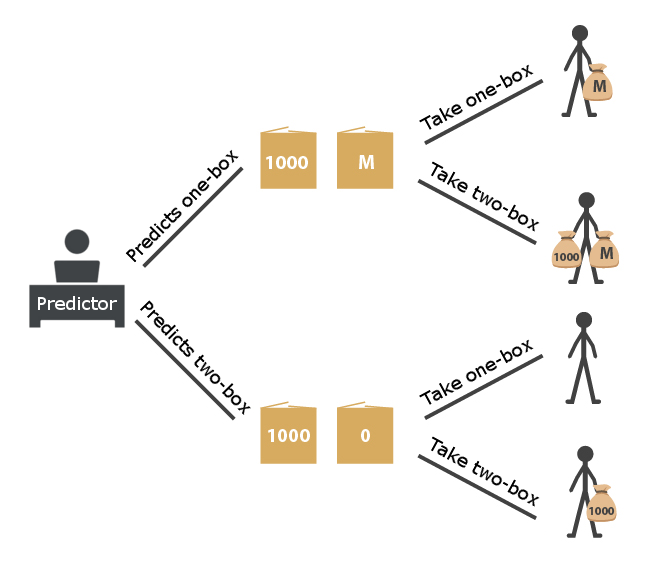

So what do you choose? On the one hand, you know that the contents of the boxes are already fixed and can’t be changed – so either the opaque box contains nothing, in which case you’d be better off taking both boxes and at least getting $1000, or the opaque box contains $1,000,000, in which case you’d still be better off taking both boxes, since it’d get you an extra $1000 on top of the $1,000,000 that’s already in the opaque box. It might seem, then, like two-boxing would be your best bet. But on the other hand, given the Predictor’s perfect record of predicting the future, you also have every reason to believe that if you take both boxes, the opaque one will be empty. So what’s the right answer? Well, once again, your decision in this scenario comes down to your ability to distinguish between which timeline-branches actually exist and which ones only seem to exist. Sure, it might be easy in theory to imagine a universe in which both boxes could be full and you could take them both – but in reality, there are only two paths that the universe might actually follow here: Either the conditions of the universe when you entered the tent (i.e. the configuration of atoms in your brain and so on) were such that they would cause you to take both boxes, in which case they would also have necessarily caused the Predictor to have left the opaque one empty, or they were such that they would cause you to only take the opaque box, in which case they would also have caused the Predictor to have filled it. In other words, the possibilities here don’t look like this:

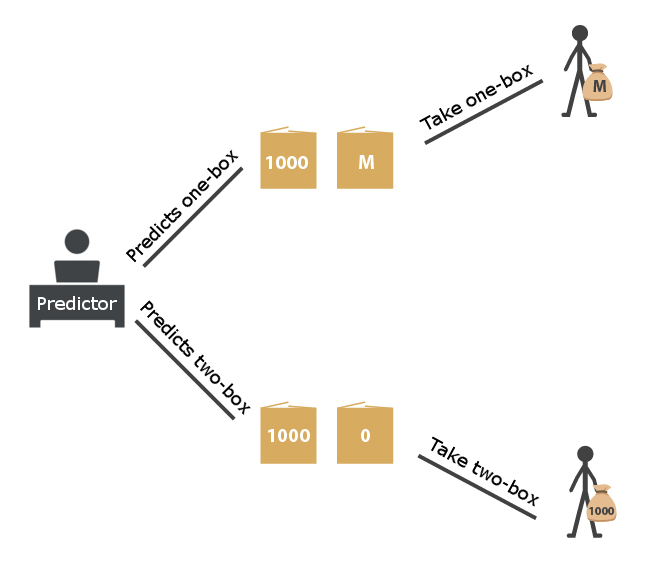

They look like this:

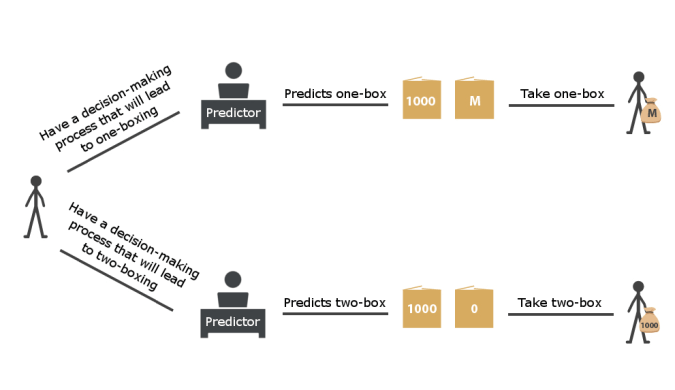

Or even more accurately, like this:

Given that reality, then, the actual best choice here is clearly to take the opaque box alone. It’s true that in doing so, you really are leaving money on the table; but the fact of the matter is that there’s no universe in which you can get the $1,000,000 without leaving the $1000 on the table – just like there was no universe in which you could have gotten a ride from the driver in the desert without paying for it later, and just like there was no universe in which Odysseus could have survived hearing the song of the Sirens while remaining unbound. (I mean, I’m sure you could imagine other creative solutions in his case, but you get what I’m saying.)

It’s like buying a $5 ticket for a $500 raffle with no other entrants: There’s one possible universe in which you buy the ticket and win the prize, for a net gain of $495; and there’s a second possible universe in which you don’t buy the ticket and don’t win anything, but at least get to keep your $5; but there is no universe in which you somehow get to keep your $5 because you didn’t buy a ticket, and yet you still somehow win the raffle and get the $500 prize money as well. The “have your cake and eat it too” option doesn’t exist here; so the only correct choice if you want to maximize your utility is to have a self-restricting precommitment in place that you won’t try to have your cake and eat it too. You have to be willing to accept the small up-front utility reduction of paying $5 for the raffle ticket (or forgoing the $1000 in the transparent box), because there’s no other way of arriving at the much larger utility boost of winning the $500 prize (or getting the $1,000,000 in the opaque box).

You might be tempted to argue that Newcomb’s Paradox is different from this raffle analogy, because your decision of whether to one-box or two-box doesn’t come until after the boxes have already been filled, so it can’t actually have any causal effect on their contents; the opaque box is already either filled or it isn’t. But that’s a misunderstanding of what decision you’re actually making here. The choice that decides the outcome of this scenario isn’t whether to take one or both boxes when they’re presented to you; that’s only a result of the actual choice, which is whether to go into the tent with the kind of brain that would be willing to subsequently forgo the $1000 or not. And that’s a choice that does have a causal effect on the boxes’ contents.
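
The raffle analogy can be made concrete with a short Python sketch that lists every branch you might imagine and then filters out the ones that can’t actually happen. The helper names (`feasible`, `net_gain`) are illustrative only; the single assumption encoded is the one from the analogy, that with no other entrants you win if and only if you buy a ticket.

```python
from itertools import product

TICKET_PRICE = 5
PRIZE = 500


def feasible(buys_ticket: bool, wins: bool) -> bool:
    # With no other entrants, buying a ticket guarantees a win,
    # and you can't win a raffle you never entered.
    return buys_ticket == wins


def net_gain(buys_ticket: bool, wins: bool) -> int:
    return (PRIZE if wins else 0) - (TICKET_PRICE if buys_ticket else 0)


imagined = list(product((True, False), repeat=2))  # every (buys, wins) combination
real = [b for b in imagined if feasible(*b)]       # branches that actually exist

imagined_best = max(imagined, key=lambda b: net_gain(*b))
real_best = max(real, key=lambda b: net_gain(*b))

print("imagined best:", imagined_best, net_gain(*imagined_best))
print("real best:", real_best, net_gain(*real_best))
```

The “imagined best” is the keep-your-$5-and-still-win branch worth $500; once impossible branches are filtered out, the best real option is buying the ticket for a net $495.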

In other words, the key variable here isn’t the object-level decision of whether you want to one-box or two-box (which, although it might feel that way from the inside, isn’t really a free choice at all); the key variable is which decision-making algorithm you want to have implemented in your brain, which itself will be what determines whether you one-box or two-box. The right algorithm, of course, is the one that precommits you to always following the timeline-branch that maximizes utility. But it’s always possible to break that precommitment and do the wrong thing, by switching to an algorithm that would ultimately opt for taking both boxes – and naturally, that will lead you onto the timeline-branch where you only walk away with $1000.

If you’re still having trouble imagining how it might be possible for the Predictor to perfectly foresee your ultimate choice – i.e. if you’re asking yourself, “Couldn’t I just plan to one-box when I enter the tent but then change my mind and take both boxes after they’re actually presented to me?” – think about it this way: Imagine a version of this scenario in which, instead of being a human, you’re actually a highly advanced AI, and the Predictor is a programmer who can simply examine your source code to see in advance exactly what your choice will be. It might feel to you from the inside like you’re free to make whichever decision strikes your fancy; but in reality, you can only choose what your programming dictates you will choose – and even if you start off planning to one-box but then change your mind and two-box, that change of mind will itself have occurred only because it was part of your code from the start. What that means, then, is that the question you should really be focused on here isn’t which choice you’ll make in the tent – because that’s entirely determined by your code. The question you should be asking is which code you want to have making your decisions in the first place. In other words, the key question is: If you could modify your own programming, which algorithm would it be best to implement in your own robot brain, thereby committing all of your future decisions to it? Obviously, it would be the one that would precommit you to following whichever timeline-branch produced the greatest utility.
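
Here’s a minimal Python sketch of the AI version of the scenario, under the simplifying assumption that each agent is a deterministic function and that the Predictor “reads the source code” by simply running it before filling the boxes. The agent names (`one_boxer`, `two_boxer`, `waverer`) are made up for illustration.

```python
from typing import Callable

Agent = Callable[[], str]  # a decision procedure returning "one-box" or "two-box"


def one_boxer() -> str:
    return "one-box"


def two_boxer() -> str:
    return "two-box"


def waverer() -> str:
    plan = "one-box"      # starts out intending to one-box...
    if plan == "one-box":
        plan = "two-box"  # ...then "changes its mind", but this line is code too
    return plan


def play_newcomb(agent: Agent) -> int:
    # The Predictor "reads the source code" by running it ahead of time.
    prediction = agent()
    opaque_box = 1_000_000 if prediction == "one-box" else 0
    transparent_box = 1_000
    # Later, in the tent, the very same code makes the real choice.
    choice = agent()
    return opaque_box if choice == "one-box" else opaque_box + transparent_box


for agent in (one_boxer, two_boxer, waverer):
    print(agent.__name__, play_newcomb(agent))
```

The waverer ends up with the same $1000 as the committed two-boxer: its last-minute change of mind was already part of the code the Predictor ran.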

This seems logical enough for a purely mechanical AI, right? Well, the same logic applies to us flesh-and-blood humans too – because after all, at the end of the day, our brains are nothing but biological machines themselves, and the decisions we make are nothing but the product of our neural programming. (See my previous post for more on this.) Whatever choice you might make inside the Predictor’s tent is purely the result of whatever decision-making algorithm is running in your brain at that time; so even though it might feel like you’re freely choosing it in the moment, both your choice and the contents of the boxes are results of what your brain has been pre-programmed to make you do in that situation. Funny enough, that means that even if both boxes were completely transparent and you could actually see that one of them contained $1,000,000, the fact that it did contain that money would necessarily mean that you’d find yourself unable (or more accurately, unwilling) to take both boxes; the fact that the Predictor had perfectly read your programming would mean that there could be no other possible outcome. Seeing the full $1,000,000 box wouldn’t prompt you to also take the $1000 box, because the only way you could have gotten to that point would be if you were the kind of person whose brain was programmed to disregard that temptation. Seeing both boxes full would simply alert you to the fact that your brain was already in the process of deciding to one-box (even if you weren’t consciously aware of it yet). Again, there’s no timeline-branch in which it could be true both that the $1,000,000 box was full and that you would walk away with the $1000, any more than such a thing would be possible if you were a pre-programmed AI. Getting the $1,000,000 is the best outcome that it’s possible for you to achieve; so that’s the outcome that you should be precommitted to bringing about.

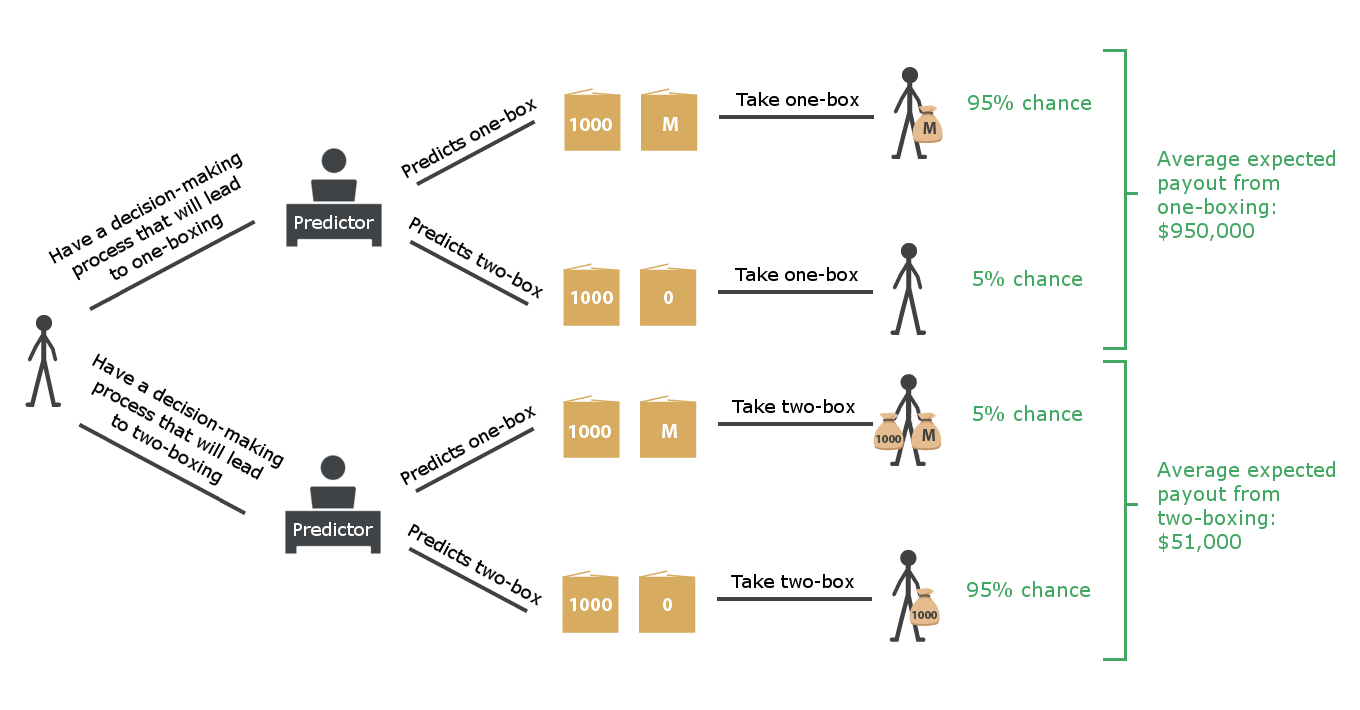

Of course, where this thought experiment gets a bit trickier is when you imagine a variation in which the Predictor isn’t actually perfectly accurate. To go back to the version of the thought experiment in which you were an AI, for instance, imagine that there were (say) a 5% chance that the programmer reading your code would smudge their glasses or get distracted or something, and would consequently misread your code in such a way that it would cause them to inaccurately predict your eventual choice. In that case, would it still be best to only take the opaque box? Well, again, you have to think of it in terms of potential timeline-branches. In the previous version of the problem, we could say with 100% certainty that the timeline-branch in which you walked away with $1,001,000 didn’t and couldn’t exist. But now, with this new fudge factor to account for, there’s a 5% chance that it actually could exist. Of course, that still leaves a 95% chance of it not existing – and it also leaves a 5% chance that you might one-box, only to find that the opaque box has been left empty because the programmer failed to see that that’s what you’d do. So this version of the problem isn’t quite as simple as just comparing the $1,000,000 payout to the $1000 payout and picking the higher one; instead, you have to calculate the expected value of each possible choice, accounting for the fact that the best- and worst-case timeline-branches only have a 5% chance of actually existing. In other words, your possible outcomes look like this:

What this means, then, is that once you crunch the numbers, your average expected payout from one-boxing will be $950,000, whereas your average expected payout from two-boxing will be $51,000. Clearly, with an error rate of only 5%, your best decision-making algorithm will still be to precommit to one-boxing, for all the same reasons discussed above.
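
To check that arithmetic, here’s a minimal Python sketch of the expected-value calculation, using exact fractions to avoid rounding. The function name `expected_payouts` and its `accuracy` parameter (the probability that the programmer reads your code correctly) are illustrative choices, not part of the original thought experiment.

```python
from __future__ import annotations

from fractions import Fraction

TRANSPARENT = 1_000      # always in the transparent box
OPAQUE_FULL = 1_000_000  # in the opaque box only if one-boxing was predicted


def expected_payouts(accuracy: Fraction) -> tuple[Fraction, Fraction]:
    """Return (one-box, two-box) expected payouts for a given prediction accuracy."""
    error = 1 - accuracy
    # One-box: a correct prediction fills the opaque box; an error leaves it empty.
    one_box = accuracy * OPAQUE_FULL + error * 0
    # Two-box: a correct prediction leaves it empty; an error fills it anyway.
    two_box = accuracy * TRANSPARENT + error * (OPAQUE_FULL + TRANSPARENT)
    return one_box, two_box


one_box, two_box = expected_payouts(Fraction(95, 100))
print(one_box, two_box)  # 950000 51000
```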

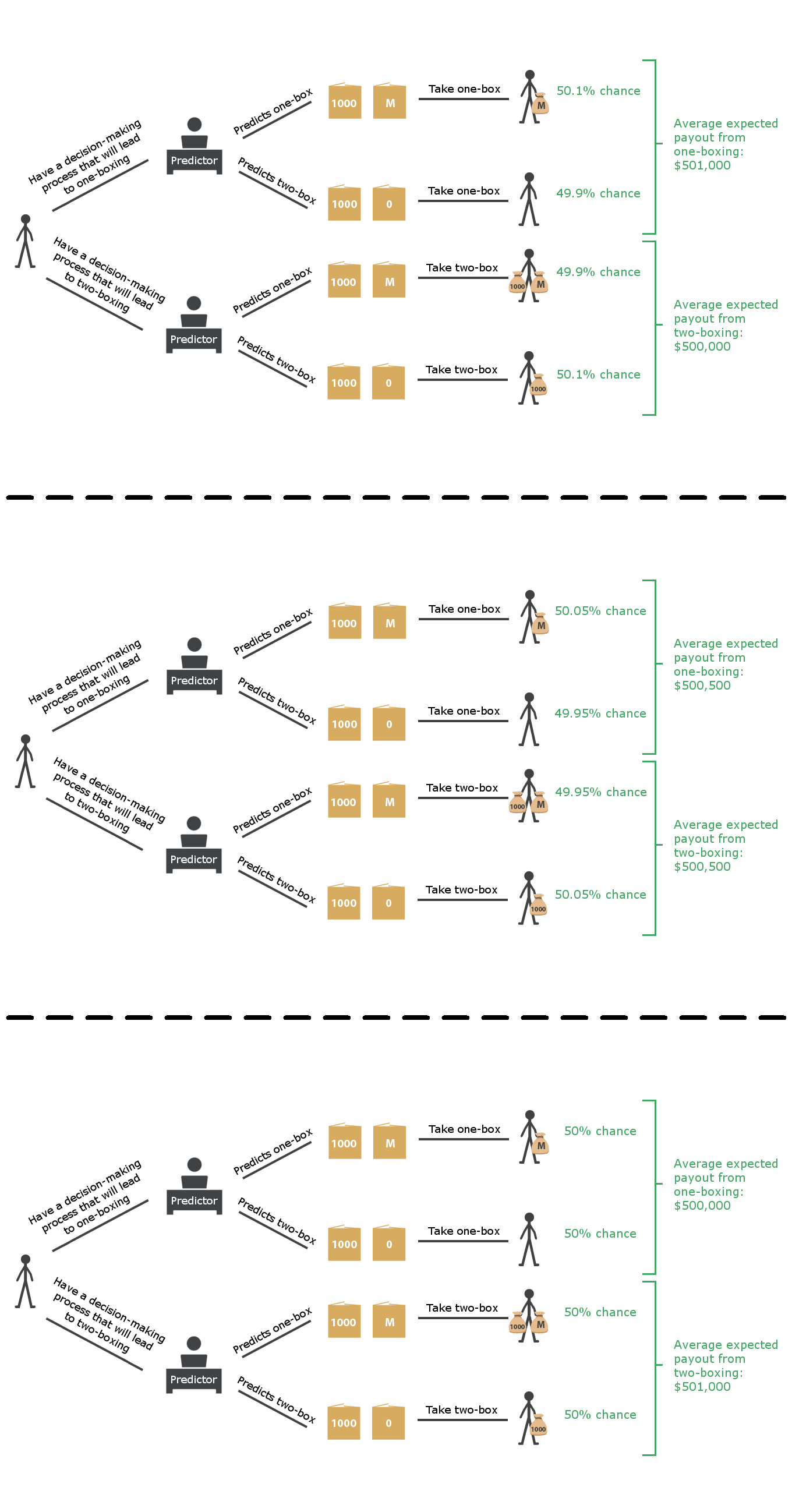

But what if the error rate is higher – like 20%, or 30%, or 40%? Actually, in all of these cases, the expected payout from one-boxing is still higher than the expected payout from two-boxing; in fact, it remains higher even if the Predictor’s accuracy drops all the way down to 50.1%. Believe it or not, the two options only break even when the Predictor’s accuracy is exactly 50.05% (at which point the expected payouts from one-boxing and two-boxing both equal $500,500), and it’s not until the accuracy dips below that threshold that it becomes worthwhile to take both boxes. Anything above that, and the expected benefit of potentially getting an extra $1000 is outweighed by the likelihood that that particular timeline-branch won’t even be available to you at all.
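
The 50.05% threshold falls out of a little algebra. Writing p for the Predictor’s accuracy (a symbol introduced here for the sketch), one-boxing is worth p × $1,000,000 on average, while two-boxing is worth p × $1000 + (1 − p) × $1,001,000, which simplifies to $1,001,000 − p × $1,000,000. The minimal Python sketch below solves for the crossover point with exact fractions and then checks a few accuracies on either side of it.

```python
from fractions import Fraction

TRANSPARENT = 1_000
OPAQUE_FULL = 1_000_000


def one_box_ev(p: Fraction) -> Fraction:
    return p * OPAQUE_FULL


def two_box_ev(p: Fraction) -> Fraction:
    return p * TRANSPARENT + (1 - p) * (OPAQUE_FULL + TRANSPARENT)


# Setting p * 1,000,000 equal to 1,001,000 - p * 1,000,000 gives
# p = 1,001,000 / 2,000,000.
break_even = Fraction(OPAQUE_FULL + TRANSPARENT, 2 * OPAQUE_FULL)

print(break_even, float(break_even))  # 1001/2000 0.5005
print(one_box_ev(break_even), two_box_ev(break_even))  # 500500 500500

# Accuracies of 80%, 70%, 60%, 50.1%, and 50% (error rates of 20%, 30%, 40%, ...).
for p in (Fraction(8, 10), Fraction(7, 10), Fraction(6, 10), Fraction(501, 1000), Fraction(1, 2)):
    better = "one-box" if one_box_ev(p) > two_box_ev(p) else "two-box"
    print(float(p), better)
```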

I could keep going here; there are about a dozen other variations of this thought experiment and others we could explore in this vein. (If you’re really interested, Yudkowsky and Nate Soares discuss several of them in this paper, which approaches these ideas from a somewhat different angle from the one I’ve described here but ends up reaching many of the same conclusions.) But at this point, you probably get the idea. In each of these examples, the solution will lie in your choice of which decision-making algorithm to have implemented in your brain, not necessarily which object-level decision seems to offer the highest reward in the moment it’s presented to you. Coming out ahead in these scenarios is entirely a matter of being precommitted to following the highest-utility possible branch of the timeline. And that’s not just true for isolated scenarios like the ones described above; it also applies to your entire life as a whole. To bring it all full circle here, then, we can go all the way back to the original position, back before you were even born, and say that there would have been no better way for you to have maximized your expected utility – not just for one or two hypothetical future situations, but for your whole life – than to have made the implicit precommitment (for acausal trade reasons) to follow whichever branch of the universe would best maximize the utility of its inhabitants, regardless of who you eventually became after you were born, or what specific situations you might have eventually encountered in your life. Fortunately, it just so happens that that’s the pledge you did precommit yourself to following (thanks to your Master Preference), whether you consciously realize it now or not – and it’s the one to which everyone else has precommitted themselves as well. What that means, then, is that when you’re in a situation where your own utility is the only thing at stake, you’re obligated to do whatever will produce the best results for you. 
And what it also means, as I’ve been saying all along, is that when you’re in a situation where you’re not the only one involved, and the preferences of others also have to be accounted for, you’re obligated to do what will satisfy those preferences to the greatest possible extent. In other words, you’re obligated to act in exactly the way that you would have wanted someone in your position to act if you were still in the original position, describing how you’d want future events to play out, and you didn’t know whether you’d actually become that person or not. That’s the principle that should always guide your actions: Imagine what someone in the original position would want you to do, despite not knowing whether they’d become you or not – then follow that course of action. (An alternative way of conceptualizing this is to imagine that you aren’t just an individual, but that you’re everyone – some kind of all-encompassing superorganism comprising all sentient life – and then do whatever maximizes the utility of that whole.) That, in short, is the objectively right way to act. And so if I had to sum up this whole framework in a nutshell, that’s what I’d say the bottom line is. The decisions we make do have objectively right answers. There is an objective morality, which we’re all obligated to follow. And what it says is that we should all act in such a way as to bring the universe onto the timeline-branch that provides the greatest degree of preference satisfaction for its inhabitants. What that means in practice, simply enough, is that the moral wisdom you’ve already heard is true: Love yourself, and love others as you love yourself. You may not always succeed, but all that matters is that you do the best you can. That, to quote Hillel the Elder, is the whole of the law – and everything else is commentary. ∎