In my last post, I talked about why I’m not religious and why I don’t think religion provides a good basis for morality. Whenever this topic comes up, one of the most common responses from believers is to ask: Well, if you don’t think morality comes from God, where do you think it comes from? If God isn’t defining which actions are good and which are bad, then who is? Does everybody just use their own definitions of good and bad? Are we stuck with a system of moral relativism, in which there’s no basis for saying anyone’s definition is more legitimate than anyone else’s, and the conceptions of morality promoted by the Nazis and the Taliban are considered to be no less valid than those promoted by Martin Luther King and Mahatma Gandhi? Is morality really nothing more than a matter of cultural convention or personal opinion or preference – like a favorite ice cream flavor or something – with no objectively correct answer?

It’s a reasonable concern, because there actually are quite a few people who do believe in this kind of relativism. You’ll sometimes encounter well-meaning progressive types, for instance, who start from the premise that it’s good to respect other cultures (which is certainly true!) but then take that premise as such an absolute that they extend it far beyond its reasonable limits, to the point that they’ll readily accept even the most brutal and inhumane practices in the name of universal tolerance. This attitude can lead to some ugly results – as when, for instance, the government of Brunei recently attempted to justify its draconian penal code (which imposes punishments like amputation and stoning to death for offenses like adultery, theft, and homosexual behavior) with the assertion that “it must be appreciated that the diversities in culture, traditional and religious values in the world means that there is no one standard that fits all.” Not exactly that progressive after all, it turns out.

But in addition to the “all cultural practices are equally respectable” crowd (AKA “normative relativists”), there are also plenty of people – including professional philosophers – who don’t necessarily like the idea of tolerating all practices equally, yet can’t find any way of grounding that stance objectively, and so feel compelled to bite the bullet and admit that it’s all subjective (AKA “meta-ethical relativists”).

Personally, I share the intuition held by most people that moral relativism can’t be the right answer. But what’s the alternative? If people’s various conceptions of goodness and badness are totally subjective, how can we say that statements like “Torturing innocent people for fun is wrong” or “It’s immoral to enslave other people for profit” are somehow objectively correct? On what basis (other than pure intuition) can we claim that objective moral truths exist? Is such a thing even possible?

I actually think it is. But before I explain why, I should point out that there are actually two distinct questions we need to answer here. The first question, of course, is how we can objectively say what’s good and what’s bad. But even if we manage to answer that, it doesn’t automatically mean that we’ve solved all of morality. There’s also the second question, which is: Even if we can objectively define good and bad, why should we then do what’s good rather than what’s bad? How do we ground the assertion that we ought to do what’s right rather than simply doing what’s best for ourselves?

There’s a lot of overlap between these questions; but they do require two distinct answers, and answering one won’t necessarily answer the other. Ultimately, I’ll try to answer both in this post. But let’s take them one at a time, starting with the first one.

The first thing I think we have to recognize is that the relativists are actually right about one point: When we talk about goodness and badness (not merely in the specific moral sense of “right” and “wrong,” but also in the general sense of “desirable” and “undesirable” more broadly), we aren’t talking about some inherent property that the universe has, or that particular acts have. Properties of goodness and badness don’t just exist “out there” in the world, independent of anyone’s will. Acts can only have positive or negative value if that value is ascribed to them by sentient beings. If the world hypothetically consisted of nothing but rocks, and the wind caused one rock to roll down a hill and land on another rock, that event wouldn’t have any value one way or the other; it would just be something that happened. (By contrast, if the rock crushed someone’s foot, that person would certainly consider that a bad outcome.) The only way an event can be called good or bad (i.e. positive-value or negative-value) is if there’s at least one sentient being around to consider it good or bad. In other words, you can’t have value without a valuer.

That’s the part I agree with the relativists on: These kinds of valuations are necessarily subjective. The word “goodness” is basically just a synonym for “the positive value that we ascribe to things.” But does that then mean that we can never objectively say which actions are more good (i.e. have higher value) and which are more bad (i.e. have lower value)? On the contrary – as odd as it might sound, I think that subjective valuations are actually the very thing in which the objective goodness or badness of our actions can be grounded. Let me explain.

See, the key point here is that just because something is based on subjective valuations or experiences doesn’t mean that we can’t make objective statements about it. This is something we can see clearly enough when it comes to things other than goodness/badness, like our emotions and tactile sensations. Let’s say, for instance, that you burn your hand on a hot stove. The pain you feel from that experience is entirely subjective, true. But the fact that the pain exists is not. It’s not just a matter of opinion whether burning your hand causes you pain; it’s an objective fact that the universe in which you burn your hand contains more pain than an otherwise identical universe in which you don’t burn your hand. That’s not because painfulness is some inherent physical property of hot stoves; the subjective experience of hurting is what constitutes pain. But it’s because of that subjectivity-based definition that we can objectively say that some things are more painful than others. When we say that hot stoves are objectively more painful than room-temperature stoves, what we’re saying is that they objectively produce more of that subjective experience known as pain.

Similarly, take something like disgust. We can all agree that disgust is an inherently subjective valuation, not something that exists independently “out there” in the universe. The only way something can be called disgusting is if someone thinks it’s disgusting. And yet, it’s also possible to say that a hypothetical universe in which (say) everyone was asked to examine a bowl of vomit would objectively contain more disgust than an otherwise-identical universe in which everyone was asked to examine a bowl of roses. Despite the fact that disgust is a purely subjective valuation, we can nonetheless say objectively that certain universe-states will contain more or less disgust than others. Again, just because something is based on subjective valuations doesn’t mean that we can’t make objective statements about it.

And we can apply this same framework to the concept of goodness. Moral philosophers will often suggest that the only way to objectively ground the concept of goodness is to somehow find a way around the inconvenient fact that goodness seems to be nothing but a subjective valuation that we ascribe to things. But instead of trying to find a way around that premise, I think we can actually accept it as obviously true, embrace it, and run with it – and ironically, it’s precisely by doing so that we can form an objective basis for saying what’s good and what’s bad.

To illustrate what I mean, here’s one more thought experiment to go with the others above: Imagine a hypothetical universe in which (say) a mother noticed that her son was sad and gave him a hug; and then imagine a second otherwise-identical universe in which she stabbed him instead. In these scenarios, we can safely say that the inhabitants of the first universe (particularly the mother and son themselves) would ascribe a higher level of positive value (i.e. goodness) to the hug than the inhabitants of the second universe would ascribe to the stabbing. (Maybe if the mother in the second universe was a psychotic sadist or something, she might consider stabbing her son to be a good thing; but however much positive value she might ascribe to it, her son would certainly ascribe a whole lot more negative value to it – not to mention whatever negative value might be ascribed to it by the other inhabitants of their universe – so on net, the overall level of goodness ascribed to the stabbing would be significantly negative.) All of these valuations would be totally subjective, of course; the level of goodness ascribed to the mother’s actions by one person might be totally different from the level of goodness ascribed to them by another person. But what wouldn’t be subjective is whether the hugging universe ultimately contained a higher level of this subjectively-ascribed goodness than the stabbing universe. It inarguably would. What this shows, then, is that by accepting that goodness is nothing but a subjective valuation that we ascribe to things, we actually make it possible to make objective statements about which acts have higher levels of goodness than others.

Another way of saying this is that we don’t have an affinity toward certain things because they’re innately good and an aversion to other things because they’re innately bad; rather, our affinities and aversions toward these things are what constitute their goodness or badness. If we give something a higher level of subjective valuation, we can objectively say that it has a higher level of goodness, and if we give something a lower level of subjective valuation, we can objectively say that it has a lower level of goodness – because those subjective valuations are what goodness and badness are. Again, the only value that can exist is value that is ascribed to something by sentient beings like us. So if one action has a higher level of subjectively-ascribed value than another action, it’s necessarily better by definition, in the same sense that a triangle necessarily has three sides or a circle is necessarily round.

This might sound superficially similar to moral relativism; but it couldn’t be more different in its conclusions. Under moral relativism, there would be no basis for saying that (for instance) a society that routinely murdered children for fun was acting badly. Under this alternative system, though, if the society’s affinity for murdering its children was outweighed by the children’s aversion to being murdered (which it certainly would be, assuming the children were normal humans), we’d be able to objectively say that the society’s practice of child murder was morally bad. (And since there would also be extremely negative second-order effects on the broader society, this would be even more the case.) Weighing all the subjective valuations against each other would yield a total level of goodness or badness that we could objectively identify as positive or negative. And that’s the bottom line here: We can evaluate moral goodness as an objective quantification of individuals’ subjectively-held valuations and interests.

This is essentially what I see as the basis for a kind of utilitarianism. If you’ve studied moral philosophy before, you’ll already be familiar with utilitarianism – but if not, its core idea is that the morality of an action is defined by how much that action benefits sentient beings like us and how little it harms them. (For this reason, it’s considered a branch of consequentialism, which simply says that the morality of an action is defined by its consequences.) This isn’t the only way of trying to define morality, of course; there are also philosophies like deontology – utilitarianism’s biggest rival – which says that the consequences of an action aren’t actually what determine its morality at all, but that morality instead consists solely of following certain unconditional rules at all times, like “don’t lie” and “don’t steal” and so on, regardless of the consequences. Under the deontological model, if an axe murderer shows up at your front door and asks you where your best friend is, you should tell him exactly where she is – even if you know he’s going to go kill her – because lying is immoral, period, in every context. But according to utilitarianism, morality is more than just unconditional rule-following; the conditions actually matter. You have to weigh the harms against the benefits – and whichever choice produces the least amount of harm and the greatest amount of benefit (AKA utility, AKA the kind of subjectively-ascribed goodness I’ve been talking about), that’s the moral choice.

So in the above example, for instance, if you imagined using a numerical scale to rate the amount of utility that would accrue to everyone involved, you might conclude something like: Telling the axe murderer where your friend is would give a +20 utility boost to the murderer (since it would help him in his mission to kill her), a +10 boost to yourself (since you’d get the satisfaction of being honest), and a +500 boost to the broader society (since it would help reinforce a social norm of everyone being honest all the time), but it would also result in a utility change of -10,000,000 for your friend (since she would be killed and lose everything), -10,000 for all her loved ones (since they’d be devastated by her death), -300 for yourself (since you’d have to live with the guilt of knowing you abetted your friend’s murder), and -500 for the broader society (since it would help reinforce a social norm of everyone readily abetting axe murderers upon request) – so on net, telling the axe murderer where your friend is would be a significant negative in terms of total utility (a net change of -10,010,270 overall) relative to the alternative choice of lying to him. These numerical utility ratings, of course, would be based entirely on each person’s subjective valuation of the possible outcomes; the utility boost that the murderer would get from killing your friend might be higher or lower than +20 depending on how much he wanted to kill her, the utility change that your friend would experience from dying might be higher or lower than -10,000,000 depending on how much she wanted to live, and so on.
But knowing the subjective valuations of everyone involved would give us all the information we’d need to objectively say whether the act of lying to the murderer would ultimately be a good thing (positive utility) or a bad thing (negative utility) – because again, goodness and badness are nothing but subjective valuations themselves, and it is in fact possible to make objective statements about such things.
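
To make the arithmetic concrete, here’s a minimal Python sketch that tallies those numbers. The dictionary labels and the `net_utility` helper are names I’m inventing purely for illustration, and the figures are the same made-up ratings from the example (each one measured relative to the alternative of lying), so treat this as a toy bookkeeping exercise rather than a real method for measuring utility.

```python
# Toy tally for the choice "tell the axe murderer the truth".
# Every number is an invented, illustrative rating from the example,
# expressed relative to the alternative choice of lying to him.
truth_telling_effects = {
    "murderer (helped in his mission)": 20,
    "you (satisfaction of being honest)": 10,
    "society (norm of honesty reinforced)": 500,
    "friend (killed)": -10_000_000,
    "friend's loved ones (devastated)": -10_000,
    "you (guilt over abetting the murder)": -300,
    "society (norm of abetting murderers reinforced)": -500,
}


def net_utility(effects):
    """Add up every party's subjective valuation into a single total."""
    return sum(effects.values())


total = net_utility(truth_telling_effects)
verdict = "bad" if total < 0 else "good"

print(f"Net utility of telling the truth: {total:,}")
print(f"Relative to lying, telling the truth is the {verdict} choice.")
```

Running it gives a net utility of -10,010,270, the same total as in the example. The individual entries are subjective, but once they’re fixed, the sum is not a matter of opinion; change the valuations enough and the verdict changes with them.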

(Obviously, here in the real world we can’t know the utility values of every situation with the kind of exact numerical precision used in the example above; I just made up the numbers there for illustrative purposes. But we can at least approximate. And who knows, maybe at some point in the near future, we’ll develop such advanced brain scanning and computing technology that we actually will be able to determine exactly how much utility people ascribe to things. Either way though, the fact that we might only ever have an imperfect understanding of the objective moral truth of any given situation doesn’t change the fact that there is an objective truth there to be found, whether our estimations of it are perfect or not.)

Needless to say, I think utilitarianism/consequentialism makes a lot more sense than deontology as a foundation for morality – not only because it just seems more intuitively plausible (Would a deontologist refuse to tell a lie even if the consequence was that the entire universe would be destroyed?), but also because the fundamental basis for deontology just doesn’t seem like something you can actually pin down once you probe into its internal logic. For instance, in the example of the axe murderer, you might refuse to tell a lie because you consider “tell the truth” to be an inviolable moral duty; but who’s to say that you couldn’t just as easily consider something like “protect the innocent” or “don’t abet murder” to be an inviolable moral duty (which would lead you to take the opposite action and lie to the murderer)? Which rules are the ones you should consider absolute, and how should you make that determination? The guideline given by Immanuel Kant, history’s most famous deontologist philosopher, is that you should only consider something to be moral if it’s universalizable – meaning that (according to its most popular interpretation) you should only do something if you’d be willing to live in a world where everyone did it. Hence, telling lies would be considered immoral, since you wouldn’t want to live in a world where everyone lied whenever they thought they could get away with it. But by that same token, refusing to protect an innocent person from being murdered would also have to be considered immoral, since you wouldn’t want to live in a world where people readily abetted murderers upon request. The moral duty of telling the truth and the moral duty of protecting innocent life would fundamentally conflict with each other in this scenario – and since (for the purposes of this thought experiment) you wouldn’t be able to uphold both at the same time, how would you then figure out what to do? 
One approach, of course, would be to try and avoid the contradiction by formulating rules that were more narrowly tailored to particular circumstances – i.e. instead of having broad rules like “don’t lie” and “protect the innocent,” you could have much more specific rules like “don’t lie to firefighters when they ask you where the occupants of a burning building are” or “do go ahead and lie to axe murderers when they ask you where their would-be victims are hiding.” But even then, there are always so many nuances of circumstance in every decision, and accordingly so many potential moral tradeoffs to account for, that no two decisions are exactly alike; so if you wanted rules that were specific enough to never conflict with any other rules, you’d have to get extremely specific, to the point where you’d practically be creating a new rule for every individual situation on a one-by-one basis. And at that point, you’d be defeating the whole purpose of deontology, because you’d no longer be using broadly generalizable rules of moral behavior at all; you’d essentially just be doing a more convoluted version of what utilitarians do, weighing different moral considerations against each other according to the circumstances of each situation.

At any rate, it seems to me that the whole idea of trying to have a rule-based system of morality that’s separate from all consequentialist considerations is futile from the start, regardless of how you resolve such conflicts between rules. After all, the only way of determining whether a rule can be considered universalizable in the first place is to ask whether you’d rationally want to live in a hypothetical world where the rule had been made standard for everyone to follow – and how can you answer that question without basing it on your subjective judgment of what the consequences of that hypothetical scenario would be? How can you determine whether it would be desirable to live in a world where everybody lied, or everybody stole, or everybody killed, without considering what the consequences of those actions would be if they were universalized?

This was the argument that Jeremy Bentham, the founder of utilitarianism, made against deontology. According to him (and his successor John Stuart Mill), despite deontology’s claim to be a rival theory to consequentialism, once you drilled down far enough, it turned out that it was actually based on consequentialist considerations itself without realizing it. In fact, not only was deontology subsumed by consequentialism; so was every other serious ethical theory on the market. As Michael Sandel puts it:

Bentham’s argument for the principle that we should maximize utility takes the form of a bold assertion: There are no possible grounds for rejecting it. Every moral argument, he claims, must implicitly draw on the idea of maximizing [utility]. People may say they believe in certain absolute, categorical duties or rights. But they would have no basis for defending these duties or rights unless they believed that respecting them would maximize human [utility], at least in the long run.

In other words, even if you had a system of morality that tried to define the goodness of an act by something other than its consequences – like whether the person doing it had good motives, or whether their actions adhered to a certain set of rules, etc. – once you actually dug a little deeper and asked what the basis was for those criteria (What is it that makes good motives good? What is it that makes adherence to certain rules good? Etc.), you’d ultimately have to either arrive at some consequentialist/utilitarian justification for what you were calling good, or else find yourself stuck in a tautology. That isn’t to say, of course, that it wouldn’t even be theoretically possible to have a normative system that had nothing to do with utility – you could, for instance, have a system that said something like “what constitutes goodness is always wearing green on Sundays” – but at that point it seems fair to say that what you’d then have would no longer be a real system of morality at all, but something else entirely.

(Speaking of which, I should mention for the sake of completeness that the brand of deontology promoted by Kant himself was actually somewhat different from the more popular formulation of deontology I’ve been addressing here, and did in fact make more of an effort to define goodness in a non-consequentialist way. I don’t think he was ultimately successful in this effort; as G. W. F. Hegel points out, the standard for goodness Kant uses seems more like a test of non-contradiction than a true test of morality. Still, a lot of Kant’s ideas are genuinely valuable even within a consequentialist framework, and I’ll be bringing a few of them back into the discussion later on.)

I could keep going on about the whole consequentialism vs. deontology debate, but others have already covered it exhaustively and it’s not really my main focus here, so I don’t want to spend too much more time on it. I’ll just add that if you’re still on the fence about it (or even if you aren’t), I’d highly recommend Scott Alexander’s post on the subject here; I’ll be quoting him quite a bit in this discussion. (See also his brief response here to T.M. Scanlon’s “incommensurability” counterargument.)

Now of course, none of this is to say that consequentialism and utilitarianism don’t raise some tricky questions of their own. For one thing, if morality is always a matter of having to weigh various interests against each other (as opposed to just having black-and-white rules which say that certain actions are always right and others are always wrong), does that mean that nothing is truly sacrosanct? Are there really no duties or rights – like people’s lives, or their freedom – that are so unconditional that they couldn’t be violated if doing so would provide a big enough net utility gain?

Any time you start considering the idea of potential moral tradeoffs, it’s easy to fall back onto absolutist principles like “there are certain rights that must never be violated for any reason,” and “life must be protected at all costs.” But the thing is, we disregard these principles all the time, and for good reasons. We restrict people’s right to freedom of speech any time we prohibit them from falsely shouting “fire!” in a crowded theater. We endanger people’s lives any time we allow the speed limit to be higher than 20 miles per hour. If we really considered these rights to be totally inviolable, we could guarantee total freedom of speech by allowing people to say whatever they wanted at any time; we could guarantee maximal public safety by confining everyone to protective bubbles at all times and never letting anyone do anything that might endanger them in any way; and so on. The fact that virtually none of us think these are good ideas reveals that, although it’s generally good to think of certain rights and liberties as being absolutely inviolable, there are in fact real limits to them that have to be recognized. The utility value of these rights and liberties is immensely high, no doubt – and should be treated accordingly – but it isn’t infinite. And so if the harm of unconditionally guaranteeing them in some specific situation would outweigh any possible benefit, it would be better not to guarantee them in that situation.

This is especially true considering that in most such situations, the thing that shifts the balance of the utility calculations in favor of violating some particular right or liberty is the fact that doing so would be the only way to protect some other equally important right or liberty. We have a habit here in the US of treating freedom as some kind of binary thing that you either have or don’t have; you’re either totally free, or your rights are being infringed (and therefore you’re being oppressed). But in truth, it isn’t possible to have absolute freedom at all times, simply because different freedoms often conflict with each other. One person’s right to use their property as they see fit (say, by building a factory) might conflict with another person’s right to life and health (if the pollution from the factory would damage their lungs). A newspaper’s right to freely publish news stories might conflict with its subjects’ right to privacy (if the newspaper published details of those people’s personal lives). In order to protect some people’s rights, other people’s rights sometimes have to be impinged upon. Again, it all comes down to the utilitarian process of weighing the various interests against each other. And although things like rights and duties and generalized rules of conduct absolutely can (and should) play a role within this utilitarian framework, they don’t form the foundation themselves; they’re more like helpful tools for ensuring that utility is maximized.

Here’s an excerpt from Alexander’s Q&A that explains:

[Q]: So what about all the usual moral rules, like “don’t lie” and “don’t steal”?

Consequentialists accord great respect to these rules. But instead of viewing them as the base level of morality, we view them as heuristics (“heuristic” – a convenient rule-of-thumb which is usually, but not always true).

For example, “don’t steal” is a good heuristic, because when I steal something, I deny you the use of it, lowering your utility. A world in which theft is permissible is one where no one has any incentive to do honest labor, the economy collapses, and everyone is reduced to thievery. This is not a very good world, and its people are on average less happy than people in a world without theft. Theft usually lowers utility, and we can package that insight to remember later in the convenient form of “don’t steal.”

[Q]: But what do you mean when you say these sorts of heuristics aren’t always true?

In the example with the axe murderer […] above, we already noticed that the heuristic “don’t lie” doesn’t always hold true. The same can sometimes be true of “don’t steal”.

In Les Miserables Jean Valjean’s family is trapped in bitter poverty in 19th century France, and his nephew is slowly starving to death. Valjean steals a loaf of bread from a rich man who has more than enough, in order to save his nephew’s life. Although not all of us would condone Jean’s act, it sure seems more excusable than, say, stealing a PlayStation because you like PlayStations.

The common thread here seems to be that although lying and stealing usually make the world a worse place and hurt other people, in certain rare cases they might do the opposite, in which case they are okay.

[Q]: So it’s okay to lie or steal or murder whenever you think lying or stealing or murdering would make the world a better place?

Not really. Having a hard-and-fast rule “never murder” is, if nothing else, painfully clear. You know where you stand with a rule like that.

There’s a reason God supposedly gave Moses a big stone with “Thou shalt not steal” and not “Thou shalt not steal unless you have a really good reason.” People have different definitions of “really good reason”. Some people would steal to save their nephew’s life. Some people would steal if it helped defend their friends from axe murderers. And some people would steal a PlayStation, and think up some bogus moral justification for it later.

We humans are very good at special pleading – the ability to think that MY situation is COMPLETELY DIFFERENT from all those other situations other people might get into. We’re very good at thinking up post hoc justifications for why whatever we want to do anyway is the right thing to do. And we’re all pretty sure that if we allowed people to steal if they thought there was a good reason, some idiot would abuse it and we’d all be worse off. So we enshrine the heuristic “don’t steal” as law, and I think it’s probably a very good choice.

Nevertheless, we do have procedures in place for breaking the heuristic when we need to. When society goes through the proper decision procedures, in most cases a vote by democratically elected representatives, the government is allowed to steal some money from everyone in the form of taxes. This is how modern day nation-states solve Jean Valjean’s problem without licensing random people to steal PlayStations: everyone agrees that Valjean’s nephew’s health is more important than a rich guy having some bread he doesn’t need, so the government taxes rich people and distributes the money to pay for bread for poor families. Having these procedures in place is also probably a very good choice.

[Q]: So is it ever okay to break laws?

I think civil disobedience – deliberate breaking of laws in accord with the principle of utility – is acceptable when you’re exceptionally sure that your action will raise utility rather than lower it.

To be exceptionally sure, you’d need very good evidence and you’d probably want to limit it to cases where you personally aren’t the beneficiary of the law-breaking, in order to prevent your brain from thinking up spurious moral arguments for breaking laws whenever it’s in your self-interest to do so.

I agree with the common opinion that people like Martin Luther King Jr. and Mahatma Gandhi who used civil disobedience for good ends were right to do so. They were certain enough in their own cause to violate moral heuristics in the name of the greater good, and as such were being good utilitarians.

[Q]: What about human rights? Are these also heuristics?

Yes, and political discussion would make a lot more sense if people realized this.

Everyone disagrees on what rights people do or do not have, and these disagreements about rights mirror their political positions only in a more inscrutable and unsolvable way. Suppose I say people should get free government-sponsored health care, and you say they shouldn’t. This disagreement is problematic, but it at least seems like we could have a reasonable discussion and perhaps change our minds. But if I assert “People should have free health care because everyone has a right to free health care,” then there’s not much you can say except “No they don’t!” The interesting and potentially debatable question “Should the government provide free health care?” has turned into a purely metaphysical question about which it is theoretically impossible to develop evidence either way: “Do people have a right to free health care?”

And this will only get worse if you respond “And you can’t raise my taxes to fund universal health care, because I have a right to my own property!”

Whenever there’s a political conflict, both parties figure out some reason why their natural rights are at stake, and the arbitrator can do whatever [they feel] like. No one can prove [them] wrong, because our common notion of rights is an inherently fuzzy concept created mainly so that people who would otherwise say things like “I hate euthanasia, but I guess I have no justification” can now say things like “I hate euthanasia, because it violates your right to life and your right to dignity.” (I actually heard someone use this argument a while ago)

Consequentialism allows us to use rights not as a way to avoid honest discussion, but as the outcome of such a discussion. Suppose we debate whether universal health care will make our country a better place, and we decide that it will. And suppose we are so certain about this decision that we want to enshrine a philosophical principle that everyone should definitely get free health care and future governments should never be able to change their mind on this no matter how convenient it would be at the time. In this case, we can say “There is a right to free health care” – i.e. establish a heuristic that such care should always be available.

Our modern array of rights – free speech, free religion, property, and all the rest – are heuristics that have been established as beneficial over many years. Free speech is a perfect example. It’s very tempting to get the government to shut up certain irritating people like racists, neo-Nazis, cultists, and the like. But we’ve realized that we’re not very good at deciding who genuinely ought to be silenced, and that once we give anyone the power to silence people they’ll probably use it for evil. So instead we enforce the heuristic “Never deny anyone their freedom of speech”.

Of course, it’s still a heuristic and not a universal law, which is why we’re perfectly willing to prevent people from speaking freely in cases where we’re very sure it would lower total utility; for example, shouting “Fire!” in a crowded theater.

[Q]: So consequentialism is a higher level of morality than rights?

Yes, and it is the proper level on which to think about cases where rights conflict or in which we are not certain which rights should apply.

For example, we believe in a right to freedom of movement: people (except prisoners) should be allowed to travel freely. But we also believe in parents’ rights to take care of their children. So if a five year old decides he wants to go live in the forest, should we allow the parents to tell him he can’t?

Yes. Although this is a case of two rights conflicting, once we realize that the right to freedom of movement only exists to help mature reasonable people live in the sort of places that make them happy, it becomes clear that allowing a five year old to run away to the forest would result in bad consequences like him being eaten by bears, and we see no reason to follow it.

But what if that child wants to run away because his parents are abusing him? Everyone has a right to dignity and to freedom from fear, but parents also have a right to take care of their children. So if a five year old is being abused, is it okay for him to run away to a foster home or somewhere?

Yes. Although two rights once again conflict, and even though “right to dignity and freedom from fear” might not be a real right and I kinda just made it up, it’s more important for the child to have a safe and healthy life than for the parents to exercise their “right” to take care of him. In fact, the latter right only exists as a heuristic pointing to the insight that children will usually do better with their parents taking care of them than without; since that insight clearly doesn’t apply here, we can send the child to foster care without qualms.

The proper procedure in cases like this is to change levels and go to consequentialism, not shout ever more loudly about how such-and-such a right is being violated.

[Q]: Summary?

Rules that are generally pretty good at keeping utility high are called moral heuristics. It is usually a better idea to follow moral heuristics than to calculate utility of every individual possible action, since the latter is susceptible to bias and ignorance. When forming a law code, use of moral heuristics allows the laws to be consistent and easy to follow. On a wider scale, the moral heuristics that bind the government are called rights. Although following moral heuristics is a very good idea, in certain cases when you’re very certain of the results – like saving your friend from an axe murderer or preventing someone from shouting “Fire!” in a crowded theater – it may be permissible to break the heuristic.

(An even simpler variation on this conception of rights might be to just regard them as those interests held by every individual which have such a high utility value that it would require a genuinely huge countervailing interest to override them. This might not be a perfect definition compared to Alexander’s – it might be better suited as a definition for “needs” or something – but you understand what I’m getting at. The point here, again, is just that everything must necessarily come down to utility once you get down to the most fundamental level.)

Now, having said all this, there is one sense in which I actually do think that moral rights and duties are fundamental – namely, I think the principle of maximizing global utility can itself be regarded as a moral duty (or more accurately, as a kind of meta-duty), and not just as something that’s good. And I think that the violation of that principle would likewise constitute an infringement on a real moral right (or meta-right). I’ll explain later why I think this is the case. But before we get to that, let me just finish addressing some of the more immediate questions about defining goodness in utilitarian terms, and what the implications of this system are.

One of the more common negative responses to the idea of consequentialism/utilitarianism is to ask: Okay, if everything really does just come down to a calculation of utility tradeoffs, then wouldn’t the resulting lack of respect for human dignity as an end in itself lead to some disturbingly exploitative and unjust outcomes?

The short answer is that it wouldn’t, simply because any consequence that would be an overall negative (including a breakdown of respect for human dignity) would, by definition, not be prescribed by consequentialism. Arguing that a particular course of action would lead to worse outcomes isn’t an argument against consequentialism; it’s an argument within consequentialism, about which course of action will actually lead to the best consequences. As Alexander writes:

There’s a hilarious tactic one can use to defend consequentialism. Someone says “Consequentialism must be wrong, because if we acted in a consequentialist manner, it would cause Horrible Thing X.” Maybe X is half the population enslaving the other half, or everyone wireheading, or people being murdered for their organs. You answer “Is Horrible Thing X good?” They say “Of course not!”. You answer “Then good consequentialists wouldn’t act in such a way as to cause it, would they?”

By way of elaboration, he breaks down the slavery example:

[Q]: Wouldn’t utilitarianism lead to 51% of the population enslaving 49% of the population?

The argument goes: it gives 51% of the population higher utility. And it only gives 49% of the population lower utility. Therefore, the majority benefits. Therefore, by utilitarianism we should do it.

This is a fundamental misunderstanding of utilitarianism. It doesn’t say “do whatever makes the majority of people happier”, it says “do whatever increases the sum of happiness across people the most”.

Suppose that ten people get together – nine well-fed Americans and one starving African. Each one has a candy. The well-fed Americans get +1 unit utility from eating a candy, but the starving African gets +10 units utility from eating a candy. The highest utility action is to give all ten candies to the starving African, for a total utility of +100.

A person who doesn’t understand utilitarianism might say “Why not have all the Americans agree to take the African’s candy and divide it among them? Since there are 9 of them and only one of him, that means more people benefit.” But in fact we see that that would only create +10 utility – much less than the first option.

A person who thinks slavery would raise overall utility is making the same mistake. Sure, having a slave would be mildly useful to the master. But getting enslaved would be extremely unpleasant to the slave. Even though the majority of people “benefit”, the action is overall a very large net loss.

(if you don’t see why this is true, imagine I offered you a chance to live in either the real world, or a hypothetical world in which 51% of people are masters and 49% are slaves – with the caveat that you’ll be a randomly selected person and might end up in either group. Would you prefer to go into the pro-slavery world? If not, you’ve admitted that that’s not a “better” world to live in.)
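To make the arithmetic in the candy example concrete, here's a minimal sketch in Python. The function names are my own, and so is the assumption that utility scales linearly with the number of candies eaten (the example seems to take this for granted, but doesn't say so explicitly). It compares the two allocations on both measures: how many people come out ahead, and what the total utility is.

```python
from fractions import Fraction

# Utility each person gets per candy (numbers taken from the example above).
# Assumption: utility scales linearly with the number of candies eaten.
UTILITY_PER_CANDY = [1] * 9 + [10]  # nine well-fed people, then one starving person


def total_utility(allocation):
    """Sum of utility across all ten people for a list of candy counts."""
    return sum(u * n for u, n in zip(UTILITY_PER_CANDY, allocation))


def people_better_off(allocation, baseline):
    """Count how many people hold more candy than they did in the baseline."""
    return sum(1 for new, old in zip(allocation, baseline) if new > old)


baseline = [1] * 10                            # everyone keeps their own candy
all_to_starving = [0] * 9 + [10]               # all ten candies go to the starving person
split_among_fed = [Fraction(10, 9)] * 9 + [0]  # the nine well-fed people share all ten

for name, allocation in [("all to starving", all_to_starving),
                         ("split among well-fed", split_among_fed)]:
    print(name,
          "| people better off:", people_better_off(allocation, baseline),
          "| total utility:", total_utility(allocation))
```

Counting heads favors the second allocation (nine people come out ahead rather than one), but summing utility favors the first by a factor of ten (100 versus 10). That gap between "more people benefit" and "more total benefit" is the whole point of the example.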

He also addresses the organ-harvesting example (AKA “the transplant problem”):

[Q]: Wouldn’t utilitarianism lead to healthy people being killed to distribute their organs among people who needed organ transplants [assuming there was no other way to save them], since each person has a bunch of organs and so could save a bunch of lives?

We’ll start with the unsatisfying weaselish answers to this objection, which are nevertheless important. The first weaselish answer is that most people’s organs aren’t compatible and that most organ transplants don’t take very well, so the calculation would be less obvious than “I have two kidneys, so killing me could save two people who need kidney transplants.” The second weaselish answer is that a properly utilitarian society would solve the organ shortage long before this became necessary […] and so this would never come up.

But those answers, although true, don’t really address the philosophical question here, which is whether you can just go around killing people willy-nilly to save other people’s lives. I think that one important consideration here is the heuristic-related one mentioned [earlier]: having a rule against killing people is useful, and what any more complicated rule gained in flexibility, it might lose in sacrosanct-ness, making it more likely that immoral people or an immoral government would consider murder to be an option (see David Friedman on Schelling points).

[…]

But note that [this] still is a consequentialist argument and subject to discussion or refutation on consequentialist grounds.

Just as an aside, the transplant problem is particularly interesting to me, because although I share the immediate intuition that, say, a surgeon who killed one unsuspecting bystander to save five lives (as in Judith Jarvis Thomson’s original formulation of the problem) would be acting immorally, Julia Galef points out that it’s possible to tweak the parameters of the problem so that the answer is much less intuitively obvious (or equally obvious but in the opposite direction). For instance, what if killing the bystander would save not just five lives, but fifty lives, or five thousand lives, or five billion lives, or every life in existence? Would it still be more moral to let everyone in the world die than to kill one bystander? The fact that you can raise or lower the utility value on one side of the equation, and thereby change whether the action on the other side of the equation would be acceptable or not, seems like a pretty good indication that utility is in fact the key variable even here. That is to say, the fundamental question isn’t so much whether the bystander has a right not to be unexpectedly killed, but whether the benefit of upholding that right outweighs the alternative.

Assuming it does, though – i.e. assuming it’s right to think that killing the bystander to save only a few patients would be worse than sparing the bystander and letting the patients die – what’s the utilitarian reason for this conclusion? I think Alexander is right with his explanation here; we have an extremely strong heuristic against sudden unprovoked murder, and that heuristic produces a ton of utility in the world specifically because it’s so strong – so maintaining the authority of that rule for use in future situations has a high (if indirect) utility value in itself, on top of the basic object-level considerations of how many lives are lost or saved in the immediate situation. We also have a strong rule against using another person merely as an unconsenting means to some end, as Kant famously put it – even if that end is helping other people – and maintaining the authority of that heuristic likewise has a high utility value due to the better outcomes it’ll tend to produce over the long run. If you want to undermine these rules, then, there has to be an extremely high utility gain on the other side of the scale to justify it; and although saving five billion lives would surely be enough to do so, it’s not quite as obvious that saving only one or two extra lives would. True, it would be good that those lives had been saved – but considering the extent to which word of the surgeon’s actions would undoubtedly spread, and the extent to which it would make everyone afraid to ever go to the hospital again (not to mention all the other negative social effects it would have), it seems clear that it would make the broader society worse off overall.

Of course, there are other factors in play here too – and there are trickier variations on this thought experiment that we might imagine (What if it happened in secret? What if it was the last act of the surgeon’s life?) – so we’ll be coming back to it again later. For now though, the point is just that any interpretation of utilitarianism that claims it would allow for things like mass nonconsensual organ-harvesting (or some other such terrible outcome) makes the basic mistake of only applying the utilitarian calculus to the object level of the situation in question (e.g. lives saved vs. lives lost), rather than recognizing that if there were negative higher-order effects at the level of the broader society, the utilitarian calculus would necessarily take those into account as well. It’s the same kind of mistake creationists make when they argue that life on earth couldn’t have evolved, because the second law of thermodynamics prevents entropy from ever decreasing within a system (that is, a system can’t ever spontaneously grow more ordered, only more chaotic). What this argument overlooks is that there’s actually a huge external factor – namely the massive amount of energy being added into the system by the sun – which tips the entropic scales and does in fact provide the necessary fuel for life to emerge and evolve. And it’s the same story with utilitarianism: Sure, a particular harm-benefit calculation might not appear to make sense if you’re only considering an isolated object-level situation as a closed system – but once you recognize that it’s not a closed system at all and that there are all kinds of external factors to account for, the conclusions suddenly make a lot more sense. It’s true that sometimes you’ll find that allowing for exceptions to widespread moral rules would in fact be better for the world in the long run – and in those cases, that’s what utilitarianism would prescribe. 
But in other cases, you’ll find that it would be better for the world in the long run to set an extremely high bar for breaking certain moral rules, even if it means accepting some utility losses in the short run – and in those cases, that’s what utilitarianism would prescribe. The key is just that in whatever scenario you’re considering, you have to make sure to apply the utilitarian calculus to the whole big-picture situation – the whole state of the universe – rather than to only one small part of it.

(As for the question of how exactly to decide when to abide by moral rules and when to break them, it seems to me that a pretty good meta-heuristic (not a universal rule, of course, but just a general guideline) is to follow Alexander’s “be nice, at least until you can coordinate meanness” approach – that is, don’t make an individual habit of breaking moral rules in isolated situations where it would seem to increase utility more; only allow for moral rules to be broken when it’s collectively implemented in an officially-sanctioned way by the broader society.)

So all right, we’ve established that the function of consequentialist/utilitarian morality is, as Alexander summarizes it, to “try to make the world a better place according to the values and wishes of the people in it” – and we’ve established that the way to tell whether an act meets that standard is to evaluate it in the broadest possible terms, making sure to account for all the various side effects and long-term implications.

The next issue we have to deal with, then, is the fact that trying to “make the world a better place according to the values and wishes of the people in it” depends on what those people’s values and wishes actually are. If those people happen to have values and wishes that are irrational or destructive or otherwise terrible (as a lot of people certainly do), then isn’t that a problem for our system? What if people hold desires that are sadistic or bigoted – or even more problematic, what if people hold desires that don’t even maximize their own utility? How do we deal with that?

Well, the first part of the question – what if people hold desires that are sadistic or bigoted – is one that we’ve somewhat addressed already. It’s true that the simplest version of utilitarianism counts the satisfaction of these harmful desires just as positively as the satisfaction of more benign ones. If someone feels revulsion whenever they encounter an interracial couple, for instance, then that person’s discomfort is in fact real, and does count as a reduction in their utility. And if they could get a law passed to ban interracial marriage, then the alleviation of their discomfort would in fact register as an increase in their utility (just as the axe murderer from before would get a utility boost from murdering his victim). But of course, acknowledging that one person might derive some positive utility from a particular act doesn’t mean that the act is an overall positive in the broadest sense. As much utility as our hypothetical racist might derive from banning interracial marriage, it would be far more of a decrease in utility for all the interracial couples who would no longer be able to get married – so in global terms, it would still be a major utility reduction. In other words, you wouldn’t be able to call it morally good; at most, all you could say is that it would be good for the racist who no longer had to experience as much discomfort.

That being said, though, we can make this example more difficult. Let’s imagine, for instance, that we aren’t talking about just one racist individual, but a whole society. Say there’s an entire country that forbids interracial marriage, because its populace mistakenly fears that legalizing it would destroy their society. What’s more, let’s imagine that there’s no one within this country who actually wants to marry outside their race – so no one’s utility is being reduced by the ban (at least not directly). So far, then, it seems like maintaining the ban would meet our definition of being morally good, right? Everyone’s preferences are being met and no one’s utility is being reduced. But is it really right to say that encouraging racism would be good, even in a society where everyone approves of it? After all, as much benefit as they might derive from banning interracial marriage, maybe they’d be even better off if they learned not to feel revulsion towards people of different races in the first place. Their conscious, explicit preference might still be to maintain their racism, but wouldn’t they actually gain more utility from not having such toxic feelings at all? Wouldn’t the real utilitarian thing to do here be to go against the action that the people have ascribed the most subjective value to?

I think this is a valid question. So let’s imagine that one day, the leader of our hypothetical country (who, let’s assume, has been legitimately democratically elected and entrusted with making these kinds of judgments for the good of the populace) decides to go against the ostensible will of the people and launch a campaign pushing for the legalization of interracial marriage – which ultimately succeeds, and to everyone’s surprise (except the leader’s), doesn’t end up destroying their society at all, but actually improves it significantly. Lonely single people are suddenly able to find spouses, extended families are enriched by the opportunity to discover new perspectives from their new in-laws, and so on. The thing that everyone had regarded as bad for them actually ended up providing a significant increase in their utility. In other words, they were wrong about what was best for them.

(If you want to consider a different example of this, you might instead imagine a misguided populace wanting to start a disastrous war with a neighboring country or something (in a way that would be totally self-destructive), with their leaders being the only ones who realize this and want to avoid it.)

How, then, does that fit into our system? This is the second part of our question from before – what if people hold desires that don’t actually maximize their own utility? Utilitarianism is supposed to be all about satisfying people’s preferences, right? So what if their preferences are flawed, and the amount of goodness they ascribe to a potential outcome doesn’t actually correspond with the amount of goodness that that outcome would produce for them? On what basis could our hypothetical political leader justify legalizing interracial marriage as a moral act if nobody actually considered it a good thing? Sure, we could say that it was a good thing after the fact, once everybody came around and started ascribing a positive value to it rather than a negative one – but what about before then? How could we say that it would be good to take a certain action if doing so would go against everyone’s subjective valuations? Is goodness defined by those subjective valuations, or isn’t it?

This isn’t just a one-off problem, either, nor one exclusive to national governance; it can apply to all kinds of different situations. If you see someone about to cross the street, oblivious to the bus that’s heading straight for them, would it be good to stop them from crossing even though their explicit preference is to cross? If you see someone about to drink a beverage, oblivious to the fact that it’s actually poison, would it be good to stop them from drinking it even though they’ve ascribed more goodness to drinking it than to remaining thirsty? If goodness is nothing but a subjective valuation that people ascribe to things, then how can going against those subjective valuations be considered good for them?

As you might have already figured out, this question isn’t actually as problematic as it seems. Yes, it’s true that goodness is defined by people’s subjective valuations; an act can’t be good unless someone judges it to be good. And it’s true that people’s judgments of what’s good – their conscious preferences – don’t always match up with what they’d actually prefer if they knew better. But the thing is, the explicit object-level preferences that people have toward their immediate situations aren’t the only preferences that people hold. They also have meta-preferences – i.e. preferences about their preferences – and in situations like the ones above, those higher-order meta-preferences can supersede their object-level preferences. So for instance, if you were about to cross the street, your object-level preference might be to cross freely without anyone stopping you; but at the same time, you’d have a meta-preference that if you were wrong in your assumption that crossing freely would be the most utility-maximizing outcome for you (like if there were an oncoming bus about to run you over), you’d actually prefer for someone to step in and stop you from crossing. Similarly, if you were about to drink a beverage, you might have an object-level preference to drink it, but you’d also have a higher-order preference that if your object-level preference would actually be harmful in some way you didn’t anticipate (like if the beverage were poisoned), then someone should intervene and stop you. 
In other words, whatever your object-level preference is toward the specific situation you’re in, your meta-preference will always be that if there’s an alternative outcome that would maximize your utility more than your object-level preference would, then your misguided object-level preference should be disregarded; and if there’s someone around who has a better understanding of the situation and is in a position where they can overrule your misguided preference, then your meta-preference will be to defer to them and let them act on your behalf. The outcome of having your misguided preferences overruled, even if it frustrates you at the object level, is the one that you actually ascribe the greatest value to overall. So in this way, your meta-preference is a kind of Master Preference – a preference over all other preferences – which simply says that no matter what, you will always prefer outcomes that maximize your utility, even if you aren’t explicitly aware beforehand that they will do so.

(If this all seems trivially obvious to you, well, I’m glad you think so, because this concept will do quite a bit of heavy lifting for us later on.)

One of the crucial elements of this Master Preference (which, I should note, has been proposed in a few slightly different forms before – e.g. John Harsanyi’s concept of “manifest” preferences vs. “true” preferences, Eliezer Yudkowsky’s concept of coherent extrapolated volition, etc.) is that, like many other preferences, you can hold it without ever explicitly affirming that you hold it – or, for that matter, even consciously realizing that you hold it. At no point is it necessary for you to acknowledge (even to yourself) that you’d prefer an outcome like “being deprived of a beverage against your will” to one like “dying because you didn’t realize your beverage was poisoned”; all that’s necessary for such a preference to exist is that you actually would get more value out of the first outcome happening than the second outcome happening. The mere fact that you’d derive more value from the first situation than the second one is what would constitute the first one being preferable to you in the first place. And in that sense, simply the fact that you have any preferences at all (whether conscious or unconscious) means that you must necessarily hold this Master Preference, because all other preferences – merely by virtue of being preferences – are automatically subsumed by it. Even if you were the kind of stubborn contrarian who would explicitly deny having the Master Preference, claiming that you’d never want any of your object-level preferences overruled for any reason, you still wouldn’t be able to get away from it – because the principle of never wanting your object-level preferences overruled is itself a preference that might turn out to be misguided; and if there were ever a situation in which having that preference overruled would be preferable to you, then the Master Preference would supersede it. 
(Granted, you might never encounter such a situation – maybe by sheer luck, your valuations really would always be perfect – but that wouldn’t change the fact that the Master Preference would still remain in effect for you; it simply wouldn’t ever require anyone to act on it.) To deny that you’d ever want your object-level preferences overruled if doing so would give you greater utility would be an incoherent self-contradiction – it’d be like saying that what was preferable to you wasn’t preferable to you.

It’s for this same reason, by the way, that if someone’s judgment is being impaired somehow, utilitarianism doesn’t necessarily require that all their object-level preferences be met. That is, if someone is (say) in the grip of a crippling drug addiction, it isn’t automatically a good thing to give them more drugs – even if they say that’s what they want – because they’ll be far better off if they can break that addiction and come out of their impaired state of consciousness. That’s the outcome that would actually be preferable to them, even if they don’t realize it at the time. Similarly, if someone suffers brain damage and loses the ability to make good decisions for themselves, the best thing to do isn’t just to satisfy whatever misguided preference they might assert; it’s to do what actually maximizes their utility. This is also true for people who are born with certain cognitive disabilities, who never have the capacity for making fully informed decisions in the first place; merely the fact that they’re capable of considering some states of their existence better than others is all it takes for the Master Preference to apply to them, and to therefore make it morally good for them to be treated well (even if they never consciously understand that that’s what they want). And in fact, this is even true for animals, despite the fact that they lack the biological capacity for human-level judgment altogether; again, merely the fact that they’re capable of preferring some outcomes to others is enough to mean that the Master Preference applies to them, and that maximizing their utility is morally better than not doing so – regardless of whether they can ever fully understand what would be best for them or why. 
In other words, even if your family dog really wanted to run out into busy traffic – maybe because she saw a rabbit on the other side of the highway or something – it would be morally better not to let her, because getting hit by a car would be such a terrible outcome for her (and would certainly be an outcome she’d want to avoid if she were aware of it). Likewise, even if she didn’t understand the nutritional content of the food you were feeding her – and didn’t know that it would be in her best interest to prefer the healthy kibble over the kibble-flavored asbestos – it would still be morally better to feed her the healthy kibble than the asbestos. Merely her ability to instinctively want what’s best for herself is all it would take to reify that preference as a moral consideration.

I want to talk a little more about how these ideas can apply to non-humans; but before I do, I should clarify a few more conceptual points. First of all, if it wasn’t clear already, when we talk about people having preferences and wanting to maximize their utility, that doesn’t necessarily just mean wanting to selfishly do things that are only good for themselves. A lot of people derive immense satisfaction from things like helping others and abstaining from worldly pleasures – so for those people, the thing that would maximize their utility might not necessarily be a purely hedonistic lifestyle; it might be to spend their lives doing charity work or some other form of public service instead. Similarly, there are some instances in which a person’s preference isn’t to enhance their own well-being at all, but to sacrifice it (if necessary) for the sake of something they value more. (Think about a parent sacrificing their time or their health – or even their life – for their children, for example.) So when we talk about calculating net utilities and figuring out what would best meet people’s interests, we have to account for the fact that people don’t always prefer to just benefit themselves alone. Sometimes, the best way to help a particular person is actually to help others.

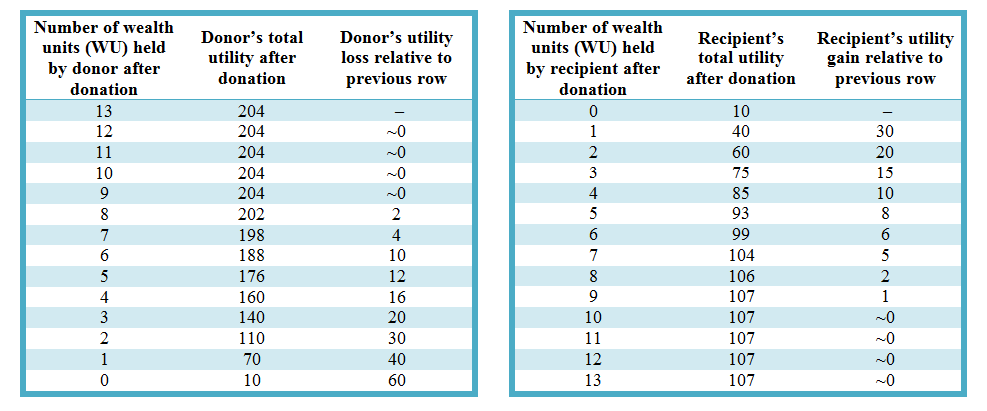

On that same note, because some people’s preferences end up producing greater benefits beyond themselves than other people’s preferences do, we have to make sure to incorporate those second-order effects into our utilitarian calculus when we weigh people’s preferences against each other. For example, if we were in some kind of scenario where we had to choose between releasing a sadistic serial killer from prison and releasing a compassionate aid worker who had also been imprisoned, then even though both of them might value their freedom equally highly, it’s clear that freeing the aid worker would produce more goodness overall due to all the positive utility they’d bring to others later on – so the utilitarian choice would be to free the aid worker. Or to take a slightly less obvious example, if we decided to donate a bunch of money to charity, it wouldn’t be right to assume (as a lot of people unfortunately seem to do) that our donation would be equally good regardless of which charity we actually donated to or how much utility the charity produced relative to other charities; the most utilitarian thing to do would be to figure out exactly which charities were doing the most good per dollar, and donate to one of those. As I’ve been emphasizing all along, the best actions within utilitarianism are those that produce the highest level of goodness within the most all-inclusive possible context – the full state of the universe and all the sentient beings within it.
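To make that bookkeeping a little more concrete, here's a minimal sketch in Python. Everything in it is an assumption made up for illustration: the `Option` class, the `best_option` and `good_per_dollar` helpers, and especially the numbers, which are placeholders rather than real measurements of anything. The only point is to show the shape of the reasoning: an option's total utility is its direct effect plus its second-order effects on everyone else, and charities are compared by good done per dollar rather than being treated as interchangeable.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """A hypothetical choice, scored in made-up utility units."""
    name: str
    direct_utility: float      # utility to the person directly affected
    downstream_utility: float  # second-order utility to everyone else

    @property
    def total_utility(self) -> float:
        # The utilitarian calculus counts both kinds of effect.
        return self.direct_utility + self.downstream_utility


def best_option(options):
    """Return the option with the highest total utility."""
    return max(options, key=lambda option: option.total_utility)


# Both prisoners are assumed to value their freedom equally (10 units each);
# only the assumed downstream effects differ.
options = [
    Option("free the serial killer", direct_utility=10.0, downstream_utility=-50.0),
    Option("free the aid worker", direct_utility=10.0, downstream_utility=40.0),
]
print(best_option(options).name)


def good_per_dollar(utility_produced: float, dollars_spent: float) -> float:
    """Utility produced per dollar donated (illustrative units)."""
    return utility_produced / dollars_spent


# Hypothetical charities: (utility produced, dollars spent).
charities = {
    "Charity A": (200.0, 1000.0),
    "Charity B": (900.0, 1000.0),
}
best_charity = max(charities, key=lambda name: good_per_dollar(*charities[name]))
print(best_charity)
```

In real life, of course, nobody hands us these numbers. Estimating them is the hard part, and the sketch only illustrates what we'd do with them if we had them.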

With all these clarifications out of the way, then, let’s take a step back and return to our original question of whether it’s possible to say that things can be objectively good or bad – and let’s apply it to the whole global state of affairs. Is it possible to coherently talk about “moral progress” in the world? Can we really say that our species’ moral behavior has improved (or can improve) in any way over the course of history? A moral relativist might argue that it’s impossible to do so – that morality is entirely relative to its cultural context, and that what’s good in one era might be bad in another era. But within the utilitarian system we’ve set up here, we can say decisively that moral progress is possible. States of the world that have higher levels of utility are objectively better than states of the world that have lower levels of utility – regardless of era, and regardless of context; and to whatever extent we manage to bring our actions into alignment with what would bring about the highest level of global goodness, that’s the extent to which we’re making moral progress as a species. If we implement some new policy or program that makes everyone happier, that’s obviously a good thing. If we implement a policy that has some drawbacks and makes some people unhappy, but still does more good than harm overall, then that may not be ideal, but it can still be considered a positive. But even if we implement a policy that everyone seems to be against (at least at the object level), like legalizing interracial marriage in our hypothetical country from before, it can still be a good thing overall if it ultimately increases the people’s utility, because then it would still satisfy their Master Preference, which is the true measure of their well-being in the end.

Of course, I should caution here against abusing this idea of the Master Preference – because it really is easy to abuse if not understood properly. History is tragically rife with examples of people harming and oppressing others and claiming that it’s “for their own good.” But just because people have a meta-preference that their object-level preferences should be overruled if it would better increase their utility doesn’t mean that it would naturally be a good thing for us (or anyone else) to forcefully impose our preferred way of life on everyone else just because we think it would be better for them. The only way we could call such an intervention good would be if it actually were better for them (which forceful actions hardly ever are); otherwise, it would most definitely be a bad thing. (And I should point out that one of the biggest reasons of all why an intervention might not be morally acceptable is the simple fact that respecting people’s explicit wishes is such an important norm in itself – so even if a particular intervention seemed otherwise worthwhile, the mere fact that it would undermine this norm might still keep it from being justifiable.) In short, then, while it is in fact possible to make moral progress in the world, there’s a difference between actually making moral progress in the world, and causing things to become worse in the name of making moral progress. It’s critically important, when making any kind of major moral decision, to keep this distinction in mind – and to have enough humility to recognize that if you think somebody’s object-level preferences should be disregarded for the sake of upholding their Master Preference, it’s always possible that you may actually be the one whose preferences are misguided and ought to be overruled, both for your own sake and for the sake of those who will be affected by your actions.

Speaking of acting responsibly toward those who are less powerful, though, let’s quickly finish up that point about applying these ideas to non-human species before we move on to anything else. For simplicity, I’ve mostly been referring to humans up to this point – but really, when I talk about fulfilling individuals’ preferences and maximizing their utility and so on, I’m talking about sentient beings in general, which can include both human and non-human species.

A lot of moral theories don’t leave any room for non-human species at all. As Tyler Cowen writes:

Some frameworks, such as contractarianism, imply that animal welfare issues stand outside the mainstream discourse of ethics, because we have not and cannot conclude agreements, even hypothetical ones, with (non-domesticable) animals. There is no bargain with a starling, even though starlings seem to be much smarter than we had thought.

Likewise, many religious conceptions of morality don’t include any consideration for animals, on the basis that animals lack souls and are therefore entitled to no more moral consideration than plants or rocks.

But under the system I’ve been laying out here – in which the goodness or badness of an action is defined simply by how much value is ascribed to it by subjective agents – animals do have to count for something, because just like humans, they too are capable of preferring some outcomes to others. Granted, those preferences might be purely implicit; a cat might never explicitly articulate that it prefers one brand of cat food to another, or that it prefers playing with a ball of yarn to being mauled by a larger animal. But as we’ve already established, preferences don’t have to be articulated in order to exist; a human infant, for instance, doesn’t have to be capable of speech (or even particularly complex thought) in order to prefer being fed to going hungry. Merely the fact that a being (whether human or non-human) is capable of preferring some outcomes to others means that its interests must necessarily count for something in the moral calculus.

I think most of us understand this intuitively; it’s hard to find someone who thinks there’s nothing wrong with torturing kittens, for instance. Still, a lot of people will try to selectively deny their intuitions on this point (usually as a means of defending their carnivorous diets) by arguing that animals aren’t actually capable of holding mental states at all, but are basically just automata, and that their behavior is purely robotic. Sam Harris provides a good response to this argument:

What is it like to be a chimpanzee? If we knew more about the details of chimpanzee experience, even our most conservative use of them in research might begin to seem unconscionably cruel. Were it possible to trade places with one of these creatures, we might no longer think it ethical to so much as separate a pair of chimpanzee siblings, let alone perform invasive procedures on their bodies for curiosity’s sake. It is important to reiterate that there are surely facts of the matter to be found here, whether or not we ever devise methods sufficient to find them. Do pigs led to slaughter feel something akin to terror? Do they feel a terror that no decent man or woman would ever knowingly impose upon another sentient creature? We have, at present, no idea at all. What we do know (or should) is that an answer to this question could have profound implications, given our current practices.

All of this is to say that our sense of compassion and ethical responsibility tracks our sense of a creature’s likely phenomenology. Compassion, after all, is a response to suffering – and thus a creature’s capacity to suffer is paramount. Whether or not a fly is “conscious” is not precisely the point. The question of ethical moment is, What could it possibly be conscious of?

Much ink has been spilled over the question of whether or not animals have conscious mental states at all. It is legitimate to ask how and to what degree a given animal’s experience differs from our own (Does a chimpanzee attribute states of mind to others? Does a dog recognize himself in a mirror?), but is there really a question about whether any nonhuman animals have conscious experience? I would like to suggest that there is not. It is not that there is sufficient experimental evidence to overcome our doubts on this score; it is just that such doubts are unreasonable. Indeed, no experiment could prove that other human beings have conscious experience, were we to assume otherwise as our working hypothesis.

The question of scientific parsimony visits us here. A common misconstrual of parsimony regularly inspires deflationary accounts of animal minds. That we can explain the behavior of a dog without resort to notions of consciousness or mental states does not mean that it is easier or more elegant to do so. It isn’t. In fact, it places a greater burden upon us to explain why a dog brain (cortex and all) is not sufficient for consciousness, while human brains are. Skepticism about chimpanzee consciousness seems an even greater liability in this respect. To be biased on the side of withholding attributions of consciousness to other mammals is not in the least parsimonious in the scientific sense. It actually entails a gratuitous proliferation of theory – in much the same way that solipsism would, if it were ever seriously entertained. How do I know that other human beings are conscious like myself? Philosophers call this the problem of “other minds,” and it is generally acknowledged to be one of reason’s many cul de sacs, for it has long been observed that this problem, once taken seriously, admits of no satisfactory exit. But need we take it seriously?

Solipsism appears, at first glance, to be as parsimonious a stance as there is, until I attempt to explain why all other people seem to have minds, why their behavior and physical structure are more or less identical to my own, and yet I am uniquely conscious – at which time it reveals itself to be the least parsimonious theory of all. There is no argument for the existence of other human minds apart from the fact that to assume otherwise (that is, to take solipsism as a serious hypothesis) is to impose upon oneself the very heavy burden of explaining the (apparently conscious) behavior of zombies. The devil is in the details for the solipsist; his solitude requires a very muscular and inelegant bit of theorizing to be made sense of. Whatever might be said in defense of such a view, it is not in the least “parsimonious.”

The same criticism applies to any view that would make the human brain a unique island of mental life. If we withhold conscious emotional states from chimpanzees in the name of “parsimony,” we must then explain not only how such states are uniquely realized in our own case but also why so much of what chimps do as an apparent expression of emotionality is not what it seems. The neuroscientist is suddenly faced with the task of finding the difference between human and chimpanzee brains that accounts for the respective existence and nonexistence of emotional states; and the ethologist is left to explain why a creature, as apparently angry as a chimp in a rage, will lash out at one of his rivals without feeling anything at all. If ever there was an example of a philosophical dogma creating empirical problems where none exist, surely this is one.

What really drives home Harris’s argument is the fact that (as Richard Dawkins points out) although it’s easy for us humans to imagine that we’re morally distinct from other animals because there’s such a wide gap between our relative levels of intelligence, there’s no reason why this gap necessarily had to have appeared in the first place. It’s merely an accident of evolutionary history that all the intermediate species between humans and chimps happen to have gone extinct; and it’s perfectly possible to imagine a scenario in which they’d instead all survived to this day. Imagine if that actually had been the case: What if our modern-day world included humans and Neanderthals and australopiths and chimpanzees all living alongside one another? How would we think differently about the moral status of animals if there were no clear taxonomic cutoff between them and our own species? Here’s Dawkins:

[There is a popular] unspoken assumption of human moral uniqueness. [But] it is harder than most people realise to justify the unique and exclusive status that Homo sapiens enjoys in our unconscious assumptions. Why does ‘pro life’ always mean ‘pro human life?’ Why are so many people outraged at the idea of killing an 8-celled human conceptus, while cheerfully masticating a steak which cost the life of an adult, sentient and probably terrified cow? What precisely is the moral difference between our ancestors’ attitude to slaves and our attitude to nonhuman animals? Probably there are good answers to these questions. But shouldn’t the questions themselves at least be put?