I opened the last section by asking whether the goal of morality is to maximize aggregate utility or average utility. In most situations, this isn’t really a relevant question, since each potential outcome will have the same population, so the outcome with the highest average utility and the outcome with the highest aggregate utility will be one and the same. But in scenarios that do involve population differences, there can be a significant difference; for example, if you bring a new person into the world whose utility is below average but still positive, you can increase the aggregate utility level while decreasing the average. So which standard is the right one to use? Well, I’d be remiss if I didn’t bring up Parfit’s work here, since he’s undoubtedly the most famous thinker on the subject. What he showed, though, was that just trying to use one of these standards or the other isn’t really workable. For instance, let’s say you decided to go with average utility as your metric of choice. If this were your only standard for judging goodness and badness, then you’d have to conclude that a world containing ten million people who were all suffering extreme negative utility (e.g. having to undergo constant, unending torture) would be no worse than a world containing just ten people with the same average utility levels. (Parfit calls this “Hell One vs. Hell Two.”) That can’t be right, can it? But similarly, if you decided to stick with aggregate utility instead, you’d have to conclude that a world containing ten million people whose lives were all maximally ecstatic – i.e. people who had extremely high average levels of positive utility – would be worse than a world containing trillions of people whose lives were barely worth living – i.e. people whose utility levels were barely positive, but whose population levels were so much higher that their positive utility added up to a higher sum in aggregate terms. 
(Parfit calls this “the Repugnant Conclusion.”) That doesn’t seem right either. So how do we resolve this impasse?
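To make the arithmetic behind these two standards concrete, here's a minimal Python sketch. Every number in it is invented purely for illustration, and the `(headcount, utility_per_person)` representation and the names `aggregate`, `average`, `small_hell`, `large_hell`, `ecstatic` and `barely_worth_living` are my own assumptions rather than anything taken from Parfit.

```python
def aggregate(groups):
    """Total utility of a world given as (headcount, utility_per_person) groups."""
    return sum(n * u for n, u in groups)

def average(groups):
    """Average utility: total utility divided by total headcount."""
    return aggregate(groups) / sum(n for n, _ in groups)

# Adding one person whose life is positive but below the current average.
before = [(3, 10)]
after = [(3, 10), (1, 4)]
print(aggregate(before), average(before))  # 30 10.0
print(aggregate(after), average(after))    # 34 8.5

# Two hells with the same per-person suffering but different populations.
small_hell = [(10, -100)]
large_hell = [(10_000_000, -100)]
print(average(small_hell) == average(large_hell))    # True: average can't tell them apart
print(aggregate(large_hell) < aggregate(small_hell))  # True: aggregate says the larger is worse

# A small ecstatic world versus a vast world of barely positive lives.
ecstatic = [(10_000_000, 1000)]
barely_worth_living = [(2_000_000_000_000, 1)]
print(aggregate(barely_worth_living) > aggregate(ecstatic))  # True: aggregate prefers the vast world
print(average(barely_worth_living) < average(ecstatic))      # True: average prefers the ecstatic one
```

Each standard gets one of the cases right and the other wrong, which is exactly the impasse.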

This is another one of those notorious puzzles in moral philosophy that has always seemed to evade an easy answer. Again though, as with the Asymmetry, I think the framework I’ve been describing here offers us a plausible way of addressing it (and resolving both of Parfit’s thought experiments). By distinguishing between real people and hypothetical people, it provides a basis for saying that it would be immoral to add more people to the world in both cases. In the Hell One vs. Hell Two example, we can say that it would be immoral to add more people because they would be real people who would be entitled to moral treatment, and giving them such a negative-utility life would therefore count as a negative in the global utility calculus. And in the Repugnant Conclusion example, we can say that it would be immoral to introduce a bunch of new people into the world if doing so would lower the utility levels of those who already existed, because the as-yet-hypothetical people we were considering adding wouldn’t be real, so there wouldn’t be anything wrong with not bringing them into existence; no preferences would be violated.

In both of these thought experiments, the reason the traditional average/aggregate dichotomy fails is that it frames the dilemma as a simple side-by-side comparison between the utility levels of two different universe-states. But this traditional approach overlooks the fact that in practice, it’s not actually possible to just magically change from one universe-state to another. In practice, you can only get from one population level to another by either creating new beings or killing existing ones – and both of those acts have built-in utility implications of their own. In other words, the relevant variable here isn’t just the static utility level of each universe-state and nothing else; it also matters how you go from one universe-state to the other. Morality is a wayfinding tool; it’s about finding the best path to follow, not about determining the best destination which you can then somehow miraculously teleport into. If you’re only comparing after-the-fact utility levels, you’ll miss half the picture.

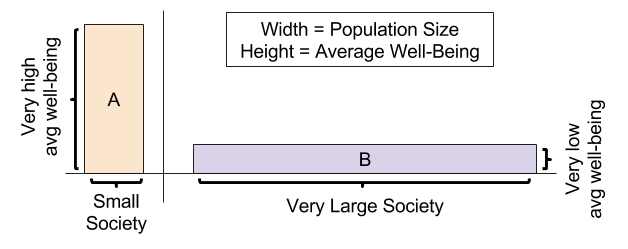

As an illustration of this point, consider that in the Repugnant Conclusion scenario, it may actually be both immoral to go from Universe A (the happy but sparsely-populated universe) to Universe B (the more populous but less happy universe), and also immoral to go from Universe B to Universe A. After all, in order to go from A to B, you’d have to reduce the utility levels of the already-existing population to introduce the new people, and our model wouldn’t condone that. But in order to go from B to A, you’d have to kill off most of the population to raise the utility of the survivors, and our model most definitely wouldn’t condone that either. If your only way of thinking about morality was in terms of aggregate utility levels, you might consider B to be unconditionally better than A – and so you might consider it perfectly moral to immiserate A’s population in order to bring about a universe more like B. Likewise, if your only way of thinking about morality was in terms of average utility levels, you might consider A unconditionally better than B – and so you might consider it perfectly moral to kill off most of B’s population in order to raise the survivors’ satisfaction levels to those of A. But under the system I’ve been describing here, the morality of the situation would depend crucially on which universe you were starting off in; the moral asymmetry between bringing people into existence and taking them out of existence would mean that the morality of going from one universe to another wouldn’t necessarily be reversible. So to try and make a moral judgment about which universe was better in an isolated side-by-side comparison, without any indication as to what the starting conditions were, would be like trying to determine which direction an object was moving in, and how quickly, without knowing anything except the object’s current position. 
The morally relevant question here, in short, wouldn’t be whether one static universe-state was better than another in a vacuum – it would be whether the act of going from one universe-state to another would be good or bad – and that answer might be totally different depending on which universe-state was the starting point. Sure, we might rightly be able to say that in some given universe, the average utility level would be higher if all the people who were less-satisfied-than-average with their lives had never been born; but in practice, we wouldn’t have any way of actually getting to that lower-population alternate universe with its higher utility levels – only to an alternate universe in which the population was lower because all those people had been killed and global utility had therefore been massively reduced. To use a physics analogy, the higher-average-utility universe would be outside of our moral light cone, so to speak. (Or to use a chess analogy, it would be like trying to arrive at some board configuration that would be impossible to ever legitimately arrive at according to the rules of the game.) So the answer to the question of whether it would be moral to try to create that higher-average-utility universe anyway (by killing all those people) would be a decisive no, regardless of whether the after-the-fact utility levels would seem more favorable in a vacuum. Again, just because it would be immoral to go from Universe A to Universe B doesn’t automatically mean that going from Universe B to Universe A would be moral. The moral asymmetry between creating and destroying life is critical here.

When it comes to this question of aggregate vs. average utility, then, there is in fact a way of reconciling the apparent disparities and resolving both the Hell One vs. Hell Two dilemma and the Repugnant Conclusion dilemma at the same time. But it requires cutting the Gordian knot of the average/aggregate dichotomy outright, and recognizing that it was never actually the right way to frame these dilemmas in the first place. The actual appropriate standard for whether it would be morally permissible to go from one particular universe-state to another, again, is simply whether doing so would maximize the utility of the sentient beings that already existed or were expected to exist. And so in this sense, the real dichotomy by which we should be judging these kinds of population-related decisions isn’t average utility vs. aggregate utility; it’s hypothetical preferences vs. real preferences.
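The path-dependence of this standard can be sketched as a toy Python model. It rests on loud simplifying assumptions of my own: each world is a dictionary mapping a (hypothetical) person's name to a utility number, a person who is removed is treated as losing their whole starting utility, and a newcomer counts only if their life would be negative. The function name `net_change` and all the figures are illustrative, not a definitive formalization.

```python
def net_change(start, end):
    """Utility change of moving from `start` to `end`, judged from `start`.

    Assumptions (all illustrative):
    - people already in `start` count in full; if they are missing from
      `end` they have been killed and lose their whole starting utility;
    - people who appear only in `end` are hypothetical from the starting
      point, so a positive life adds nothing, while a negative life
      counts against the move.
    """
    change = 0
    for person, utility in start.items():
        change += end.get(person, 0) - utility
    for person, utility in end.items():
        if person not in start:
            change += min(0, utility)
    return change

# Universe A: few people, very happy. Universe B: more people, less happy.
universe_a = {"ann": 100, "bob": 100}
universe_b = {"ann": 60, "bob": 60, "cat": 60, "dan": 60, "eve": 60, "fay": 60}

print(net_change(universe_a, universe_b))  # -80: A's residents are made worse off
print(net_change(universe_b, universe_a))  # -160: four of B's residents are killed
```

Both directions come out negative, so neither move is endorsed; which universe you start in determines what the move costs, and a side-by-side comparison of the two end states never captures that.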

Now, before I move on from this point, I should mention that Parfit does have an additional variation on his Repugnant Conclusion example that’s relevant to this discussion, which he calls the Mere Addition Paradox. The basic gist of it (as summarized by Wikipedia) is as follows:

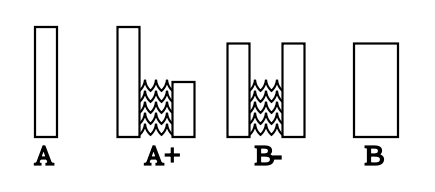

> Consider the four populations depicted in the following diagram: A, A+, B− and B. Each bar represents a distinct group of people, with the group’s size represented by the bar’s width and the happiness of each of the group’s members represented by the bar’s height. Unlike A and B, A+ and B− are complex populations, each comprising two distinct groups of people. It is also stipulated that the lives of the members of each group are good enough that it is better for them to be alive than for them to not exist.
>
> How do these populations compare in value? Parfit makes the following three suggestions:
>
> 1. A+ seems no worse than A. This is because the people in A are no worse-off in A+, while the additional people who exist in A+ are better off in A+ compared to A, since it is stipulated that their lives are good enough that living them is better than not existing.
>
> 2. B− seems better than A+. This is because B− has greater total and average happiness than A+.
>
> 3. B seems equally as good as B−, as the only difference between B− and B is that the two groups in B− are merged to form one group in B.
>
> Together, these three comparisons entail that B is better than A. However, Parfit also observes the following:
>
> 4. When we directly compare A (a population with high average happiness) and B (a population with lower average happiness, but more total happiness because of its larger population), it may seem that B can be worse than A.
>
> Thus, there is a paradox. The following intuitively plausible claims are jointly incompatible: (1) that A+ is no worse than A, (2) that B− is better than A+, (3) that B− is equally as good as B, and (4) that B can be worse than A.

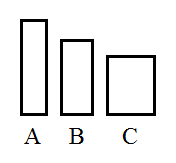

You might not actually share the intuition that B could be worse than A, but if you don’t, consider that the same chain of logic that took us from A to B could just as easily be applied to B to take us to yet another universe called C, with an even larger population and lower quality of life:

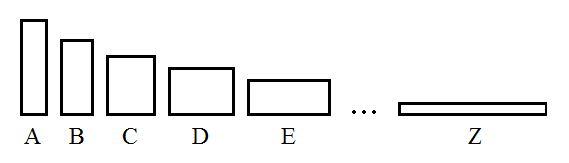

And then we could go from C to D, and D to E, and so on – making our way through the entire alphabet – until we finally reached a universe Z, with an extremely large population, but where life was barely worth living at all. At this point, it seems very hard to accept that this universe Z would be better than the original universe A (with its lower population but vastly higher quality of life) – hence the name of the idea we find ourselves having returned to: the Repugnant Conclusion.
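For a rough sense of how the numbers behave along this chain, here's a small Python sketch. The source gives no figures, so everything here is invented: the headcounts, the utility values, and the rule that each step doubles the population while cutting per-person utility by a fifth are all assumptions chosen only to match the qualitative shape of the argument.

```python
import string

def total(groups):
    """Total happiness of a world given as (headcount, utility_per_person) groups."""
    return sum(n * u for n, u in groups)

def average(groups):
    """Average happiness per person."""
    return total(groups) / sum(n for n, _ in groups)

# Hypothetical numbers for the four populations in the quoted summary.
worlds = {
    "A":  [(100, 10)],
    "A+": [(100, 10), (100, 4)],  # original group untouched, extra group added
    "B-": [(100, 8), (100, 8)],   # higher total and average than A+
    "B":  [(200, 8)],             # B- with its two groups merged
}
for name, groups in worlds.items():
    print(name, total(groups), average(groups))

# Repeat the same move all the way from A to Z: each step doubles the
# population and multiplies per-person utility by 0.8 (assumed rule).
chain = {
    letter: [(100 * 2**k, 10 * 0.8**k)]
    for k, letter in enumerate(string.ascii_uppercase)
}
print(total(chain["A"]), average(chain["A"]))
print(total(chain["Z"]), average(chain["Z"]))
```

Under these made-up figures the total rises at every step while the average falls at every step, so by Z the total is enormous and each individual life is only barely positive.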

The important thing to note about this paradox, of course, is that it only really seems paradoxical because it switches its definition of “better” halfway through the thought experiment. If you were judging the scenario solely in terms of average utility, Universe A would be unequivocally better than all the other universes; and if you were judging it solely in terms of aggregate utility, Universe Z would be the best. By trying to appeal to both standards at the same time, the Mere Addition Paradox creates what might seem to be a puzzling contradiction. But once we figure out an appropriate standard and stick to it, it becomes clear that there’s actually nothing paradoxical about the scenario at all. More specifically, if we apply the system I’ve been describing here, then simply knowing how each step in the sequence would lead to the next one would be sufficient grounds to nip the whole progression in the bud. We could say decisively that if we were starting from A, the act of creating a bunch of new people and thereby going to A+ would be immoral, because doing so would ultimately result in A’s original inhabitants having their utility reduced when A+ inevitably progressed to B− and B. True, if we didn’t know that A+ would eventually progress to B, then that would be a different story; remember, morality is based on expected utility, so if we didn’t expect any utility reduction for A’s existing inhabitants, then there wouldn’t be any basis for considering it immoral to introduce the new people of A+. But the fact that the situation would eventually progress to B− and B, and would thereby cause an unexpected utility reduction for A’s original inhabitants, doesn’t show that there’s anything paradoxical going on here; all it shows is that sometimes our utility estimates are inaccurate, and actions that we expect to be utility-positive or utility-neutral for ourselves or others sometimes turn out to be utility-negative, or vice versa. 
That’s not a problem with our utility calculus – it’s just regular old human fallibility. Like we discussed earlier, it’s the difference between an act being good and an act being moral (i.e. expected to be good).